1 Introduction

Experimental sciences have traditionally been a source of challenging mathematical problems with a double edge: while mathematical theories are created, technology moves fast and industry develops. Imaging sciences provide a remarkable example. Typical imaging systems, such as radar [28 S. W. McCandless and C. R. Jackson, Principles of synthetic aperture radar. In SAR Marine Users Manual, NOAA (2004) ], magnetic resonance tomography, ultrasound, echography [25 A. Maier, S. Steidl, V. Christlein, J. Hornegger, Medical imaging systems: An introductory guide. Springer (2018) ], and seismic imaging [34 J. Tromp, Seismic wavefield imaging of Earth’s interior across scales. Nature Reviews Earth & Environment 1, 40–53 (2020) ], pose inverse scattering problems with a similar mathematical structure. In all of them, waves generated by a set of emitters interact with a medium under study and the wave field resulting from the interaction is recorded at a set of receivers [10 D. Colton and R. Kress, Inverse acoustic and electromagnetic scattering theory. Appl. Math. Sci. 93, Springer, Berlin (1992) ]. Different imaging systems resort to different types of waves and arrange emitters and receivers according to varied geometries. The nature of the employed waves depends on factors such as the size of the specimens under study, the contrast between components, and the damage caused to the sample during the imaging procedure. Knowing the emitted and recorded waves, we aim to infer the structure of the medium.

Approximating the solutions of inverse scattering problems is a challenging task because such problems are severely ill posed [10 D. Colton and R. Kress, Inverse acoustic and electromagnetic scattering theory. Appl. Math. Sci. 93, Springer, Berlin (1992) ]. Given arbitrary data, the problem under study may not admit a solution, the solution may not be unique, or it may not depend continuously on the given data. This means that small errors may lead to a solution different from the searched one. In view of the relevant technological applications in a host of fields, such as medicine, security, geophysics, or materials testing, to mention a few, there is a need of even better mathematical techniques for classical imaging problems, as well as a need of new ideas to tackle new imaging set-ups.

We focus here on recent developments in digital holography, summarizing work done during the past 10 years in collaboration with experimentalists designing holographic microscopes. This collaboration started in 2012 thanks to the interdisciplinary communication environment created at the Harvard University’s Kavli Institute seminars. Since then, we have developed analytical and computational tools to handle inverse problems arising in digital holography, in collaboration with researchers from Harvard University and Tesla, Universidad Complutense de Madrid, Universidad Politécnica de Madrid, Universidad de Oviedo, Université de Technologie de Compiègne, and New York University.

Digital in-line holography is a noninvasive tool for accelerated three-dimensional imaging of soft matter and live cells [23 S. H. Lee, Y. Roichman, G. R. Yi, S. H. Kim, S. M. Yang, A. van Blaaderen, P. van Oostrum and D. G. Grier, Characterizing and tracking single colloidal particles with video holographic microscopy. Optics Express 15, 18275–18282 (2007) , 16 J. Fung, R. P. Perry, T. G. Dimiduk and V. N. Manoharan, Imaging multiple colloidal particles by fitting electromagnetic scattering solutions to digital holograms. J. Quant. Spectroscopy Radiative Transfer 113, 212–219 (2012) , 26 P. Marquet, B. Rappaz, P. J. Magistretti, E. Cuche, Y. Emery, T. Colomb and C. Depeursinge, Digital holographic microscopy: a noninvasive contrast imaging technique allowing quantitative visualization of living cells with subwavelength axial accuracy. Optics Letters 30, 468–478 (2005) , 37 A. Yevick, M. Hannel and D. G. Grier, Machine-learning approach to holographic particle characterization. Optics Express 22, 26884–26890 (2014) ] that achieves high spatial (nanometers) and temporal (microseconds) resolution without the need of toxic fluorescent markers or stains. In this context, a hologram is a two-dimensional light interference pattern encoding information about the optical and geometrical properties of a set of objects [35 T. Vincent, Introduction to holography. CRC Press (2012) ]. Shining a properly chosen light beam back through the hologram we can recreate the original three-dimensional image. Instead, digital holography is designed to produce numerical reconstructions of the objects in an automatic way, which amounts to solving computationally an inverse scattering problem. We will show next that optimization schemes with partial differential equation constraints, analysis of the topological derivative of objective functions, regularized Gauss–Newton iterations, and Bayesian inference are effective tools to invert holographic data in the presence of noise while quantifying uncertainty.

2 The forward problem

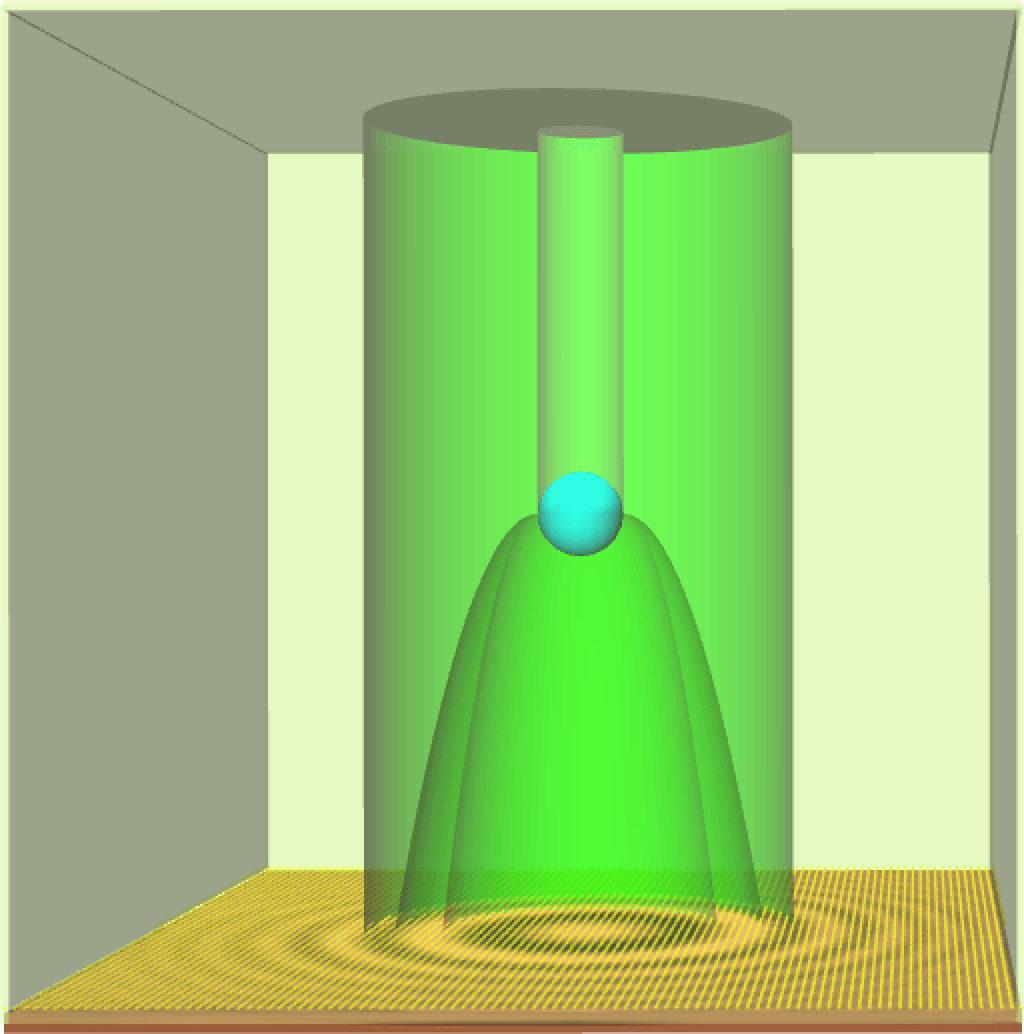

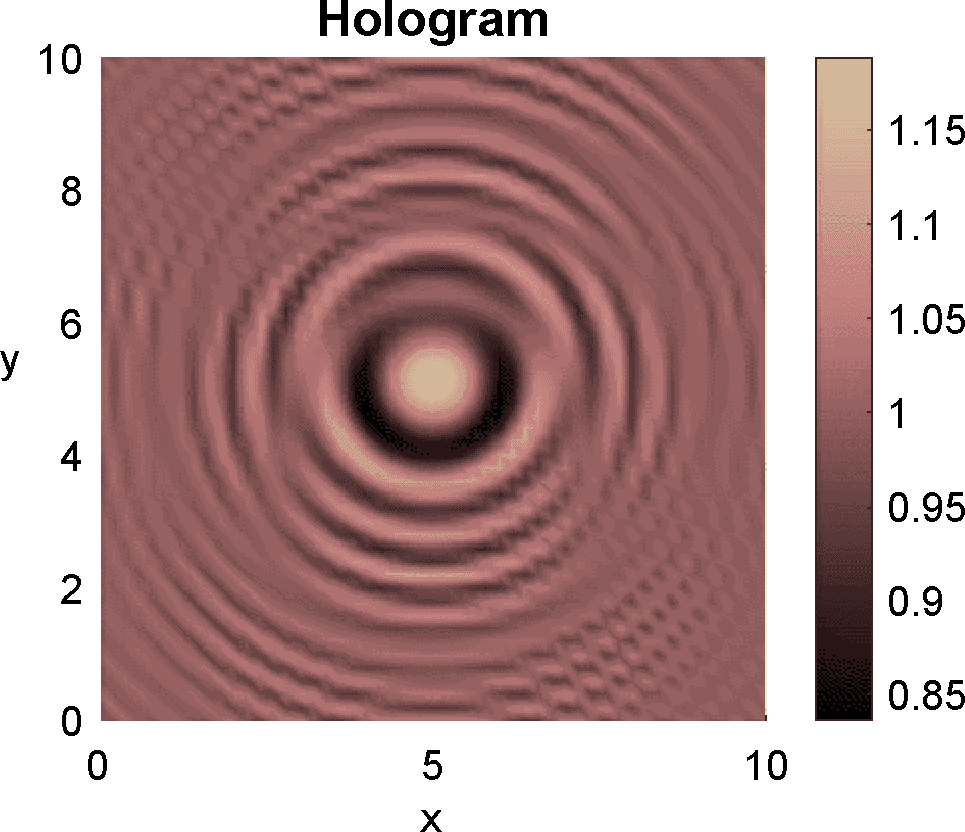

The forward problem is a mathematical model of how a hologram is generated. Figure 1 illustrates how an in-line hologram is formed, though more complicated set-ups are possible. First, a laser light beam interacts with a sample. Then, interference of the scattered light field with the undiffracted beam generates the hologram on a detector screen past the object [23 S. H. Lee, Y. Roichman, G. R. Yi, S. H. Kim, S. M. Yang, A. van Blaaderen, P. van Oostrum and D. G. Grier, Characterizing and tracking single colloidal particles with video holographic microscopy. Optics Express 15, 18275–18282 (2007) ]. The light wave field obeys the Maxwell equations. Typically, the emitted laser beams are time harmonic, that is, . The resulting wave field is also time harmonic, namely, , with complex amplitude governed by the stationary Maxwell equations. The resulting forward problem is

where , and are the permeabilities, permittivities and wavenumbers of the imaged objects , while , and correspond to the ambient medium [3 C. F. Borhen and D. R. Huffman, Absorption and scattering of light by small particles. Wiley Sciences, John Wiley & Sons, Berlin (1998) ] and are known. In biomedical applications, , being the vacuum permeability. The upper signs and represent limit values from inside and outside , respectively, and denotes the outer unit normal vector. Incident waves are polarized in a direction orthogonal to the direction of propagation , that is, , where stands for the magnitude of the incident field.

For any smooth region and any real , system (1) has a unique solution [31 J.-C. Nédélec, Acoustic and electromagnetic equations. Appl. Math. Sci. 144, Springer, New York (2001) ] in the Sobolev space that is continuous in (see [19 P. Grisvard, Elliptic problems in nonsmooth domains. Classics Appl. Math. 69, SIAM, Philadelphia (2011) ]). For collections of spheres and piecewise-constant , one can calculate Mie series solutions [3 C. F. Borhen and D. R. Huffman, Absorption and scattering of light by small particles. Wiley Sciences, John Wiley & Sons, Berlin (1998) ]. Starshaped object parametrizations with piecewise-constant allow for fast spectral solvers [20 H. Harbrecht and T. Hohage, Fast methods for three-dimensional inverse obstacle scattering problems. J. Integral Equations Appl. 19, 237–260 (2007) , 24 F. Le Louër, A spectrally accurate method for the direct and inverse scattering problems by multiple 3D dielectric obstacles. ANZIAM J. 59, E1–E49 (2018) ]. Coupled BEM/FEM formulations [29 S. Meddahi, F.-J. Sayas and V. Selgás, Nonsymmetric coupling of BEM and mixed FEM on polyhedral interfaces. Math. Comp. 80, 43–68 (2011) , 31 J.-C. Nédélec, Acoustic and electromagnetic equations. Appl. Math. Sci. 144, Springer, New York (2001) ] are convenient for more general parametrizations, while discrete dipole approximations [36 A. Wang, T. G. Dimiduk, J. Fung, S. Razavi, I. Kretzschmar, K. Chaudhary and V. N. Manoharan, Using the discrete dipole approximation and holographic microscopy to measure rotational dynamics of non-spherical colloidal particles. J. Quant. Spectroscopy Radiative Transfer 146, 499–509 (2014) , 38 M. A. Yurkin and A. G. Hoekstra, The discrete-dipole-approximation code ADDA: Capabilities and known limitations. J. Quant. Spectroscopy Radiative Transfer 112, 2234–2247 (2011) ] solve the problem avoiding the use of parametrizations.

In principle, the hologram is obtained evaluating the solution of the forward problem (1) at detectors placed on the screen: . In practice, the measured holograms are corrupted by noise.

3 Deterministic inverse problem

Given a hologram measured at screen points , , the inverse holography problem seeks objects and functions such that

where is the solution of the forward problem (1) with an object and the wavenumber (see [5 A. Carpio, T. G. Dimiduk, F. Le Louër and M. L. Rapún, When topological derivatives met regularized Gauss–Newton iterations in holographic 3D imaging. J. Comput. Phys. 388, 224–251 (2019) ]). Since the measured data are not exact, in practice one seeks shapes and functions for which the error between the recorded hologram and the synthetic hologram that would be generated solving (1) for the proposed objects and wavenumbers is as small as possible.

3.1 Constrained optimization

We recast the inverse problem as an optimization problem with a partial differential equation constraint: find and minimizing the cost functional

where and is the solution of (1). Here and are the design variables and the stationary Maxwell system (1) is the constraint. For exact data, the true objects would be a global minimum at which the functional (2) vanishes. In general, spurious local minima may arise.

3.2 Topological derivative based approximations

A topological study of the shape functional (2) for fixed provides first guesses of the imaged objects without a priori information on them. The topological derivative of a shape functional [32 J. Sokołowski and A. Żochowski, On the topological derivative in shape optimization. SIAM J. Control Optim. 37, 1251–1272 (1999) ] quantifies its sensitivity to removing and including points in an object. Given a point in a region , we have the expansion

for any ball centered at with radius . The factor is the topological derivative of the functional at (see [32 J. Sokołowski and A. Żochowski, On the topological derivative in shape optimization. SIAM J. Control Optim. 37, 1251–1272 (1999) ]). If is negative, for small. We expect the cost functional to decrease by forming objects with points below a large enough negative threshold [9 A. Carpio and M.-L. Rapún, Solving inhomogeneous inverse problems by topological derivative methods. Inverse Problems 24, Article ID 045014 (2008) , 14 G. R. Feijoo, A new method in inverse scattering based on the topological derivative. Inverse Problems 20, 1819–1840 (2004) , 27 M. Masmoudi, J. Pommier and B. Samet, The topological asymptotic expansion for the Maxwell equations and some applications. Inverse Problems 21, 547–564 (2005) ]:

When , and , asymptotic expansions yield the formula [27 M. Masmoudi, J. Pommier and B. Samet, The topological asymptotic expansion for the Maxwell equations and some applications. Inverse Problems 21, 547–564 (2005) ]

where and

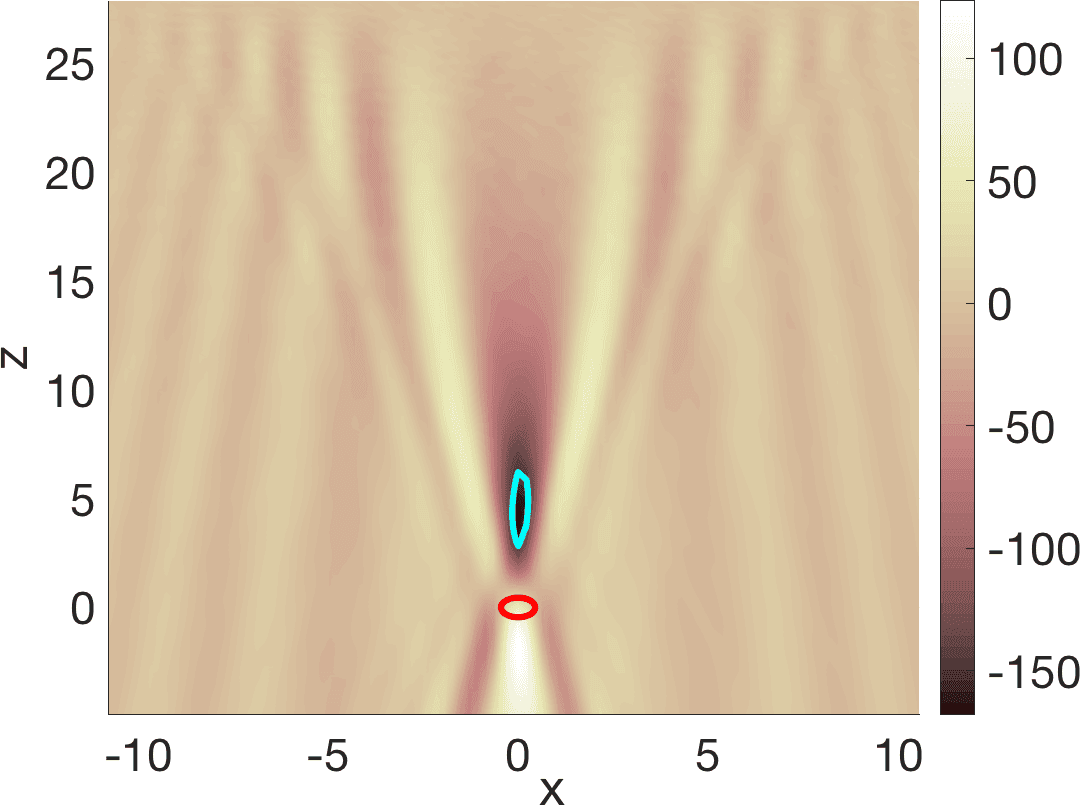

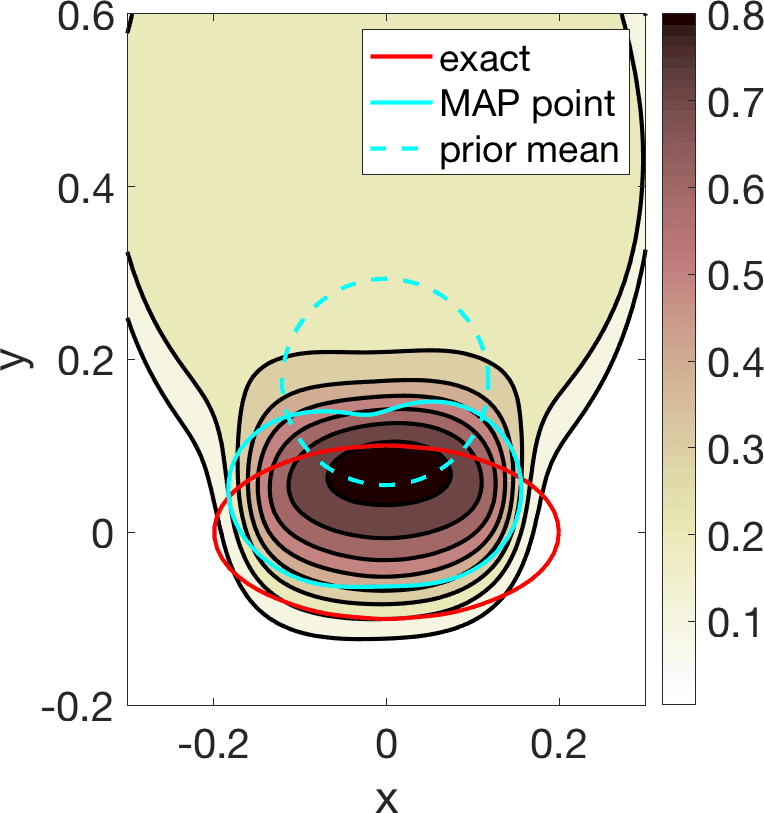

with denoting the outgoing Green function of the Helmholtz equation [31 J.-C. Nédélec, Acoustic and electromagnetic equations. Appl. Math. Sci. 144, Springer, New York (2001) ]. Once is constructed, we fit aparametrized contour to its boundary. Starshaped parametrizations are typical choices. Figure 2 exemplifies the procedure. The method is robust to noise, in the sense that perturbations of the data with random 10 % or 20 % noise, for instance, produce similar results. Notice that the value of enters through a factor that we may scale out in (5) and it is not really needed to localize the object. Similar results are obtained using the topological energy [6 A. Carpio, T. G. Dimiduk, M. L. Rapún and V. Selgas, Noninvasive imaging of three-dimensional micro and nanostructures by topological methods. SIAM J. Imaging Sci. 9, 1324–1354 (2016) ]

which does not involve at all. No knowledge of is needed to construct a first guess of the objects.

3.3 Regularized Gauss–Newton iterations

Fast methods to improve our knowledge of the objects starting from an initial guess are based on the following result. Let us consider two Hilbert spaces , and a Fréchet differentiable operator . Assuming that the exact data are attainable (that is, there is such that ), but only noisy data verifying are accessible, the iteratively regularized Gauss–Newton (IRGN) method [1 A. B. Bakushinskii, On a convergence problem of the iterative-regularized Gauss–Newton method. Zh. Vychisl. Mat. i Mat. Fiz. 32, 1503–1509 (1992) ] constructs a sequence as follows. We linearize the equation at at each step, approximate the solution of through the minimization problem

and set . The Tikhonov term has regularizing properties and promotes convergence for specific choices and (see [21 T. Hohage, Logarithmic convergence rates of the iteratively regularized Gauss–Newton method for an inverse potential and an inverse scattering problem. Inverse Problems 13, 1279–1299 (1997) ]). The theory of linear Tikhonov regularization guarantees that

where denotes the adjoint of the Fréchet derivative . The noise level affects the stopping criterion, the so-called discrepancy principle.

In a holography set-up, the map is the operator that to each parametrization of objects assigns the synthetic hologram generated by solving the forward problem for those objects. Starshaped parametrizations are a standard choice for simple objects. They describe each object by a few parameters: its center and a radius function represented by a finite combination of spherical harmonics [5 A. Carpio, T. G. Dimiduk, F. Le Louër and M. L. Rapún, When topological derivatives met regularized Gauss–Newton iterations in holographic 3D imaging. J. Comput. Phys. 388, 224–251 (2019) , 20 H. Harbrecht and T. Hohage, Fast methods for three-dimensional inverse obstacle scattering problems. J. Integral Equations Appl. 19, 237–260 (2007) ]. Given a starshaped parametrization and a recorded hologram with a level of noise , the IRGN method first solves the linearized equation

by addressing the nonlinear least squares problem

where , , is an adequate Sobolev space [5 A. Carpio, T. G. Dimiduk, F. Le Louër and M. L. Rapún, When topological derivatives met regularized Gauss–Newton iterations in holographic 3D imaging. J. Comput. Phys. 388, 224–251 (2019) ], and then sets . The initial parametrization represents the first guess of the objects constructed by topological methods. The updated objects correspond to the parametrizations . The stopping criterion for the noise level is as follows. If the synthetic hologram calculated numerically for the current approximation of the objects satisfies

we stop the algorithm, being a parameter adjusted to guarantee a reasonable approximation while preventing early stops.

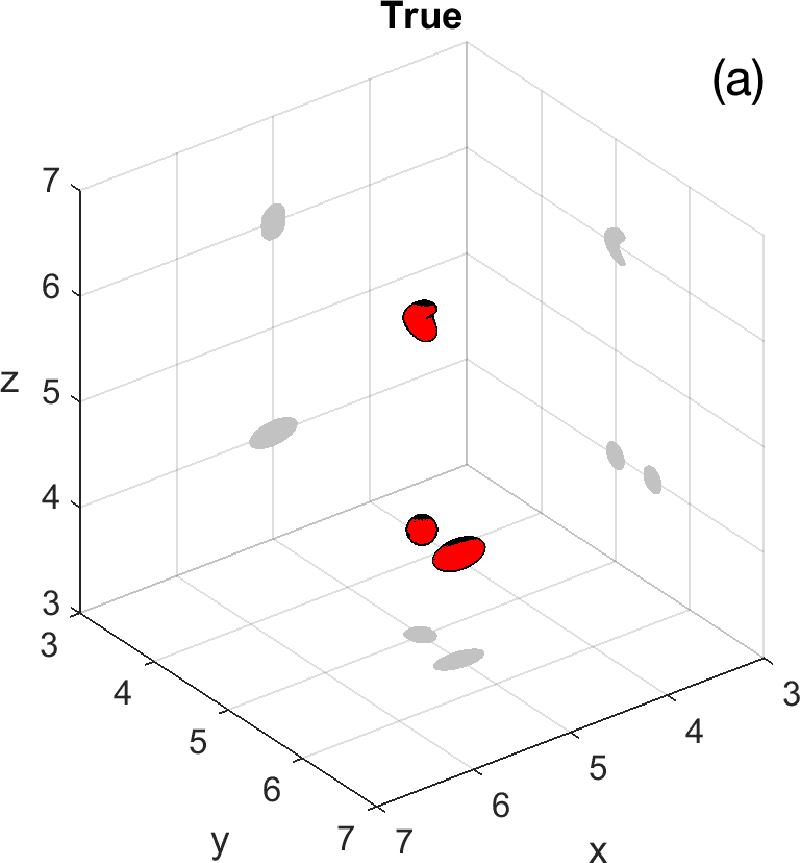

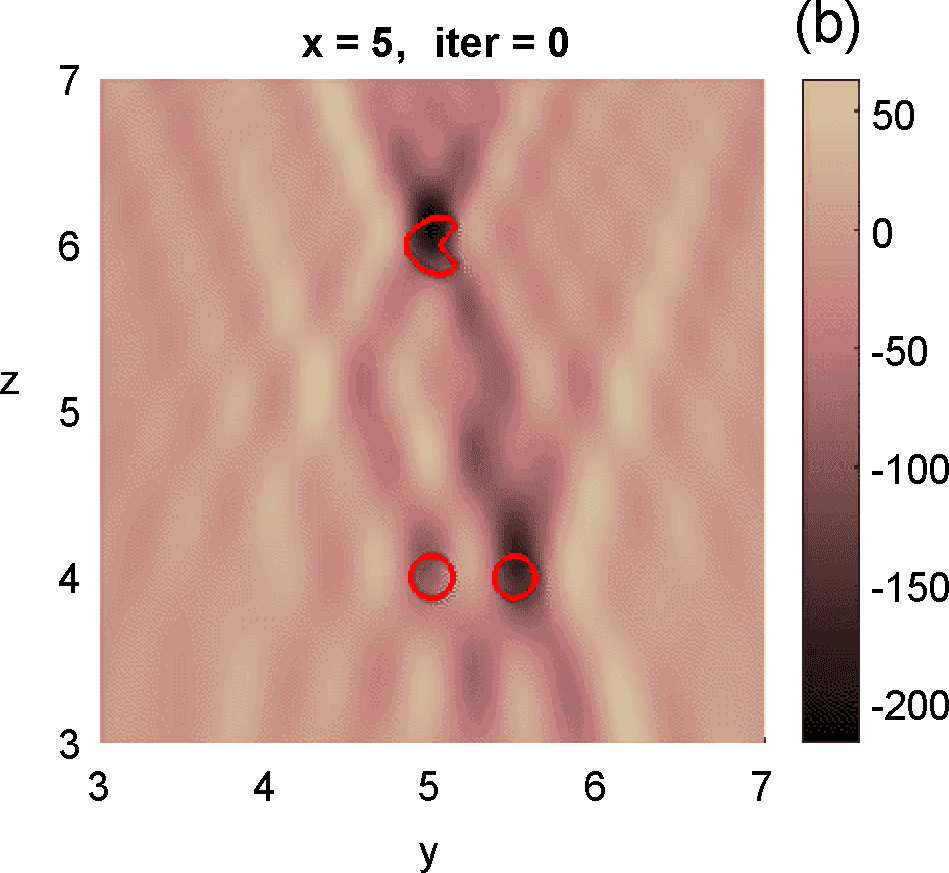

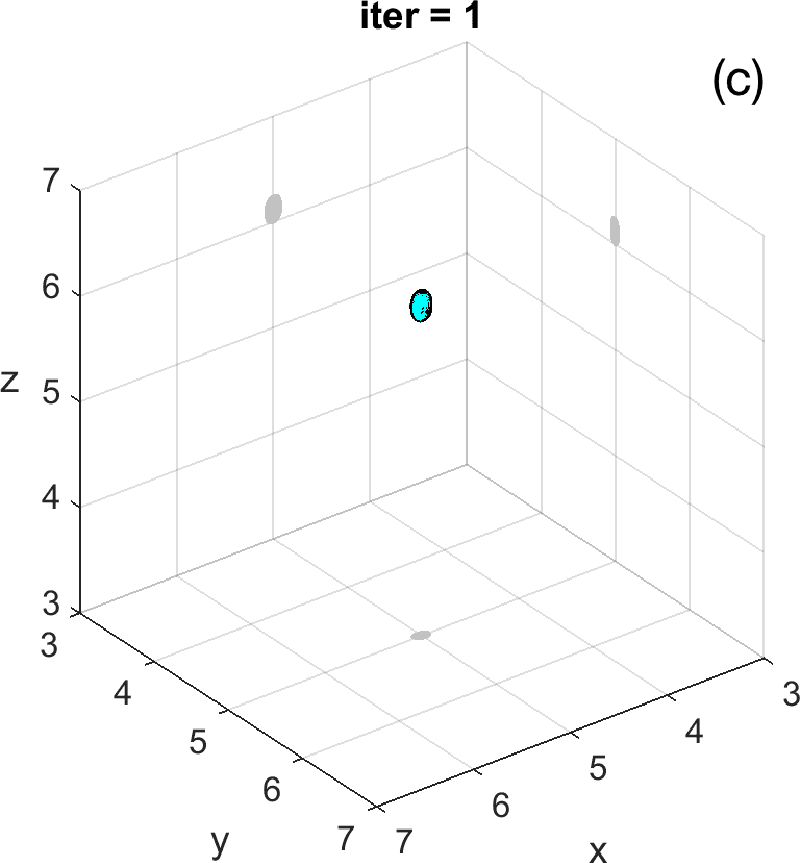

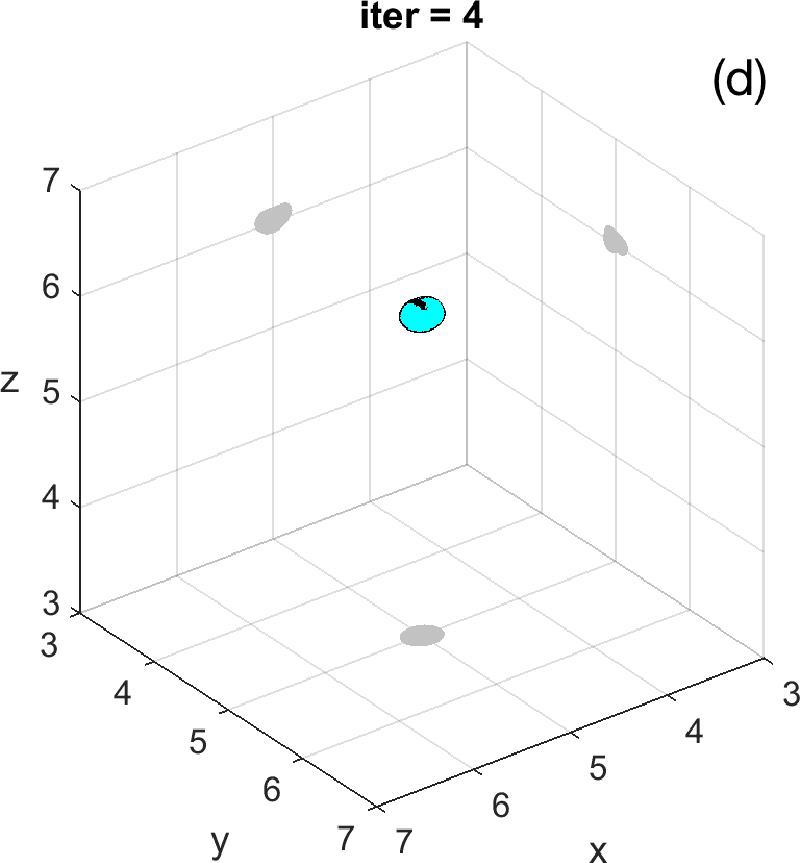

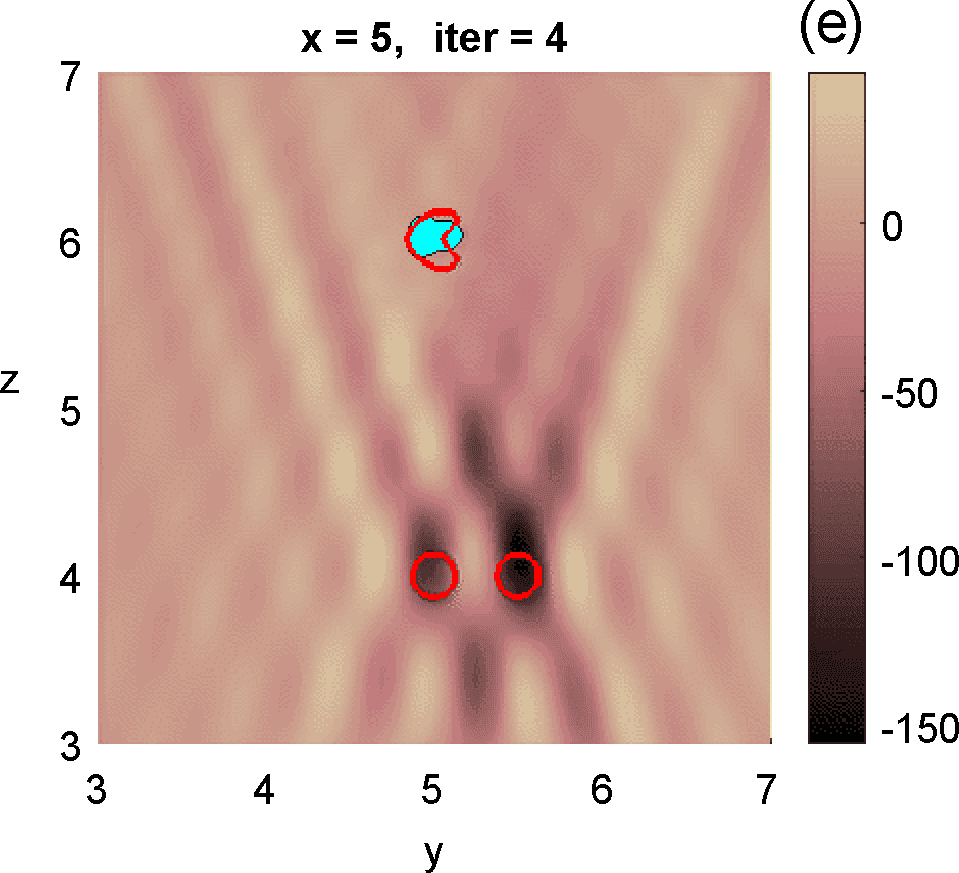

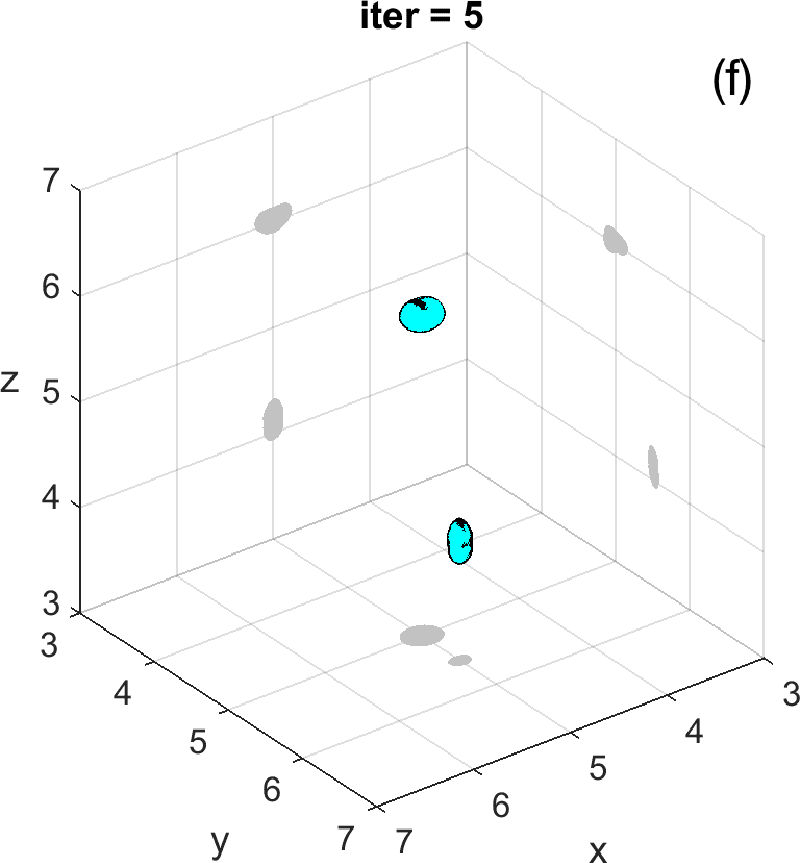

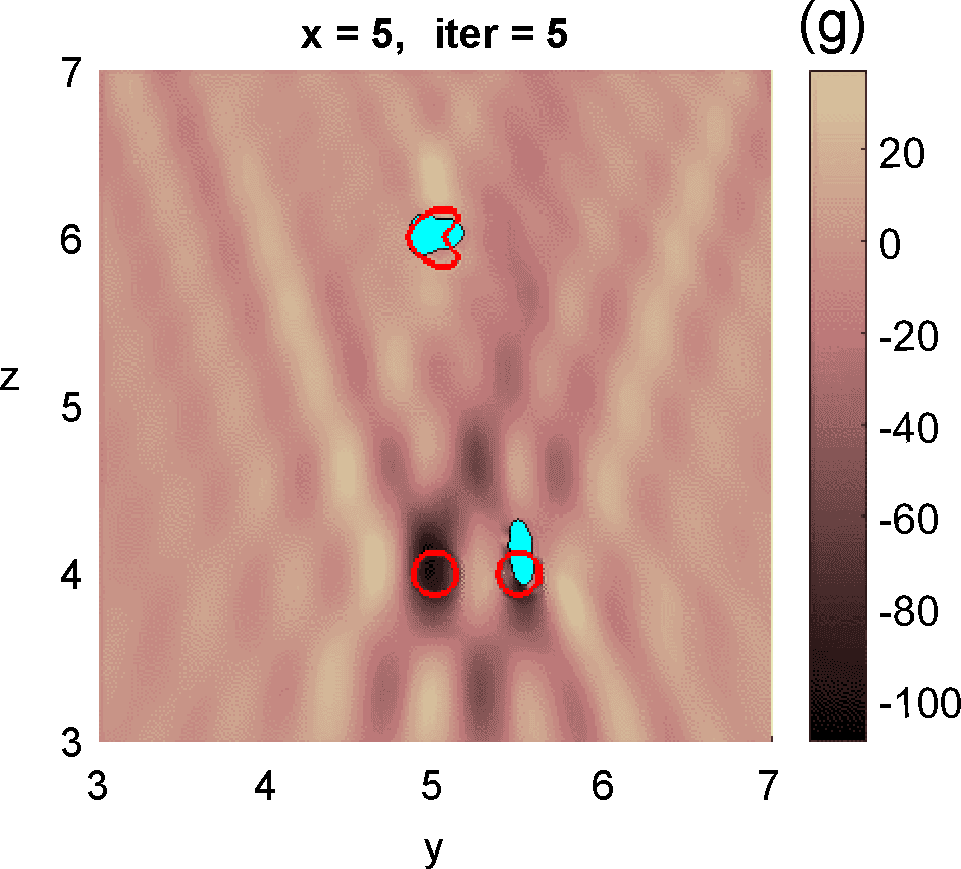

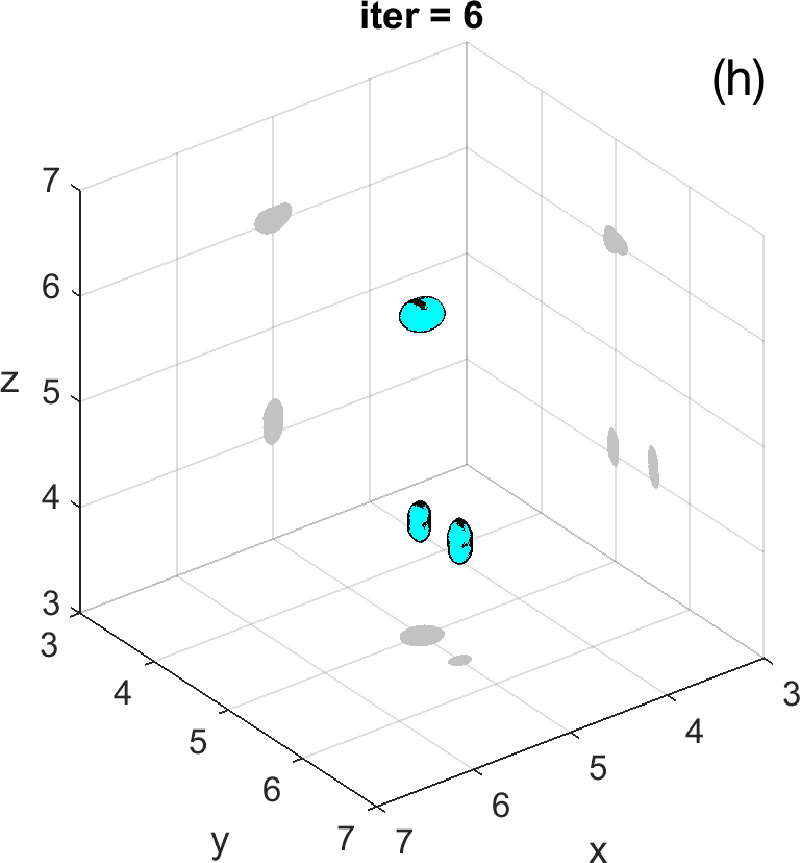

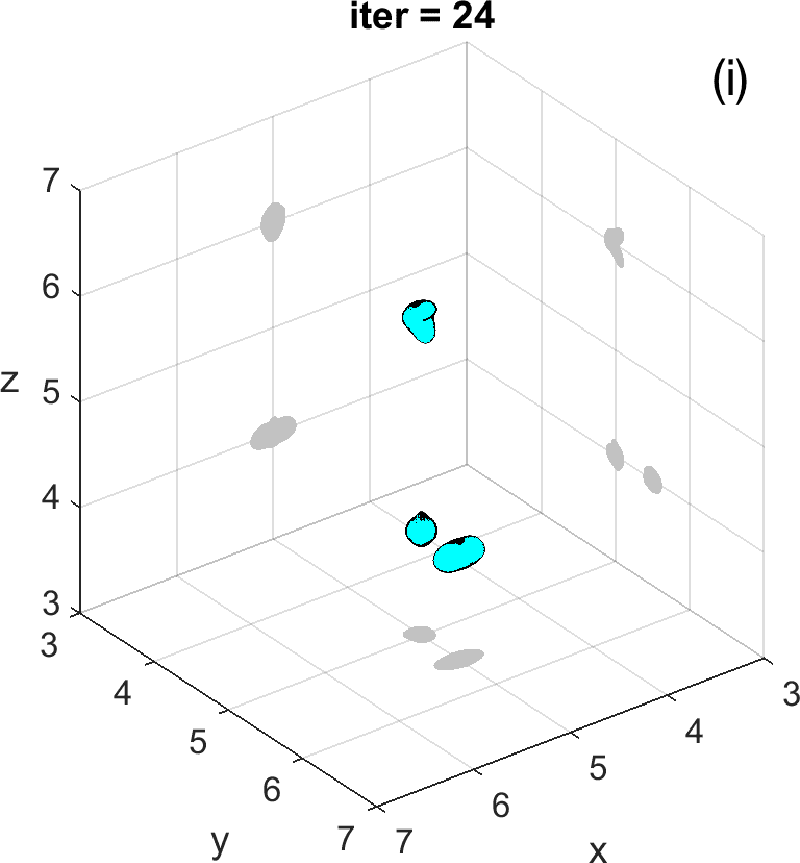

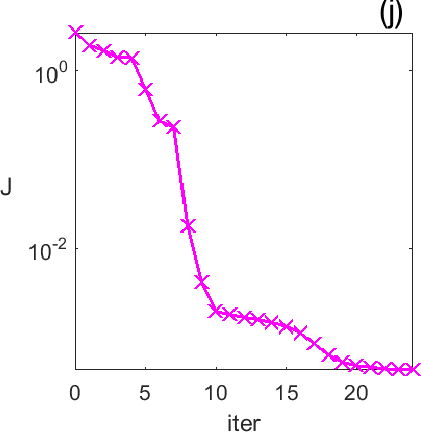

Figures 3 and 4 illustrate the process. Figure 4 depicts the hologram generated by the configuration with three objects shown in Figure 3 (a). We use the topological derivative (5) to spot a first dominant object at the top and locate an object there, see panel (b). Then we apply the IRGN method, see panels (c) and (d). At step 4 the cost functional, depicted in panel (j), stagnates without fulfilling the stopping criteria. This suggests that more objects should be created. This can be done by hybrid methods, as we explain next.

3.4 Topologically informed IRGN methods

Approaches that use initial object parametrization as reference have a drawback: the initial guess of the number of objects may be wrong. To overcome it, we have developed hybrid algorithms [5 A. Carpio, T. G. Dimiduk, F. Le Louër and M. L. Rapún, When topological derivatives met regularized Gauss–Newton iterations in holographic 3D imaging. J. Comput. Phys. 388, 224–251 (2019) ] combining topological derivatives and regularized Gauss–Newton iterations [5 A. Carpio, T. G. Dimiduk, F. Le Louër and M. L. Rapún, When topological derivatives met regularized Gauss–Newton iterations in holographic 3D imaging. J. Comput. Phys. 388, 224–251 (2019) ]. We fit an initial parametrization to the first guess of the objects constructed by topological methods. Then, we apply the IRGN method and check that the cost (2) decreases. When the cost stagnates without fulfilling the stopping criteria, we reset equal to the current guess of the objects for the last parametrization obtained and calculate the topological derivative of the cost for . This is given by (3) if and its equivalent

if . Asymptotic calculations yield the formula [9 A. Carpio and M.-L. Rapún, Solving inhomogeneous inverse problems by topological derivative methods. Inverse Problems 24, Article ID 045014 (2008) , 5 A. Carpio, T. G. Dimiduk, F. Le Louër and M. L. Rapún, When topological derivatives met regularized Gauss–Newton iterations in holographic 3D imaging. J. Comput. Phys. 388, 224–251 (2019) ]

when , with forward and conjugate adjoint fields satisfying transmission Maxwell problems with object :

where is the unit outer normal, and are Dirac masses concentrated at the detectors , .

We create a new approximation from by removing the points in at which the topological derivate surpasses a positive threshold and adding the points outside at which the topological derivate falls below a negative threshold , see [9 A. Carpio and M.-L. Rapún, Solving inhomogeneous inverse problems by topological derivative methods. Inverse Problems 24, Article ID 045014 (2008) , 6 A. Carpio, T. G. Dimiduk, M. L. Rapún and V. Selgas, Noninvasive imaging of three-dimensional micro and nanostructures by topological methods. SIAM J. Imaging Sci. 9, 1324–1354 (2016) ]:

The constants , are selected to ensure a decrease in the cost functional (2) keeping fixed. Once is constructed, we fit a parametrization to its contour and restart the IRGN procedure for . The procedure stops when the changes in the cost and the parametrizations fall below selected thresholds.

Let us revisit the example studied in Figures 3 and 4. At step 4 of the IRGN method the cost stagnates without fulfilling the stopping criteria. We calculate the topological derivative (6) of the cost for the current approximation of the objects, illustrated in Figure 3 (e). A new region where the topological derivative attains large negative values appears. We create a new object there and update the parametrization, see panel (f). Then we apply the IRGN method again. Since the cost functional still stagnates without fulfilling the stopping criteria, we recalculate the topological derivative (6) for the available object approximation. Panel (g) suggests the creation of a third object. We update the IRGN method using this new configuration, and evolve the resulting object configuration, represented in panel (h), until the stopping criterion is met at panel (i) after 24 steps. Panel (j) illustrates stagnation and decrease of the cost as new objects are added to the parametrization using topological information and the updated IRGN method evolves, in a logarithmic scale. These simulations assume known and fixed. Once first guesses for are available, we can implement this procedure considering constant values for at each component of the parametrization. Obtaining first guesses for that are reliable enough is a hard task [7 A. Carpio, T. G. Dimiduk, V. Selgas and P. Vidal, Optimization methods for in-line holography. SIAM J. Imaging Sci. 11, 923–956 (2018) ] and the optimization procedure can encounter difficulties. Bayesian approaches provide alternative procedures that can handle these difficulties while quantifying uncertainty associated to noise and missing information.

4 Bayesian inverse problem

Bayesian formulations consider all unknowns in the inverse problem as random variables. Given a recorded hologram , we seek a finite-dimensional vector of parameters characterizing the imaged objects. When we assume the presence of objects, is formed by blocks, one per object. Using Bayes’ formula [22 J. Kaipio and E. Somersalo, Statistical and computational inverse problems. Appl. Math. Sci. 160, Springer, New York (2005) , 33 A. Tarantola, Inverse problem theory and methods for model parameter estimation. Society for Industrial and Applied Mathematics (SIAM), Philadelphia (2005) ]

where represents the prior probability of the variables, which incorporates our previous knowledge on them, while is the conditional probability or likelihood of observing given . The solution of the Bayesian inverse problem is the posterior probability of the parameters given the data. Sampling the posterior distribution, we obtain statistical information on the most likely values of the object parameters with quantified uncertainty.

4.1 Likelihood choice

Assuming additive Gaussian measurement noise, the measured hologram and the synthetic hologram obtained for the true object parameters are related by , where the measurement noise is distributed as a multivariate Gaussian with zero mean and covariance matrix . A possible choice for the likelihood is [8 A. Carpio, S. Iakunin and G. Stadler, Bayesian approach to inverse scattering with topological priors. Inverse Problems 36, Article ID 105001 (2020) ]

with . Here, represents the synthetic hologram obtained solving the forward problem (1) for objects characterized by parameters , see Section 2.

4.2 Topological priors

A typical choice for the prior distribution is a multivariate Gaussian

if is “admissible”, and when is “not admissible”, that is, it does not satisfy known constraints on the parameter set, see [8 A. Carpio, S. Iakunin and G. Stadler, Bayesian approach to inverse scattering with topological priors. Inverse Problems 36, Article ID 105001 (2020) ] for details. Here, is the covariance matrix and is the total number of parameters characterizing the objects. The mean is typically a set of parameter values characterizing an initial guess of the objects. Sharp priors are obtained fitting parametrizations to first guesses of the objects obtained from the study of topological fields associated to deterministic shape costs, as explained in Section 3.2.

4.3 Markov chain Monte Carlo sampling

Combining (7), (8) and (9), the posterior probability becomes (neglecting normalization constants)

when is admissible, and otherwise. Markov chain Monte Carlo (MCMC) methods provide tools to sample unnormalized posteriors. Classical MCMC methods, such as Hamiltonian Monte Carlo or Metropolis–Hastings [30 R. M. Neal, MCMC using Hamiltonian dynamics. In Handbook of Markov Chain Monte Carlo, edited by S. Brooks, A. Gelman, G. L. Jones and X. L. Meng, Chapman & Hall/CRC Handb. Mod. Stat. Methods, CRC Press, Boca Raton, 113–162 (2011) ] construct a chain of -dimensional states which evolve to be distributed in accordance with the target distribution . After sampling an initial state from the prior distribution (9), the chain advances from one state to the next by means of a transition operator that varies with the method employed [30 R. M. Neal, MCMC using Hamiltonian dynamics. In Handbook of Markov Chain Monte Carlo, edited by S. Brooks, A. Gelman, G. L. Jones and X. L. Meng, Chapman & Hall/CRC Handb. Mod. Stat. Methods, CRC Press, Boca Raton, 113–162 (2011) ]. More recent ensemble MCMC samplers [13 M. M. Dunlop and G. Stadler, A gradient-free subspace-adjusting ensemble sampler for infinite-dimensional Bayesian inverse problems, preprint, arXiv:2202.11088v1 (2022) , 18 J. Goodman and J. Weare, Ensemble samplers with affine invariance. Commun. Appl. Math. Comput. Sci. 5, 65–80 (2010) ] draw initial states from the prior distribution (the “walkers” or “particles”) and transition to new states while mixing the previous ones to generate several chains. This approach allows for parallelization and can handle multimodal posteriors [8 A. Carpio, S. Iakunin and G. Stadler, Bayesian approach to inverse scattering with topological priors. Inverse Problems 36, Article ID 105001 (2020) ].

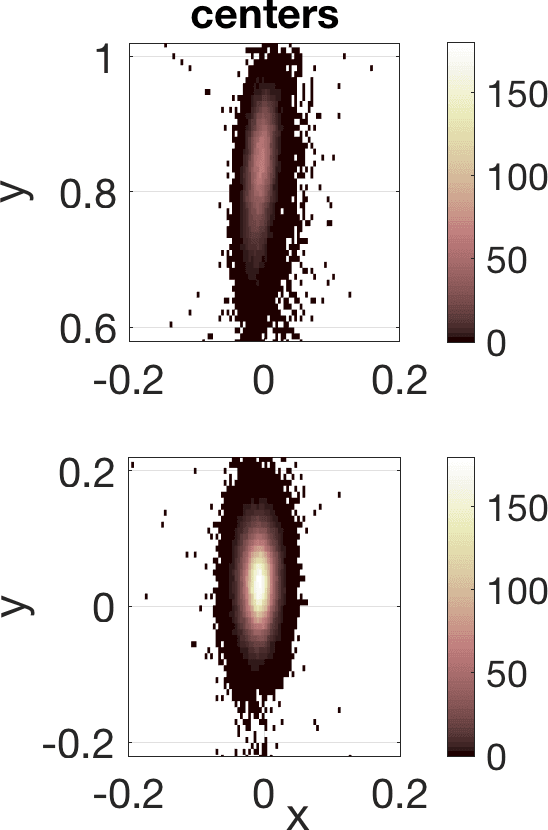

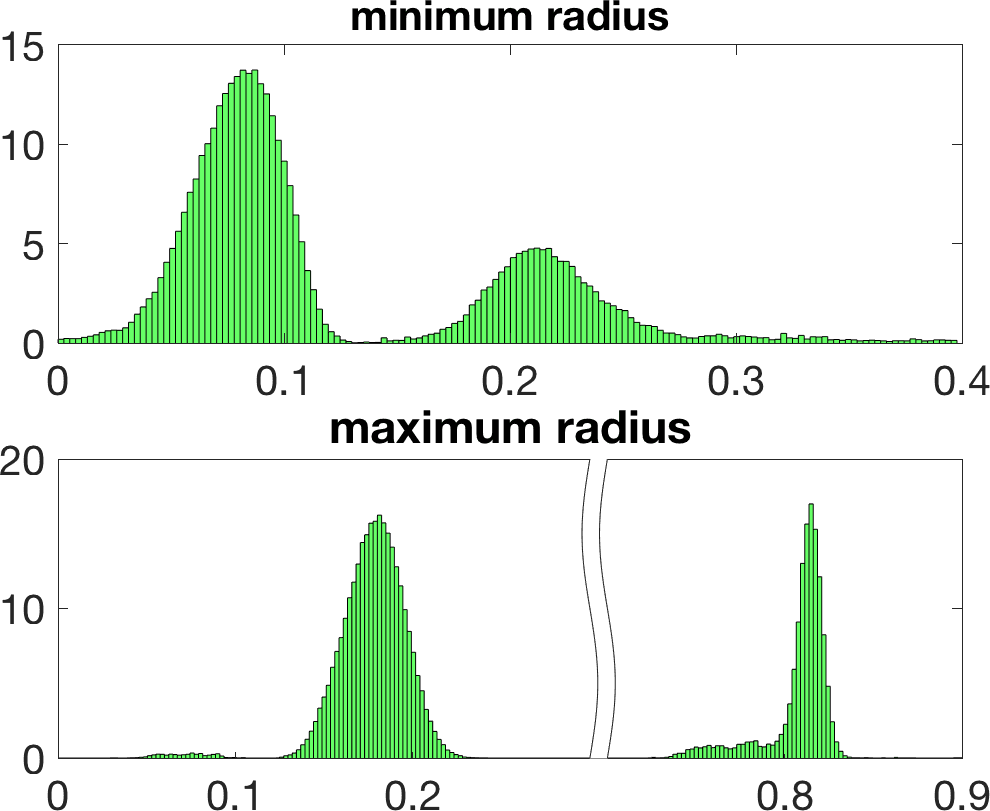

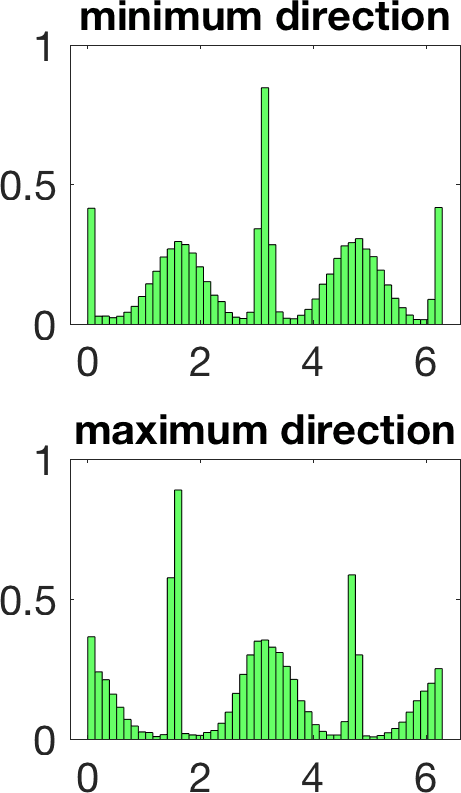

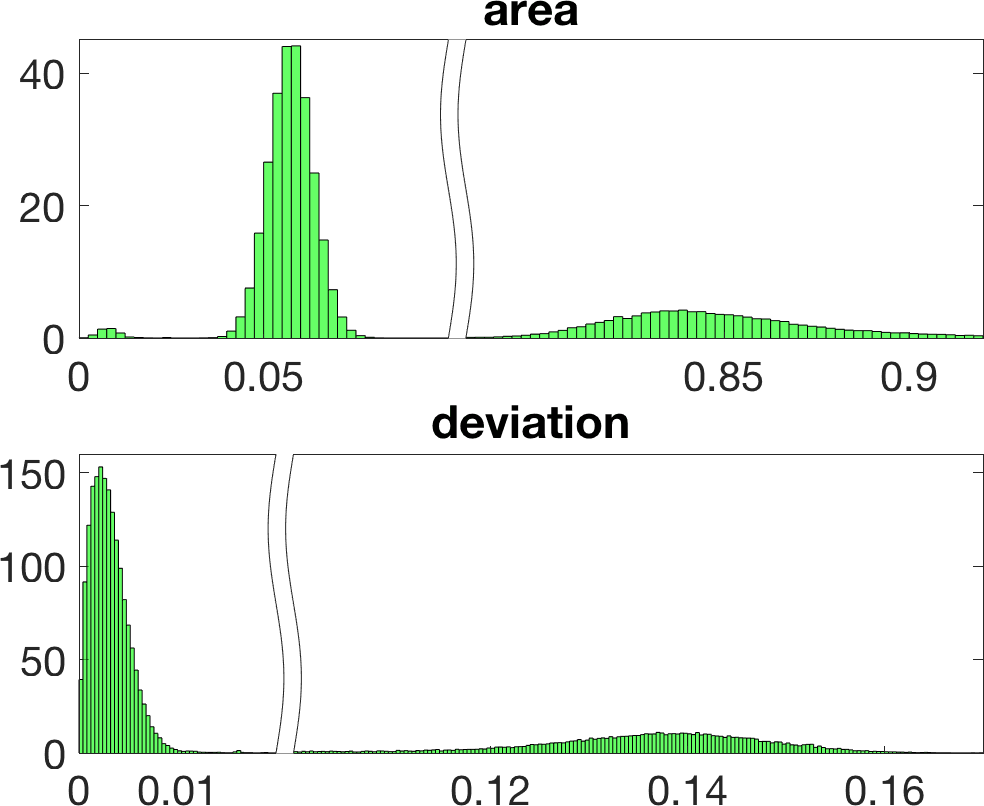

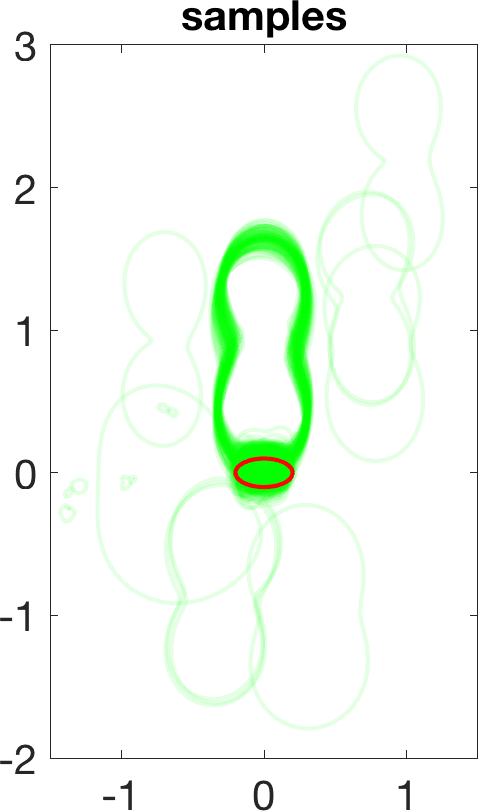

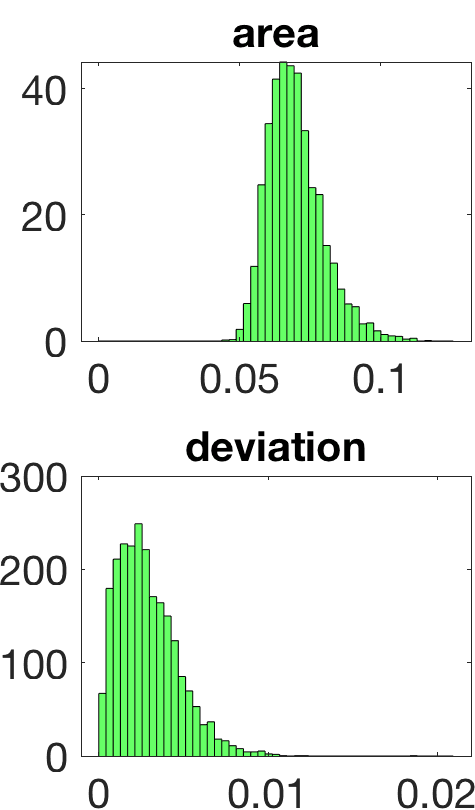

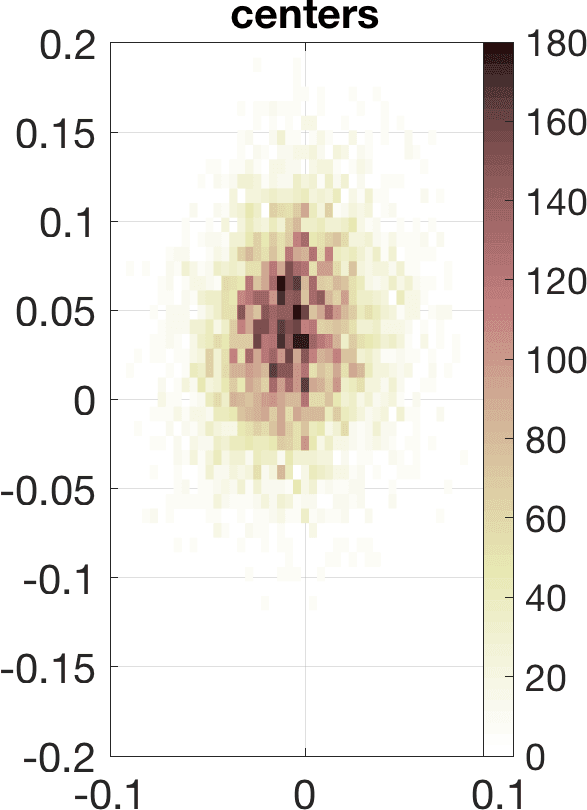

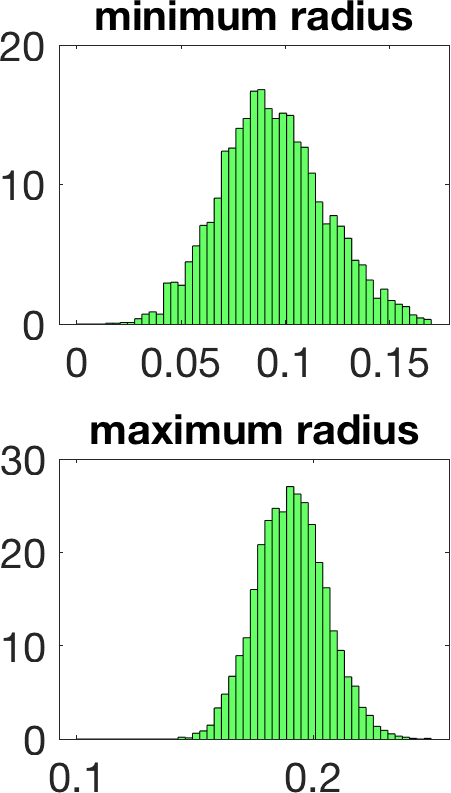

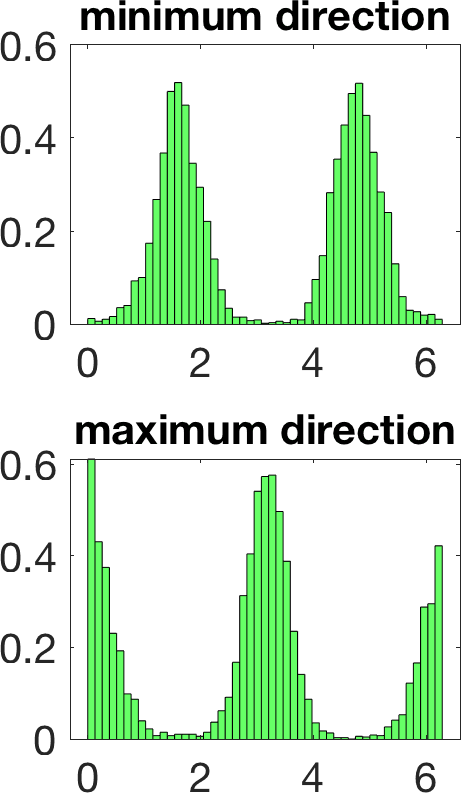

Figure 5 illustrates the results in a two-dimensional geometry, to reduce the computational cost in the tests. A few million samples were generated, which requires solving an identical number of forward problems. In two-dimensional set-ups we replace the stationary transmission problem for the Maxwell equations by a transmission problem posed for the Helmholtz equation [8 A. Carpio, S. Iakunin and G. Stadler, Bayesian approach to inverse scattering with topological priors. Inverse Problems 36, Article ID 105001 (2020) ]. Assuming is piecewise constant, we resort to fast boundary elements to solve the Hemholtz transmission problems in two dimensions [12 V. Domínguez, S. Lu and F.-J. Sayas, A fully discrete Calderón calculus for two dimensional time harmonic waves. Int. J. Numer. Anal. Model. 11, 332–345 (2014) ]. Once a large enough collection of samples is generated [17 A. Gelman and D. B. Rubin, Inference from iterative simulation using multiple sequences. Statist. Sci. 7, 457–472 (1992) ], we extract statistical information describing the imaged object: the most likely shapes, sizes, locations, as well as uncertainty in the predictions. While starshaped two-dimensional objects can be reasonably characterized with 10–20 parameters, three-dimensional objects require 80–90. Full characterization of the posterior probability by MCMC sampling becomes more expensive as the number of parameters and the time required to solve forward problems increase.

4.4 Laplace approximation

The full characterization of the posterior probability is a challenging and costly probability problem for moderate- and high-dimensional parameters . Low-cost approximations of the posterior distribution often rely on finding the maximum a posteriori (MAP) point, that is, the set of parameters that maximize the posterior probability. Upon taking logarithms, maximizing the posterior probability of the parameter set given the data is equivalent to minimizing the regularized cost functional [2 C. M. Bishop, Pattern recognition and machine learning. Springer, New York (2006) ]

This is a nonlinear least-squares problem of the form previously considered in deterministic inversion, including regularization terms provided by the prior knowledge. We can solve it efficiently by using an adapted Levenberg–Marquardt–Fletcher iterative scheme [15 R. Fletcher, Modified Marquardt subroutine for non-linear least squares. Technical report 197213 (1971) ]. Starting from , we set , where is the solution of

Here, is the Gauss–Newton approximation to the Hessian of the functional (10) and is its gradient, while is a scaling factor for that balances the different orders of magnitude of the two terms defining the cost in the first iterations, and becomes equal to 1 at a certain point. At each step, the adjustable parameter increases until the cost decreases, and decreases otherwise, making the iteration closer to Gauss–Newton or gradient schemes as required.

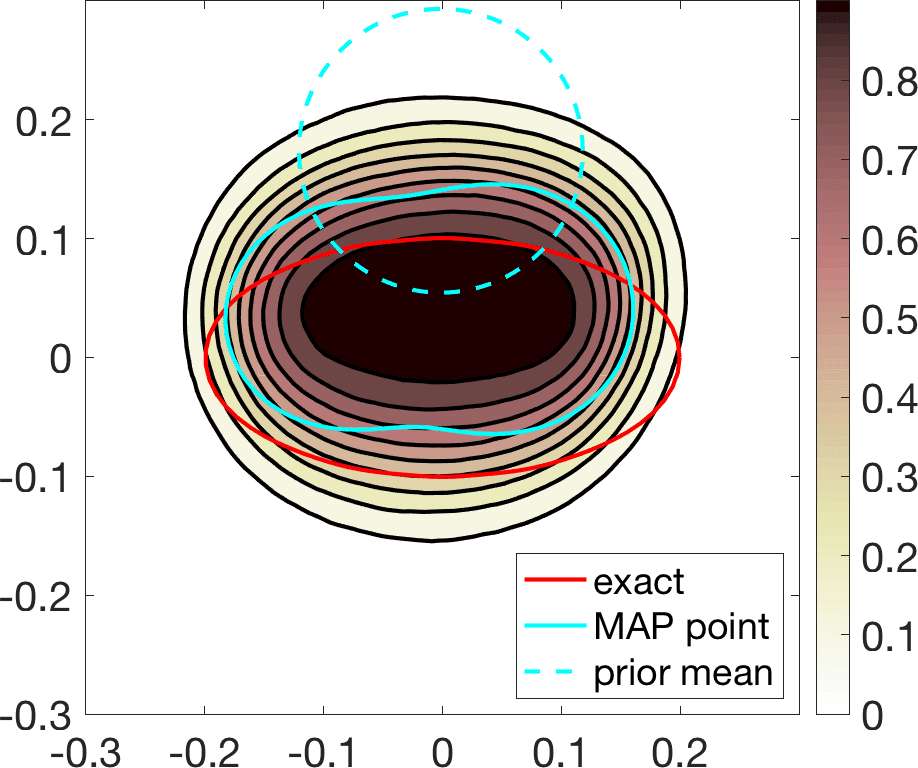

Linearization about the resulting MAP point (the so-called Laplace approximation) provides an approximation of the posterior distribution by a Gaussian with mean and posterior covariance . Sampling this Gaussian, we extract statistical information representing the dominant mode at a much lower computational cost, see Figure 6. Reaching takes about 20 steps of scheme (11). The whole process, sampling included, is finished in a few minutes, instead of a few days.

We have considered fixed and known in these tests. In case it is constant and unknown, it becomes an additional parameter included in . In the end, we obtain additional histograms reflecting uncertainty about the value with highest probability [8 A. Carpio, S. Iakunin and G. Stadler, Bayesian approach to inverse scattering with topological priors. Inverse Problems 36, Article ID 105001 (2020) ].

5 Perspectives

Digital holography poses challenging inverse problems which provide an opportunity to develop and test a variety of analytical and computational tools. First guesses of imaged objects are obtained by calculating the topological derivative of misfit functionals comparing the true hologram and the synthetic holograms that would be generated for different object configurations according to the selected forward model. Such guesses are robust to noise in the data. To reduce dimensionality, one can characterize the imaged objects by means of starshaped parametrizations. In a deterministic framework, we have shown that hybrid schemes combining iteratively regularized Gauss–Newton methods with topological derivative initializations and updates lead to good reconstructions of simple object configurations in a few steps, using stopping criteria that take into account the expected level of noise in the data. We are able to quantify uncertainty in such predictions by resorting to Bayesian formulations with topological priors. In two dimensions, Markov chain Monte Carlo methods provide a complete characterization of the posterior probability of the observed hologram being that is generated by a few starshaped objects. Three-dimensional tests are affordable for very simple shapes, such as a sphere or a cylinder [11 T. G. Dimiduk and V. N. Manoharan, Bayesian approach to analyzing holograms of colloidal particles. Optics Express 24, 24045–24060 (2016) ]. Handling high-dimensional parametrizations, in three dimensions or just irregular shapes, requires the introduction of strategies to reduce the computational cost. Laplace approximations based on optimizing to find the highest probability parameter set and then linearizing the posterior probability about it to obtain a multivariate Gaussian distribution are useful tools for uncertainty quantification when there is a single dominant mode. Developing fast sampling methods which are robust as dimension grows would be an important step forward to handle more general situations.

Holography set-ups are particularly challenging due to the fact that a single incident wave is used. We have focused here on light imaging, though acoustic waves can also be used to resolve at different scales. We expect similar techniques to be useful in inverse scattering problems involving other types of waves and different emitter/receiver configurations, such as microwave imaging or elastography, for instance.

Acknowledgements. Thanks to Krzysztof Burnecki for the kind invitation to write this article. The author is indebted to the Kavli Institute Seminars at Harvard for the interdisciplinary communication environment that initiated this work, M. P. Brenner for hospitality while visiting Harvard University, R. E. Caflisch for hospitality while visiting the Courant Institute at New York University, V. N. Manoharan for discussions of light holography, and D. G. Grier for an introduction to acoustic holography. The work summarized here has been done in collaboration with Thomas G. Dimiduk (Harvard University and Tesla), María Luisa Rapún (Universidad Politécnica de Madrid), Virginia Selgas (Universidad de Oviedo), Frédérique Le Louër (Université de Technologie de Compiègne), Georg Stadler (New York University), and Sergei Iakunin (Universidad Complutense de Madrid, now at the Basque Center for Applied Mathematics). This research was partially supported by the FEDER/MICINN–AEI grants PID2020-112796RB-C21, MTM2017-84446-C2-1-R, Fundación Caja Madrid Mobility grants, and MICINN “Salvador de Madariaga” Mobility grant PRX18/00112.

References

- A. B. Bakushinskii, On a convergence problem of the iterative-regularized Gauss–Newton method. Zh. Vychisl. Mat. i Mat. Fiz. 32, 1503–1509 (1992)

- C. M. Bishop, Pattern recognition and machine learning. Springer, New York (2006)

- C. F. Borhen and D. R. Huffman, Absorption and scattering of light by small particles. Wiley Sciences, John Wiley & Sons, Berlin (1998)

- T. Bui-Thanh, O. Ghattas, J. Martin and G. Stadler, A computational framework for infinite-dimensional Bayesian inverse problems Part I: The linearized case, with application to global seismic inversion. SIAM J. Sci. Comput. 35, A2494–A2523 (2013)

- A. Carpio, T. G. Dimiduk, F. Le Louër and M. L. Rapún, When topological derivatives met regularized Gauss–Newton iterations in holographic 3D imaging. J. Comput. Phys. 388, 224–251 (2019)

- A. Carpio, T. G. Dimiduk, M. L. Rapún and V. Selgas, Noninvasive imaging of three-dimensional micro and nanostructures by topological methods. SIAM J. Imaging Sci. 9, 1324–1354 (2016)

- A. Carpio, T. G. Dimiduk, V. Selgas and P. Vidal, Optimization methods for in-line holography. SIAM J. Imaging Sci. 11, 923–956 (2018)

- A. Carpio, S. Iakunin and G. Stadler, Bayesian approach to inverse scattering with topological priors. Inverse Problems 36, Article ID 105001 (2020)

- A. Carpio and M.-L. Rapún, Solving inhomogeneous inverse problems by topological derivative methods. Inverse Problems 24, Article ID 045014 (2008)

- D. Colton and R. Kress, Inverse acoustic and electromagnetic scattering theory. Appl. Math. Sci. 93, Springer, Berlin (1992)

- T. G. Dimiduk and V. N. Manoharan, Bayesian approach to analyzing holograms of colloidal particles. Optics Express 24, 24045–24060 (2016)

- V. Domínguez, S. Lu and F.-J. Sayas, A fully discrete Calderón calculus for two dimensional time harmonic waves. Int. J. Numer. Anal. Model. 11, 332–345 (2014)

- M. M. Dunlop and G. Stadler, A gradient-free subspace-adjusting ensemble sampler for infinite-dimensional Bayesian inverse problems, preprint, arXiv:2202.11088v1 (2022)

- G. R. Feijoo, A new method in inverse scattering based on the topological derivative. Inverse Problems 20, 1819–1840 (2004)

- R. Fletcher, Modified Marquardt subroutine for non-linear least squares. Technical report 197213 (1971)

- J. Fung, R. P. Perry, T. G. Dimiduk and V. N. Manoharan, Imaging multiple colloidal particles by fitting electromagnetic scattering solutions to digital holograms. J. Quant. Spectroscopy Radiative Transfer 113, 212–219 (2012)

- A. Gelman and D. B. Rubin, Inference from iterative simulation using multiple sequences. Statist. Sci. 7, 457–472 (1992)

- J. Goodman and J. Weare, Ensemble samplers with affine invariance. Commun. Appl. Math. Comput. Sci. 5, 65–80 (2010)

- P. Grisvard, Elliptic problems in nonsmooth domains. Classics Appl. Math. 69, SIAM, Philadelphia (2011)

- H. Harbrecht and T. Hohage, Fast methods for three-dimensional inverse obstacle scattering problems. J. Integral Equations Appl. 19, 237–260 (2007)

- T. Hohage, Logarithmic convergence rates of the iteratively regularized Gauss–Newton method for an inverse potential and an inverse scattering problem. Inverse Problems 13, 1279–1299 (1997)

- J. Kaipio and E. Somersalo, Statistical and computational inverse problems. Appl. Math. Sci. 160, Springer, New York (2005)

- S. H. Lee, Y. Roichman, G. R. Yi, S. H. Kim, S. M. Yang, A. van Blaaderen, P. van Oostrum and D. G. Grier, Characterizing and tracking single colloidal particles with video holographic microscopy. Optics Express 15, 18275–18282 (2007)

- F. Le Louër, A spectrally accurate method for the direct and inverse scattering problems by multiple 3D dielectric obstacles. ANZIAM J. 59, E1–E49 (2018)

- A. Maier, S. Steidl, V. Christlein, J. Hornegger, Medical imaging systems: An introductory guide. Springer (2018)

- P. Marquet, B. Rappaz, P. J. Magistretti, E. Cuche, Y. Emery, T. Colomb and C. Depeursinge, Digital holographic microscopy: a noninvasive contrast imaging technique allowing quantitative visualization of living cells with subwavelength axial accuracy. Optics Letters 30, 468–478 (2005)

- M. Masmoudi, J. Pommier and B. Samet, The topological asymptotic expansion for the Maxwell equations and some applications. Inverse Problems 21, 547–564 (2005)

- S. W. McCandless and C. R. Jackson, Principles of synthetic aperture radar. In SAR Marine Users Manual, NOAA (2004)

- S. Meddahi, F.-J. Sayas and V. Selgás, Nonsymmetric coupling of BEM and mixed FEM on polyhedral interfaces. Math. Comp. 80, 43–68 (2011)

- R. M. Neal, MCMC using Hamiltonian dynamics. In Handbook of Markov Chain Monte Carlo, edited by S. Brooks, A. Gelman, G. L. Jones and X. L. Meng, Chapman & Hall/CRC Handb. Mod. Stat. Methods, CRC Press, Boca Raton, 113–162 (2011)

- J.-C. Nédélec, Acoustic and electromagnetic equations. Appl. Math. Sci. 144, Springer, New York (2001)

- J. Sokołowski and A. Żochowski, On the topological derivative in shape optimization. SIAM J. Control Optim. 37, 1251–1272 (1999)

- A. Tarantola, Inverse problem theory and methods for model parameter estimation. Society for Industrial and Applied Mathematics (SIAM), Philadelphia (2005)

- J. Tromp, Seismic wavefield imaging of Earth’s interior across scales. Nature Reviews Earth & Environment 1, 40–53 (2020)

- T. Vincent, Introduction to holography. CRC Press (2012)

- A. Wang, T. G. Dimiduk, J. Fung, S. Razavi, I. Kretzschmar, K. Chaudhary and V. N. Manoharan, Using the discrete dipole approximation and holographic microscopy to measure rotational dynamics of non-spherical colloidal particles. J. Quant. Spectroscopy Radiative Transfer 146, 499–509 (2014)

- A. Yevick, M. Hannel and D. G. Grier, Machine-learning approach to holographic particle characterization. Optics Express 22, 26884–26890 (2014)

- M. A. Yurkin and A. G. Hoekstra, The discrete-dipole-approximation code ADDA: Capabilities and known limitations. J. Quant. Spectroscopy Radiative Transfer 112, 2234–2247 (2011)

Cite this article

Ana Carpio, Seeing the invisible: Digital holography. Eur. Math. Soc. Mag. 125 (2022), pp. 4–12

DOI 10.4171/MAG/99