The present column is devoted to Game Theory.

I Six new problems – solutions solicited

Solutions will appear in a subsequent issue.

245

We consider a setting where there is a set of candidates

and a set of voters . Each voter ranks all candidates from the most preferred one to the least preferred one; we write if voter prefers candidate to candidate . A collection of all voters’ rankings is called a preference profile. We say that a preference profile is single-peaked if there is a total order on the candidates (called the axis) such that for each voter the following holds: if ’s most preferred candidate is and or , then . That is, each ranking has a single ‘peak’, and then ‘declines’ in either direction from that peak.

(i) In general, if we aggregate voters’ preferences over candidates, the resulting majority relation may have cycles: e.g., if , and , then a strict majority (2 out of 3) voters prefer to , a strict majority prefer to , yet a strict majority prefer to . Argue that this cannot happen if the preference profile is single-peaked. That is, prove that if a profile is single-peaked, a strict majority of voters prefer to , and a strict majority of voters prefer to , then a strict majority of voters prefer to .

(ii) Suppose that is odd and voters’ preferences are known to be single-peaked with respect to an axis . Consider the following voting rule: we ask each voter to report their top candidate , find a median voter , i.e.

and output . Argue that under this voting rule no voter can benefit from voting dishonestly, if a voter reports some candidate instead of , this either does not change the outcome or results in an outcome that likes less than the outcome of the truthful voting.

(iii) We say that a preference profile is 1D-Euclidean if each candidate and each voter can be associated with a point in so that the preferences are determined by distances, i.e. there is an embedding such that for all and we have if and only if . Argue that a 1D-Euclidean profile is necessarily single-peaked. Show that the converse is not true, i.e. there exists a single-peaked profile that is not 1D-Euclidean.

(iv) Let be a single-peaked profile, and let be the set of candidates ranked last by at least one voter. Prove that .

(v) Consider an axis . Prove that there are exactly distinct votes that are single-peaked with respect to this axis. Explain how to sample from the uniform distribution over these votes.

These problems are based on references [4 H. Moulin, Axioms of Cooperative Decision Making. Cambridge University Press, Cambridge (1991) ] (parts (i) and (ii)), [2 C. H. Coombs. Psychological scaling without a unit of measurement. Psychological Review57, 145 (1950) ] (part (iii)) and [1 V. Conitzer, Eliciting single-peaked preferences using comparison queries. J. Artificial Intelligence Res.35, 161–191 (2009) , 5 T. Walsh, Generating single peaked votes. arXiv:1503.02766 (2015) ] (part (v)); part (iv) is folklore. See also the survey [3 E. Elkind, M. Lackner and D. Peters. Structured preferences. In Trends in Computational Social Choice, edited by U. Endriss, Chapter 10, AI Access, 187–207 (2017) ].

Edith Elkind (University of Oxford, UK)

246

Consider a standard prisoners’ dilemma game described by the following strategic form, with :

Assume that any given agent either plays or and that agents reproduce at a rate determined by their payoff from the strategic form of the game plus a constant . Suppose that members of an infinite population are assorted into finite groups of size . Let denote the proportion of agents playing strategy (“altruists”) in the population as a whole and denote the proportion of agents playing in group . We assume that currently .

The process of assortment is abstract, but we assume that it has finite expectation and variance . Members within each group are then randomly paired off to play one iteration of the prisoners’ dilemma against another member of their group. All agents then return to the overall population.

Find a condition relating , , , , and under which the proportion of altruists in the overall population rises after a round of play.

Now interpret this game as one where each player can confer a benefit upon the other player by individually incurring a cost , with , so that , and . Prove that, as long as (i) there is some positive assortment in group formation and (ii) the ratio is low enough, then the proportion of altruists in the overall population will rise after a round of play.

Richard Povey (Hertford College and St Hilda’s College, University of Oxford, UK)

247

Consider a village consisting of n farmers who live along a circle of length . The farmers live at positions . Each of them is friends with the person to the left and right of them, and each friendship has capacity where is a non-negative integer. At the end of the year, each farmer does either well (her wealth is dollars) or not well (her wealth is dollars) with equal probability. Farmers’ wealth realizations are independent of each other. Hence, for a large circle the share of farmers in each state is on average .

The farmers share risk by transferring money to their direct neighbors. The goal of risk-sharing is to create as many farmers with OK wealth (0 dollars) as possible. Transfers have to be in integer dollars and cannot exceed the capacity of each link (which is ).

A few examples with a village of size serve to illustrate risk-sharing.

Consider the case where farmers 1 to 4 have wealth

In that case, we can share risk completely with farmer sending a dollar to agent and farmer sending a dollar to farmer . This works for any .

Consider the case where farmers to have wealth

In that case, we can share risk completely with farmer sending a dollar to farmer , farmer sending two dollars to farmer and farmer sending one dollar to farmer . In this case, we need . If , we can only share risk among half the people in the village.

Show that for any wealth realization an optimal risk-sharing arrangement can be found as the solution to a maximum flow problem.

Tanya Rosenblat (School of Information and Department of Economics, University of Michigan, USA)

248

This exercise is a continuation of Problem 247 where we studied risk-sharing among farmers who live on a circle village and are friends with their direct neighbors to the left and right with friendships of a certain capacity. Assume that for any realization of wealth levels the best possible risk-sharing arrangement is implemented and denote the expected share of unmatched farmers with . Show that as .

Tanya Rosenblat (School of Information and Department of Economics, University of Michigan, USA)

249

In a combinatorial auction there are items for sale to buyers. Each buyer has some valuation function which takes as input a set of items and outputs that bidder’s value for that set. These functions will always be monotone ( for all ), and satisfy .

Definition 1 (Walrasian equilibrium). A price vector and a list of subsets of form a Walrasian equilbrium for if the following two properties hold:

Each .

The sets are disjoint, and .

Prove that a Walrasian equilibrium exists for if and only if there exists an integral1That is, a point such that each . optimum to the following linear program:

Hint. Take the dual, and start from there.

Matt Weinberg (Computer Science, Princeton University, USA)

250

Consider a game played on a network and a finite set of players . Each node in the network represents a player and edges capture their relationships. We use to represent the adjacency matrix of a undirected graph/network, i.e. . We assume . Thus, is a zero-diagonal, squared and symmetric matrix. Each player, indexed by , chooses an action and obtains the following payoff:

The parameter captures the strength of the direct links between different players. For simplicity, we assume .

A Nash equilibrium is a profile such that, for any ,

In other words, at a Nash equilibrium, there is no profitable deviation for any player choosing .

Let , for all (the transpose of a vector is denoted by ), and the identity matrix. Define the weighted Katz–Bonacich centrality vector as

Here denote the inverse Leontief matrix associated with network , while denote its entry, which is equal to the discounted number of walks from to with decay factor . Let be a vector of s. Then the unweighted Katz–Bonacich centrality vector can be defined as

Show that this network game has a unique Nash equilibrium . Can you link this equilibrium to the Katz–Bonacich centrality vector defined above?

Let denote the sum of actions (total activity) at the unique Nash equilibrium in part 1. Now suppose that you can remove a single node, say , from the network. Which node do you want to remove such that the sum of effort at the new Nash equilibrium is reduced the most? (Note that, after the deletion of node , we remove all the links of node , and the remaining network, denoted by , can be obtained by deleting the -th row and -th column of .)

Mathematically, you need to solve the key player problem

In other words, you want to find a player who, once removed, leads to the highest reduction in total action in the remaining network.

Hint. You may come up with an index for each such that the key player is the one with the highest . This should be expressed using the Katz–Bonacich centrality vector defined above.

Now instead of deleting a single node, we can delete any pair of nodes from the network. Can you identify the key pair, that is, the pair of nodes that, once removed, reduces total activity the most?

Yves Zenou (Monash University, Australia) and Junjie Zhou (National University of Singapore)

II Open problem

Equilibrium in Quitting Games

by Eilon Solan (School of Mathematical Sciences, Tel Aviv University, Israel)2The author thanks János Flesch, Ehud Lehrer, and Abraham Neyman for commenting on earlier versions of the text, and acknowledges the support of the Israel Science Foundation, Grant #217/17.

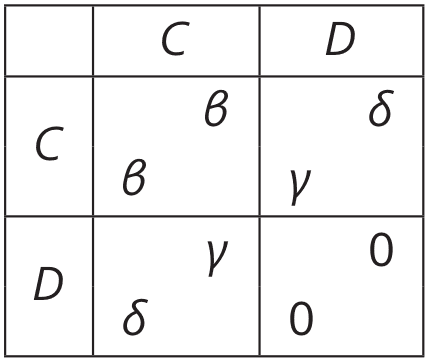

Alaya, Black, and Catherine are involved in an endurance match, where each player has to decide if and when to quit, and the outcome depends on the set of players whose choice is larger than the minimum of the three choices. Formally, each of the three has to select an element of : the choice corresponds to the decision to never quit, and the choice corresponds to the decision to quit the match in round . Denote by (resp. , ) Alaya’s (resp. Black’s, Catherine’s) choice, and by . As a result of their choices, the players receive payoffs, which are determined by the set and on whether . As a concrete example, suppose that if , the payoff of each player is 0, and if , the payoffs are given by the table in Figure 1.

Each entry in the figure represents one possible outcome. For example, when , the payoffs of the three players are : the left-most number in each entry is the payoff to Alaya, the middle number is the payoff to Black, and the right-most number is the payoff to Catherine. This game is an instance of a class of games that are known as quitting games.

How should the players act in this game? To provide an answer, we formalize the concepts of strategy and equilibrium. As the choice of each participant may be random, a strategy for a player is a probability distribution over . Denote a strategy of Alaya (resp. Black, Catherine) by (resp. , ), and by the expected payoff to player under the vector of strategies . A vector of strategies is an equilibrium if no player can increase her or his expected payoff by adopting another strategy while the other two stick to their strategies:

for every strategy of Alaya, and analogous inequalities hold for Black and Catherine.

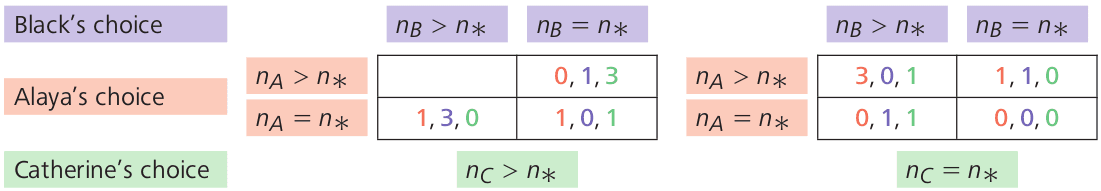

The three-player quitting game with payoffs as described above was studied by Flesch, Thuijsman, and Vrieze [15 J. Flesch, F. Thuijsman and K. Vrieze, Cyclic Markov equilibria in stochastic games. Internat. J. Game Theory26, 303–314 (1997) ] who proved that the following vector of strategies is an equilibrium:

Under , with probability 1 the minimum is the choice of exactly one player: with probability , with probability , and with probability . It follows that the vector of expected payoffs under is

Can a player profit by adopting a strategy different than , , or , assuming the other two stick to their prescribed strategies? It is a bit tedious, but not too difficult, to verify that this is not the case, hence is indeed an equilibrium.

In fact, Flesch, Thuijsman, and Vrieze [15 J. Flesch, F. Thuijsman and K. Vrieze, Cyclic Markov equilibria in stochastic games. Internat. J. Game Theory26, 303–314 (1997) ] proved that under all equilibria of the game, with probability 1 the minimum coincides with the choice of exactly one player. Moreover, a vector of strategies is an equilibrium if and only if the set can be partitioned into blocks of consecutive numbers, and up to circular permutations of the players, the support of the strategy of Alaya (which is a probability distribution over ) is contained in blocks number , and the total probability that is in block is (for each ), the support of the strategy of Black (resp. Catherine) is contained in blocks number (resp. ), and the total probability that (resp. ) is in block (resp. ) is (for each ).

Does an equilibrium exist if the payoffs are not given by the table in Figure 1, but rather by other numbers? Solan [19 E. Solan, The dynamics of the Nash correspondence and n-player stochastic games. Int. Game Theory Rev.3, 291–299 (2001) ] showed that this is not the case. He studied a three-player quitting game that differs from the game of [15 J. Flesch, F. Thuijsman and K. Vrieze, Cyclic Markov equilibria in stochastic games. Internat. J. Game Theory26, 303–314 (1997) ] in three payoffs:

the payoffs in the entry are ,

the payoffs in the entry are ,

the payoffs in the entry are ;

and showed that provided is sufficiently small, the game has no equilibrium. For example, the strategy vector described above is no longer an equilibrium, because Catherine is better off selecting with probability 1, thereby obtaining expected payoff , which is higher than her expected payoff under (that is still 1).

Yet in Solan’s variation [19 E. Solan, The dynamics of the Nash correspondence and n-player stochastic games. Int. Game Theory Rev.3, 291–299 (2001) ], for every there is an -equilibrium: a vector of strategies such that no player can profit more than by deviating to another strategy, in other words,

for every strategy of Alaya, and analogous inequalities hold for Black and Catherine. Indeed, given a positive integer , consider the following variation of , denoted , where the set is partitioned into blocks of size : block contains the integers , for each . is the probability distribution that assigns to each integer in block the probability , for every . Similarly, (resp. ) is the probability distribution that assigns to each integer in block (resp. ) the probability , for every . As mentioned above, the strategy vector is an equilibrium of the game whose payoff function is given in Figure 1, and one can verify that provided , it is an -equilibrium of Solan’s variation [19 E. Solan, The dynamics of the Nash correspondence and n-player stochastic games. Int. Game Theory Rev.3, 291–299 (2001) ].

It follows from [18 E. Solan, Three-player absorbing games. Math. Oper. Res.24, 669–698 (1999) ] that an -equilibrium exists in every three-player quitting game, for every , regardless of the payoffs. One of the most challenging problems in game theory to date is the following.

251*

Does an -equilibrium exist in quitting games that include more than three players, for every ?

For partial results, see [21E. Solan and N. Vieille, Quitting games. Math. Oper. Res.26, 265–285 (2001) , 22 E. Solan and N. Vieille, Quitting games – an example. Internat. J. Game Theory31, 365–381 (2002) , 16R. S. Simon, The structure of non-zero-sum stochastic games. Adv. in Appl. Math.38, 1–26 (2007) , 17 R. S. Simon, A topological approach to quitting games. Math. Oper. Res.37, 180–195 (2012) , 20 E. Solan and O. N. Solan, Quitting games and linear complementarity problems. Math. Oper. Res.45, 434–454 (2020) , 14 G. Ashkenazi-Golan, I. Krasikov, C. Rainer and E. Solan, Absorption paths and equilibria in quitting games. arXiv:2012.04369 (2021) ], which use different tools to study the problem: dynamical systems, algebraic topology, and linear complementarity problems. The open problem is a step in solving several other well-known open problems in game theory: the existence of -equilibria in stopping games, the existence of uniform equilibria in stochastic games, and the existence of -equilibria in repeated games with Borel-measurable payoffs.

It is interesting to note that if we defined

then an -equilibrium need not exist for small . Indeed, with this definition, the three-player game in which the payoff of player is 1 if for each , and 0 otherwise, has no -equilibrium for .

III Solutions

237

We take for our probability space : the unit interval equipped with Lebesgue measure defined on , the Borel subsets of and let be an invertible measure preserving transformation, that is is a bimeasurable bijection of some Borel set of full measure so that and for every .

Suppose also that is ergodic in the sense that the only -invariant Borel sets have either zero- or full measure (, ).

Birkhoff’s ergodic theorem says that for every integrable function ,

The present exercise is concerned with the possibility of generalizing this. Throughout, is an arbitrary ergodic, measure preserving transformation as above.

Warm-up 1. Show that if is measurable, and

then converges in a.s.

Warm-up 1 is [23 P. Hagelstein, D. Herden and A. Stokolos, A theorem of Besicovitch and a generalization of the Birkhoff ergodic theorem. Proc. Amer. Math. Soc. Ser. B8, 52–59 (2021) , Lemma 1]. For a multidimensional version, see [23 P. Hagelstein, D. Herden and A. Stokolos, A theorem of Besicovitch and a generalization of the Birkhoff ergodic theorem. Proc. Amer. Math. Soc. Ser. B8, 52–59 (2021) , Conjecture 3].

Warm-up 2. Show that if is as in Warm-up 1, there exist measurable with bounded so that .

Warm-up 2 is established by adapting the proof of [25 D. Volný and B. Weiss, Coboundaries in L0∞. Ann. Inst. H. Poincaré Probab. Statist.40, 771–778 (2004) , Theorem A].

Problem. Show that there is a measurable function satisfying so that

converges in a.s.

The existence of such for a specially constructed ergodic measure preserving transformation is shown in [24 D. Tanny, A zero-one law for stationary sequences. Z. Wahrscheinlichkeitstheorie und Verw. Gebiete30, 139–148 (1974) , Example b]. The point here is to prove it for an arbitrary ergodic measure preserving transformation of .

Jon Aaronson (Tel Aviv University, Israel)

Solution by the proposer

We’ll fix sequences , (). For each , , we’ll construct a small coboundary . The desired function will be of the form for a suitable choice of , ().

To construct , choose, using Rokhlin’s lemma, a set such that are disjoint and where . Let

It follows that

Set , , , and define as above.

Since

this is a finite sum and so

Proof that . For each ,

and

Next,

whence

It follows that

whence

∎

Proof that a.s.. There is a function so that for a.s. , for all . Suppose that and , then

and

∎

238

Let be a probability space and be a sequence of independent and identically distributed (i.i.d.) random variables on . Assume that there exists a sequence of positive numbers such that for every , , and . Prove that, if for each , then

Comment. The desired statement says that, if such a sequence exists, then satisfies the (generalized) Strong Law of Large Numbers (SLLN) when averaged by . If for every , then the desired statement follows trivially from Kolmogorov’s SLLN, since in which case, with probability one,

and hence

must converge to 0 under the assumptions on . Therefore, the desired statement can be viewed as an alternative to Kolmogorov’s SLLN for i.i.d. random variables that are not integrable.

Linan Chen (McGill University, Montreal, Quebec, Canada)

Solution by the proposer

As explained above, we will need to prove the desired statement without assuming integrability of ’s. For every , we truncate at the level by defining if , and if . Then, is again a sequence of independent random variables. It follows from the assumption on that

which, by the Borel–Cantelli lemma, implies that the sequence of the truncated random variables is equivalent to the original sequence in the sense that

Next, by setting , we have that

and hence the assumption on implies that

Our next goal is to establish the desired SLLN statement for . To be specific, we want to show that if for each , then almost surely. We will achieve this goal in two steps.

Step 1 is to treat the convergence of . To this end, we derive an upper bound for this term as

Then (2) implies that

which, by Kronecker’s lemma, leads to

Hence, we conclude that .

Step 2 is to establish the convergence of , for which we will use a martingale convergence argument. We note that if

for each , then is a martingale (with respect to the natural filtration) and for each ,

where the second last inequality follows from the assumption that is increasing in , and the last inequality is due to the fact that there exists constant such that for every . Hence, (2) implies that is bounded in . A standard martingale convergence result implies that exists in almost surely3One can also use Kolmogorov’s maximal inequality to prove the almost sure existence of the limit of ., which, by Kronecker’s lemma again, leads to

Finally, we write as

where the last two terms have been proven to converge to 0 almost surely, and (1) implies that, with probability one, the limit of the first term is also 0. We have completed the proof.

239

In Beetown, the bees have a strict rule: all clubs must have exactly members. Clubs are not necessarily disjoint. Let be the smallest number of clubs that the bees can form, such that no matter how they divide themselves into two teams to play beeball, there will always be a club all of whose membees are on the same team. Prove that

for some constant .

Rob Morris (IMPA, Rio de Janeiro, Brasil)

Solution by the proposer

This is an old result of Erdős, and a classic application of the probabilistic method. Let us think of the two teams as being red and blue, so that a club is ‘monochromatic’ if all of its membees are on the same team.

First, for the lower bound, we need to show that if , then for any collection of clubs there exists a colouring with no monochromatic club. To do so, we choose the teams randomly, and observe that the expected number of monochromatic clubs is less than . To be precise, let , independently for each bee , and let count the number of monochromatic clubs. Then, by linearity of expectation, , since each club is monochromatic with probability exactly . But this implies that , so there exists a colouring with no monochromatic club, as required.

For the upper bound, we choose the clubs randomly. To be precise, choose bees, and choose each club uniformly and independently from the -subsets of these bees. The idea is that, for any colouring of the bees, the expected number of monochromatic clubs is at least , so the probability of having no monochromatic club should be at most . Since there are colourings of these bees, the expected number of colourings with no monochromatic clubs is less than , so there must exist a choice for which it is zero.

To spell out the details, fix a colouring, and suppose that of the chosen bees are red. The probability that a random club is monochromatic is

for some constant , where in the final inequality we used the fact that .

Now, let count the number of colourings of the bees with no monochromatic club, and observe that if there are clubs, then

It follows that there exists a choice of clubs such that , as required.

240

agents are in a room with a server, and each agent is looking to get served, at which point the agent leaves the room. At any discrete time step, each agent may choose to either shout or stay quiet, and an agent gets served in that round if (and only if) that agent is the only one to have shouted. The agents are indistinguishable to each other at the start, but at each subsequent step, every agent gets to see who has shouted and who has not. If all the agents are required to use the same randomised strategy, show that the minimum time to clear the room in expectation is .

Bhargav Narayanan (Rutgers University, Piscataway, USA)

Solution by the proposer

Here is a simple strategy that works in expected time . The agents all toss independent fair coins to decide whether to shout or not in each of the first rounds. It is easy to see that with high probability, after these rounds, every agent (still in the room) has a unique ‘history’, i.e. no two agents have the exact same sequence of turns (shouting/staying quiet). Now the agents are all distinguishable, and we are done in more steps; for example, the agents can interpret each others histories as numbers in binary, and can get served in increasing order. Below, we show that no strategy can do significantly better.

At any time, we can partition all the agents into clusters based on their history so far: two agents go into the same cluster if they have chosen to do the same thing in all previous rounds. By the requirement that the agents all have the same randomised strategy, we know that at any time, all the agents in the same cluster must have the same strategy. Let be the number of times an agent from a cluster of size at least 2 gets served and leaves the room, and let be the number of times either

exactly two agents from the same cluster, and nobody else, ask to be served, or

nobody asks to be served at all.

An easy computation shows that

for all and any ; consequently, it is easy to see that stochastically dominates . So, if for some strategy,

then the expected time to clear the room, which is at least , is at least in expectation. So we may assume that with high probability for any strategy under consideration.

Let be the set of agents who leave the room only when they belong to their own singleton cluster. As we just observed, the number of such agents may be assumed to be at least . The key observation is this: if someone leaves the room in a particular step, the cluster structure of does not change in that step. To see this, note that when an agent not from leaves the room, that agent shouts and everyone in does not, so there is no change to the cluster structure of . On the other hand, when an agent from leaves the room, that agent is, by definition, already in their own singleton cluster, and every other agent in does not shout in this step; again, there is no change in the cluster structure of .

But we know that at the end of the process, which let us say takes rounds, has been split from a single cluster into singleton clusters. Nothing changes in the cluster structure of in the rounds when someone leaves the room, so gets broken down into singleton clusters in the remaining steps.

Consider these steps where nobody leaves the room. Deterministically, in the first of these steps, we can produce at most singletons in . The remaining agents in are all in clusters of size at least 2. Divide all these cluster into sub-clusters each of size 2 (by ignoring agents if necessary). The result is at least 2-clusters that we still need to break down into singletons (the worst case being when the agents are each in a cluster of size 3). The probability that a 2-cluster breaks down into two singletons at any given time step, with any strategy, is at most . So in any strategy, we need at least another time steps, say, for all these 2-clusters to separate into singletons. Thus, with high probability, which with our previous bound on , tells us that any strategy takes at least steps to clear the room.

241

Consider the following sequence of partitions of the unit interval : First, define to be the partition of into two intervals, a red interval of length and a blue one of length . Next, for any , define to be the partition derived from by splitting all intervals of maximal length in , each into two intervals, a red one of ratio and a blue one of ratio , just as in the first step. For example consists of three intervals of lengths (red), (red) and (blue), the last two are the result of splitting the blue interval in . The figure above illustrates , from top to bottom.

Let and consider the -th partition .

Choose an interval in uniformly at random. Let be the probability you chose a red interval. Does the sequence converge? If so, what is the limit?

Choose a point in uniformly at random. Let be the probability that the point is colored red. Does the sequence converge? If so, what is the limit?

Yotam Smilansky (Rutgers University, NJ, USA)

Solution by the proposer

The proposed solution is based on path counting results [26 A. Kiro, Y. Smilansky and U. Smilansky, The distribution of path lengths on directed weighted graphs. In Analysis as a Tool in Mathematical Physics, Oper. Theory Adv. Appl. 276, Birkhäuser/Springer, Cham, 351–372 (2020) ] on an appropriately defined graph, and can be generalized to higher dimensions and to more complicated sequences of partitions [27 Y. Smilansky, Uniform distribution of Kakutani partitions generated by substitution schemes. Israel J. Math.240, 667–710 (2020) ].

Let be a weighted graph with a single vertex and two directed loops: a red one of length and a blue one of length , and consider directed walks along the edges of that originate at the vertex and terminate on a point of a colored loop. The first important observation is that there is a 1-1 correspondence between colored intervals in and walks of length on , where is the increasing sequence of lengths of closed orbits on . In the following illustration, the top is partition and the corresponding two walks of length , and the bottom is partition and the corresponding three walks of length .

In general, a splitting of an interval corresponds to an extension of a walk that terminates at the vertex to two new walks, one that extends onto the red loop and the other onto the blue. Therefore, is the relative part of walks of length that terminate on the red loop. For , consider random walks on and prescribe probabilities to the two outgoing loops: a walk along is extended onto the red loop when reaching the vertex with probability , and onto the blue loop with probability . These are chosen because of a split interval is colored red and colored blue. It follows that is the probability that a walker is located on the red loop after walking a walk of length .

The second observation is that in order to compute the asymptotic behavior of and , one can apply the well-known Wiener–Ikehara theorem, originally devised to approach the prime number theorem. The theorem states that if there exists for which the Laplace transform of a counting function is analytic for , has a simple pole at and no other singular points on the vertical line , then the main term of the growth rate is , with the residue of the Laplace transform at .

A direct computation shows that the Laplace transform for the number of walks that terminate on the red loop is

Inspecting the term one sees that is a simple root of maximal real part, and so to apply the Wiener–Ikehara theorem it suffices to establish that there are no other roots of the form . Indeed, a careful but elementary inspection shows that otherwise, the loops of must have commensurable lengths, or equivalently , which is of course false. The Laplace transform of the total number of walks is similar but has numerator , and so tends to the ratio of the residues of these two transforms at , that is, . Similarly, the Laplace transform for is

with the same poles but shifted by . It follows that converges to the residue at , namely

Note that the limit of is simply the length of the blue interval in , and the limit of can be viewed as the relative contribution of the red interval to the partition entropy of . This interpretation leads me to suspect that there may exist a more direct and illuminating approach to these problems, possibly based on tools from probability and dynamics, and I would be very happy to discuss any ideas or suggestions.

242

Prove that there exist and such that if are increasing events of independent boolean random variables with for all , then

(What is the smallest that you can prove?)

Here is an “increasing event” if whenever , then the vector obtained by changing any coordinates of from to still lies in .

A useful fact is the Harris inequality, which states that for increasing events and of boolean random variables, .

I learned of this problem from Jeff Kahn.

Yufei Zhao (MIT, Cambridge, USA)

Solution by the proposer

We will show that the claim is true for every and .

If , then the conclusion is automatic. So let us assume that . Since for each , there exists some such that lies within of . Let and . We write and for the complementary events.

If exactly one of occurs, then exactly one of and can occur. So

where the second inequality is due to Harris’ inequality.

Remark. It is conjectured that for any there exists some for which the statement is true. Here is optimal, since if are independent Bernoulli random variables with mean , then the number of occurrences is asymptotically Poisson with mean 1, with so that the probability of single occurrence is .

We are eager to receive your solutions to the proposed problems, and any ideas that you may have on open problems. Send your solutions to Michael Th. Rassias (Institute of Mathematics, University of Zürich, Winterthurerstrasse 190, 8057 Zürich, Switzerland; michail.rassias@math.uzh.ch).

We also solicit your suggestions for new problems together with their solutions, for the next “Solved and unsolved problems” column, which will be devoted to Topology/Geometry.

- 1

That is, a point such that each .

- 2

The author thanks János Flesch, Ehud Lehrer, and Abraham Neyman for commenting on earlier versions of the text, and acknowledges the support of the Israel Science Foundation, Grant #217/17.

- 3

One can also use Kolmogorov’s maximal inequality to prove the almost sure existence of the limit of .

References

- V. Conitzer, Eliciting single-peaked preferences using comparison queries. J. Artificial Intelligence Res.35, 161–191 (2009)

- C. H. Coombs. Psychological scaling without a unit of measurement. Psychological Review57, 145 (1950)

- E. Elkind, M. Lackner and D. Peters. Structured preferences. In Trends in Computational Social Choice, edited by U. Endriss, Chapter 10, AI Access, 187–207 (2017)

- H. Moulin, Axioms of Cooperative Decision Making. Cambridge University Press, Cambridge (1991)

- T. Walsh, Generating single peaked votes. arXiv:1503.02766 (2015)

- B. Cooper and C. Wallace, Group selection and the evolution of altruism. Oxford Economic Papers56, 307–330 (2004)

- R. Povey, Punishment and the potency of group selection, J. Evolutionary Economics24 (2014)

- G. R. Price, Selection and covariance, Nature227, 520–521 (1970)

- G. R. Price, Extension of covariance selection mathematics, Ann. Human Genetics35, 485–490 (1972)

- E. Sober and D. S. Wilson, Unto Others, Harvard University Press, Cambridge, Massachusetts (1999)

- C. Ballester, A. Calvó-Armengol and Y. Zenou, Who’s who in networks. Wanted: the key player. Econometrica74, 1403–1417 (2006)

- M. O. Jackson and Y. Zenou, Games on networks. In Handbook of Game Theory. Vol. 4, edited by H. P. Young and S. Zamir, Handbooks in Economics, 91–157, Elsevier/North-Holland, Amsterdam (2015)

- Y. Sun, W. Zhao and J. Zhou, Structural interventions in networks. arXiv:2101.12420 (2021)

- G. Ashkenazi-Golan, I. Krasikov, C. Rainer and E. Solan, Absorption paths and equilibria in quitting games. arXiv:2012.04369 (2021)

- J. Flesch, F. Thuijsman and K. Vrieze, Cyclic Markov equilibria in stochastic games. Internat. J. Game Theory26, 303–314 (1997)

- R. S. Simon, The structure of non-zero-sum stochastic games. Adv. in Appl. Math.38, 1–26 (2007)

- R. S. Simon, A topological approach to quitting games. Math. Oper. Res.37, 180–195 (2012)

- E. Solan, Three-player absorbing games. Math. Oper. Res.24, 669–698 (1999)

- E. Solan, The dynamics of the Nash correspondence and n-player stochastic games. Int. Game Theory Rev.3, 291–299 (2001)

- E. Solan and O. N. Solan, Quitting games and linear complementarity problems. Math. Oper. Res.45, 434–454 (2020)

- E. Solan and N. Vieille, Quitting games. Math. Oper. Res.26, 265–285 (2001)

- E. Solan and N. Vieille, Quitting games – an example. Internat. J. Game Theory31, 365–381 (2002)

- P. Hagelstein, D. Herden and A. Stokolos, A theorem of Besicovitch and a generalization of the Birkhoff ergodic theorem. Proc. Amer. Math. Soc. Ser. B8, 52–59 (2021)

- D. Tanny, A zero-one law for stationary sequences. Z. Wahrscheinlichkeitstheorie und Verw. Gebiete30, 139–148 (1974)

- D. Volný and B. Weiss, Coboundaries in L0∞. Ann. Inst. H. Poincaré Probab. Statist.40, 771–778 (2004)

- A. Kiro, Y. Smilansky and U. Smilansky, The distribution of path lengths on directed weighted graphs. In Analysis as a Tool in Mathematical Physics, Oper. Theory Adv. Appl. 276, Birkhäuser/Springer, Cham, 351–372 (2020)

- Y. Smilansky, Uniform distribution of Kakutani partitions generated by substitution schemes. Israel J. Math.240, 667–710 (2020)

Cite this article

Michael Th. Rassias, Solved and unsolved problems. Eur. Math. Soc. Mag. 121 (2021), pp. 58–67

DOI 10.4171/MAG/38