The present column is devoted to Number Theory.

I Six new problems – solutions solicited

Solutions will appear in a subsequent issue.

269

Consider two positive integers and such that

is a prime. Prove that is a power of .

Dorin Andrica and George Cătălin Ţurcaş (Babeş-Bolyai University, Cluj-Napoca, Romania)

270

The Collatz map is defined as follows:

Let

That is, is the smallest integer such that, if we apply the Collatz map times, the result is larger than .

(a) Find and .

(b) Show that, for large enough (larger than (say) ), we have

(c) In general, for odd and large enough, there exists a constant such that is the smallest such that . Find and relate to .

Christopher Lutsko (Department of Mathematics, Rutgers University, Piscataway, USA)
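For readers who wish to experiment numerically before attempting a proof, the following is a minimal Python sketch. It assumes the standard form of the Collatz map (n ↦ n/2 for even n, n ↦ 3n + 1 for odd n); the helper names `collatz` and `first_exceed` and the cut-off `max_steps` are illustrative choices, not part of the problem statement.

```python
from typing import Optional


def collatz(n: int) -> int:
    """One step of the Collatz map (assumed standard form)."""
    if n <= 0:
        raise ValueError("n must be a positive integer")
    return n // 2 if n % 2 == 0 else 3 * n + 1


def first_exceed(start: int, bound: int, max_steps: int = 10_000) -> Optional[int]:
    """Smallest k >= 1 such that applying the map k times to `start`
    gives a value larger than `bound`; None if no such k <= max_steps."""
    value = start
    for k in range(1, max_steps + 1):
        value = collatz(value)
        if value > bound:
            return k
    return None
```

Calling `first_exceed(start, bound)` for a family of starting values is one way to guess a pattern before proving it; the cut-off only guards against orbits that never exceed the bound.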

271

The light-bulb problem: Alice and Bob are in jail for trying to divide by . The jailer proposes the following game to decide their freedom: Alice will be shown an grid of light bulbs. The jailer will point to a light bulb of his choice and Alice will decide whether it should be on or off. Then the jailer will point to another bulb of his choice and Alice will decide on/off. This continues until the very last bulb, when the jailer will decide whether this bulb is on or off. So the jailer controls the order of the selection, and the state of the final bulb. Alice is now removed from the room, and Bob is brought in. Bob’s goal is to choose bulbs such that his selection includes the final bulb (the one determined by the jailer).

Is there a strategy that Alice and Bob can use to guarantee success? What if Bob does not know the orientation in which Alice saw the board (i.e., what if Bob does not know which are the rows and which are the columns)?

Christopher Lutsko (Department of Mathematics, Rutgers University, Piscataway, USA)

272

Let and be coprime integers greater than or equal to . Let and denote the modular inverse of and , respectively. That is, and .

(a) Show that

(b) Show by providing an example that, if are coprime integers and , then the statement

is not necessarily true.

(c) What additional assumption should and/or satisfy so that the equivalence (1) holds?

Athanasios Sourmelidis (Institut für Analysis und Zahlentheorie, Technische Universität Graz, Austria)
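Small cases are easy to explore by machine. The sketch below computes modular inverses with Python's built-in three-argument `pow` (available with exponent `-1` from Python 3.8 on); the function name `mod_inverse` is a hypothetical helper introduced here, and it returns the representative in the range 0 to m − 1, which may or may not be the normalization intended in the problem.

```python
from math import gcd


def mod_inverse(a: int, m: int) -> int:
    """Return the inverse of a modulo m as a residue in [0, m)."""
    if m < 2 or gcd(a, m) != 1:
        raise ValueError("inverse exists only for m >= 2 and gcd(a, m) == 1")
    return pow(a, -1, m)  # built-in modular inverse (Python 3.8+)


# Example with the coprime pair a = 3, b = 7.
a, b = 3, 7
inv_a_mod_b = mod_inverse(a, b)  # inverse of a modulo b
inv_b_mod_a = mod_inverse(b, a)  # inverse of b modulo a
```

Looping over coprime pairs and printing both inverses gives quick experimental data for parts (a) to (c).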

273

Let denote the Ramanujan sum defined as the sum of th powers of the primitive th roots of unity. Show that, for any integer ,

where the sum is over all ordered pairs of positive integers such that their lcm is , and is Euler’s totient function.

László Tóth (University of Pécs, Hungary)
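The definition of the Ramanujan sum can be turned into a direct numerical check. The sketch below evaluates it straight from the definition (a sum of powers of primitive roots of unity, rounded to the nearest integer) and lists the ordered pairs with a given lcm; the argument names `n` and `k` and the helper `lcm_pairs` are labels chosen here, and floating-point rounding makes this suitable for small arguments only.

```python
import cmath
from math import gcd


def ramanujan_sum(n: int, k: int) -> int:
    """Sum of the k-th powers of the primitive n-th roots of unity."""
    total = sum(
        cmath.exp(2j * cmath.pi * j * k / n)
        for j in range(1, n + 1)
        if gcd(j, n) == 1  # exp(2*pi*i*j/n) is primitive iff gcd(j, n) == 1
    )
    return round(total.real)  # the exact value is always an integer


def lcm_pairs(n: int):
    """All ordered pairs (a, b) of positive integers with lcm(a, b) == n."""
    divisors = [d for d in range(1, n + 1) if n % d == 0]
    return [(a, b) for a in divisors for b in divisors if a * b // gcd(a, b) == n]
```

With these two helpers, both sides of a conjectured identity over lcm pairs can be compared for small values before a proof is attempted.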

274

Show that, for every integer , we have the polynomial identity

where are the cyclotomic polynomials and denotes Euler’s totient function.

László Tóth (Department of Mathematics, University of Pécs, Hungary)
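Polynomial identities involving cyclotomic polynomials can be tested for small indices with exact integer arithmetic. The sketch below uses the standard factorization of x^n − 1 into cyclotomic polynomials over the divisors of n; polynomials are stored as coefficient tuples with the lowest degree first, and the names `poly_divide_exact` and `cyclotomic` are hypothetical helpers introduced here.

```python
from functools import lru_cache


def poly_divide_exact(num, den):
    """Exact division of integer polynomials (coefficients, lowest degree
    first); `den` must be monic and must divide `num`."""
    num = list(num)
    quotient = [0] * (len(num) - len(den) + 1)
    for i in range(len(quotient) - 1, -1, -1):
        coeff = num[i + len(den) - 1]  # current leading coefficient
        quotient[i] = coeff
        for j, d in enumerate(den):
            num[i + j] -= coeff * d
    if any(num):
        raise ValueError("division is not exact")
    return quotient


@lru_cache(maxsize=None)
def cyclotomic(n: int):
    """Coefficients of the n-th cyclotomic polynomial, lowest degree first."""
    poly = [-1] + [0] * (n - 1) + [1]  # x^n - 1
    for d in range(1, n):
        if n % d == 0:  # divide out the cyclotomic polynomial of each proper divisor
            poly = poly_divide_exact(poly, cyclotomic(d))
    return tuple(poly)
```

The degree of the result, `len(cyclotomic(n)) - 1`, equals Euler's totient of n, which gives a quick sanity check.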

II Open problem

275*. Chowla’s conjecture and its relatives

by Terence Tao (UCLA, Department of Mathematics, Los Angeles, USA)

Let $\lambda$ denote the Liouville function. In [2], Chowla conjectured that

$$\sum_{n \le x} \lambda(n+h_1) \cdots \lambda(n+h_k) = o(x) \tag{2}$$

as $x \to \infty$, for any distinct natural numbers $h_1, \dots, h_k$ (in fact, Chowla made the more general conjecture that

$$\sum_{n \le x} \lambda(P(n)) = o(x)$$

whenever $P$ is a square-free polynomial mapping from $\mathbb{N}$ to $\mathbb{N}$). Chowla’s conjecture was extended to other bounded multiplicative functions by Elliott [3] (see also a technical correction to the conjecture in [8]).
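The correlation sums in Chowla's conjecture are easy to tabulate for moderate ranges. The following sketch computes the Liouville function, which equals (−1) raised to the number of prime factors counted with multiplicity, by a smallest-prime-factor sieve, and then sums products of shifted values. The names `liouville_table` and `chowla_sum` are illustrative, shifts are taken to be non-negative integers, and numerical evidence of this kind is of course no substitute for a proof.

```python
def liouville_table(limit: int) -> list:
    """lam[n] is the Liouville function at n for 1 <= n <= limit (lam[0] unused)."""
    spf = list(range(limit + 1))  # spf[n] = smallest prime factor of n
    for p in range(2, int(limit ** 0.5) + 1):
        if spf[p] == p:  # p is prime
            for m in range(p * p, limit + 1, p):
                if spf[m] == m:
                    spf[m] = p
    lam = [0, 1] + [0] * (limit - 1)
    for n in range(2, limit + 1):
        lam[n] = -lam[n // spf[n]]  # removing one prime factor flips the sign
    return lam


def chowla_sum(x: int, shifts) -> int:
    """Sum over 1 <= n <= x of the product of lam[n + h] for h in shifts."""
    lam = liouville_table(x + max(shifts))
    total = 0
    for n in range(1, x + 1):
        term = 1
        for h in shifts:
            term *= lam[n + h]
        total += term
    return total
```

Printing `chowla_sum(x, (0, 1)) / x` for increasing `x` shows how the normalized two-point correlation behaves in practice.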

One can view (2) as a less difficult cousin of the notorious Hardy–Littlewood prime tuples conjecture [4], which conjectures an asymptotic of the form

$$\sum_{n \le x} \Lambda(n+h_1) \cdots \Lambda(n+h_k) = \mathfrak{S}(h_1, \dots, h_k)\, x + o(x), \tag{3}$$

where $\Lambda$ is the von Mangoldt function and the singular series $\mathfrak{S}(h_1, \dots, h_k)$ is an explicit product over primes of factors involving the numbers $h_1, \dots, h_k$. For $k = 1$, both conjectures follow readily from the prime number theorem, but they remain open for higher $k$. However, the analogues of (2) (and of (3) for $k = 2$) were recently established in certain function fields [11], and are also known to hold in the presence of a Siegel zero [1, 5, 6, 15]. The conjecture (2) is also known if one performs enough averaging in the variables $h_1, \dots, h_k$ [8].

The logarithmically averaged version

$$\sum_{n \le x} \frac{\lambda(n+h_1) \cdots \lambda(n+h_k)}{n} = o(\log x) \tag{4}$$

turns out to be more tractable, as it can be analyzed by the “entropy decrement method” [12], which has been successfully used to establish the conjecture for $k = 2$ (see [12], building upon the breakthrough work [7]) and for odd $k$ (see [14, 16]). The conjecture (4) for arbitrary $k$ is also known to be equivalent [13] to the (logarithmically averaged) Sarnak conjecture [10], which asserts that

$$\sum_{n \le x} \frac{\lambda(n)\, F(T^n x_0)}{n} = o(\log x)$$

whenever $(X, T)$ is a compact dynamical system of zero entropy, $x_0$ is a point in $X$, and $F \colon X \to \mathbb{C}$ is continuous. Many special cases of this conjecture are known, unfortunately too many to list here.

The conjecture (4) would also follow from a higher-order local Fourier uniformity conjecture [12], which is somewhat complicated to state in full generality here; however, the first unsolved special case of this conjecture asserts that

$$\int_X^{2X} \sup_{\alpha \in \mathbb{R}} \Bigl| \sum_{x \le n \le x+H} \lambda(n)\, e(-\alpha n) \Bigr| \, dx = o(HX)$$

whenever $H = H(X)$ is such that $H \to \infty$ as $X \to \infty$, where $e(t) := e^{2\pi i t}$. This is currently only established in the regime $H \ge X^{\theta}$ for a fixed $\theta > 0$ (see [9]).

III Solutions

260

Let be a -differentiable and convex function with .

(i) Prove that, for every , the following inequality holds:

(ii) Determine all functions for which we have equality.

Dorin Andrica (“Babeş-Bolyai” University, Cluj-Napoca, Romania) and Mihai Piticari (“Dragoş Vodă” National College, Câmpulung Moldovenesc, Romania)

Solution by the proposers

(i) We have

By the Mean Value Theorem,

for some . Since is convex, it follows that is increasing; hence . Therefore, , and we obtain

(ii) We have to find all the solutions to the equation

Denoting

the above equation is equivalent to the second-order differential equation

Note that if is a solution, then

whence

It follows that

and so

where is an arbitrary constant. This last equation is equivalent to

or

Consequently,

and we get

On the other hand, is continuous and . This implies that and . We conclude that the sought-for functions are necessarily of the form , i.e., , where is an arbitrary real constant.

261

Let be the unknown function of the following fractional-order derivative Cauchy problem:

Find the solution of this problem by solving an equivalent first-order ordinary Cauchy problem, with a solution independent of the kernel of the fractional operator.

Carlo Cattani (Engineering School, DEIM, University “La Tuscia”, Viterbo, Italy)

Solution by the proposer

Before we give the solution of (5), let us make some preliminary remarks about the most popular definitions of fractional derivatives.

The Riemann–Liouville integral of fractional order of a function is defined as

The corresponding Riemann–Liouville fractional derivative of order is defined as

The main problem with this derivative is that it assigns a nonzero value to a constant function. To avoid this issue, people often use the so-called order- Caputo fractional derivative, defined as

where is an integer, , and .

Riemann–Liouville (RL) and Caputo (C) derivatives are the most popular and have been used in many applications; nevertheless, they both have some drawbacks. For this reason, many authors have introduced some more flexible fractional operators. The most general fractional derivative with a given kernel is defined as

The kernel should be chosen in such a way that at least the following two conditions are satisfied:

Moreover, to ensure that one is dealing with a non-singular kernel, one requires that

Although several definitions of fractional derivatives are available, they all depend on the proposed kernel, thus implying a subjective and a priori unjustified choice of the fractional operator each time one studies a fractional differential problem. This issue can be avoided by using the following simple definition, which is based on an intuitive interpolation.

Limiting ourselves to the case , the general structure of the Caputo-type fractional derivative

is based on the kernel , which is a positive function that decays at infinity (to ensure convergence), while according to (6), the general structure of the Riemann–Liouville first-order derivative is

Usually, to find the solution of (5), we should first choose the kernel of the fractional operator and then solve the fractional problem by using a suitable numerical method, which roughly consists in constructing and solving an equivalent algebraic/differential (of integer order) problem. In any case, the solution will depend not only on the independent variable and the initial condition , but also on the kernel and on the fractional-order parameter:

The dependence on the fractional parameter is essential in solving fractional-order problems. However, the dependence on the kernel leads to an inessential “struggle” about the best choice of the kernel and about its physical/mathematical meaning – an obviously subjective and non-unique choice. Because of this lack of uniqueness, fractional calculus is missing a strong mathematical motivation. On the other hand, there exist many useful mathematical tools, important for the solution of differential problems, that require making choices, such as wavelets, orthogonal polynomials, integral transforms, and many more. Therefore, one can either ignore the uniqueness problem, or try to defend a specific choice by using some reasoning that may still not be sufficient to convince the mathematics community. In the following, we give a solution which is independent of the choice of the kernel and can be obtained analytically by reduction to an equivalent ordinary differential problem having the same solution as (5).

We seek the solution by assuming that the fractional derivative is obtained by linear interpolation between a function and its first-order derivative; consequently, we do not need the integral definition (7) and the accompanying choice of kernel. This is an acceptable assumption, based on the original simple idea in the fundamentals of fractional calculus that the fractional parameter describes a family of interpolation curves. Thus, we set

so that the initial value problem (5) becomes

Now, for , we easily obtain the following ordinary differential problem that is equivalent to (5):

For the moment, we can suppose that the initial conditions of (5) and (8) do not necessarily coincide, i.e., . However, some further assumptions can be made when the function is given explicitly. In particular, to achieve a perfect equivalence between (5) and (8), we can set , and then let .
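To make the interpolation idea concrete, here is a worked example offered as a sketch under explicit assumptions rather than as the proposer's computation. It assumes that the interpolation takes the form $D^{\alpha} y = (1-\alpha)\, y + \alpha\, y'$ with $0 < \alpha \le 1$, and it uses a hypothetical homogeneous test problem $D^{\alpha} y = 0$, $y(0) = y_0$ in place of problem (5); the symbols $D^{\alpha}$ and $y_0$ are notation chosen for this illustration.

```latex
% Assumed interpolation between y (alpha = 0) and y' (alpha = 1),
% applied to a hypothetical homogeneous test problem.
\begin{align*}
D^{\alpha} y(x) &= (1-\alpha)\, y(x) + \alpha\, y'(x), \qquad 0 < \alpha \le 1, \\
D^{\alpha} y = 0,\quad y(0) = y_0
  &\iff \alpha\, y'(x) + (1-\alpha)\, y(x) = 0,\quad y(0) = y_0, \\
y(x) &= y_0 \exp\!\Bigl(-\frac{1-\alpha}{\alpha}\, x\Bigr).
\end{align*}
```

In this toy case the solution depends on the fractional parameter $\alpha$ and on the initial value only, with no kernel involved, and it reduces to the constant $y_0$ when $\alpha = 1$.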

262

Let be the unknown function of the following Bernoulli fractional-order Cauchy problem:

where is a continuous function in the interval .

Find the solution of this problem by solving an equivalent first-order ordinary Cauchy problem, with a solution independent of the kernel of the fractional operator.

Carlo Cattani (Engineering School, DEIM, University “La Tuscia”, Viterbo, Italy)

Solution by the proposer

We seek the solution by simply assuming that the fractional derivative is a linear interpolation between a function and its first-order derivative, so that we do not need the usual integral definition of the fractional operator, which requires us to choose the underlying kernel.

Thus, we set

so that (9) becomes

Then taking and using equations (10) and (11), we easily get the following ordinary differential problem equivalent to (9):

For the moment, we seek a general solution of (9) by assuming that . Solving separately the two equations

we obtain the respective solutions

Consequently, the solution of problem (12), which is also the solution of problem (9), takes the forms listed below.

1. Let and . Then

2. Let and . Then

3. Let , and . In this case, note that, in order to solve the given fractional-order Cauchy problem (9) via an equivalent ordinary differential problem, we can simply set in (12) and thus obtain the solution

4. Let and . Then

263

Let be a real-valued -function defined on , strictly increasing, such that for all and . Consider the boundary value problem

Prove that the solution has exactly one zero in , i.e., there exists a unique point such that , and give a positive lower bound for .

Luz Roncal (BCAM – Basque Center for Applied Mathematics, Bilbao, Spain, Ikerbasque Basque Foundation for Science, Bilbao, Spain and Universidad del País Vasco/Euskal Herriko Unibertsitatea, Bilbao, Spain)

Solution by the proposer

First suppose that for . The function is the solution to the auxiliary initial value problem

Therefore,

Integrating this equality over the interval , we obtain

and by the continuity of . But

so we have reached a contradiction. Thus, has at least one zero in .

Next, observe that and by assumption . Consider the function , which is a solution to the second auxiliary initial value problem

and satisfies for . Suppose that has (at least) one zero in . Denote the smallest such zero by . Then is positive for (recall that ) and ; hence . An argument analogous to the one above shows that

Note that , but the integrand , so again we have reached a contradiction. Thus, has no zero in , so is a positive lower bound for the zeros of .

Finally, suppose that has more than one zero in , namely, there exist at least two points such that and . Take the function

where are chosen in such a way that and is negative for ; see Figure 1.

We have , so , which is positive on the interval . Therefore,

On the other hand,

and the right-hand side is negative since and for .

264

We propose an interesting stochastic-source scattering problem in acoustics. The stochastic nature of such problems forces us to deal with stochastic partial differential equations (SPDEs), rather than the partial differential equations (PDEs) that govern the corresponding deterministic counterparts. In particular, the results of our proposed model will be applied to establish existence and uniqueness for the stochastic solution of a finite element approximation of the stochastic-source Helmholtz equation.

Consider the following approximation problem of a stochastic-source Helmholtz equation:

where is a generalized stochastic source. For the stochastic problem (13), we use the equations

and we get the collection of deterministic problems

Assume that solves problem (14). Then prove that, for all , the solution satisfies

George Kanakoudis, Konstantinos G. Lallas and Vassilios Sevroglou (Department of Statistics and Insurance Science, University of Piraeus, Greece)

Solution by the proposers

We decompose our problem into a hierarchy of deterministic boundary value problems (BVPs), and we give their corresponding variational formulations.

For , we get

An estimate for the solution of problem (15) is

For , we get

We take an arbitrary and multiply equation (16) by . Then we get

and integrate over . Every term is integrable since , and hence we have and , so

Therefore, and , so . We obtain

We use Green’s formula according to which

since is equivalent to .

Let be a stochastic Hilbert space. If we now assume the bilinear form on ,

and the linear functional on ,

then the variational formulation of problem (17) is

An estimate for the solution of problem (18) is

For , we get

An estimate for the solution of problem (19) is

Via the above variational formulations and taking into account , we can prove that the solution of the stochastic boundary value problem (13) satisfies the following inequality:

where , , are considered to be in agreement with appropriate built-in weights. The solution belongs to the space which is a stochastic Hilbert space with the probability measure defined by .

265

For a Newtonian incompressible fluid, the Navier–Stokes momentum equation, in vector form, reads [4]

Here, is the fluid density, is the velocity vector field, is the pressure, is the viscosity, and is an external force field.

(i) Assuming that both the pressure drop and the external field are negligible, it is easy to show that equation (20) reduces to

and finally to equation (22), where is the so-called kinematic viscosity [5].

(ii) Regarding the one-dimensional viscous Burgers equation

prove that an analytical solution can be obtained by means of the Tanh Method [2, 3, 5] as

M. A. Xenos and A. C. Felias (Department of Mathematics, University of Ioannina, Greece)

Solution by the proposers

Notice that, for , equation (20) becomes

Since, for an incompressible fluid, is a nonzero constant, one can divide both sides of equation (23) by and thus obtain equation (21). Now consider the motion of a one-dimensional viscous fluid with fluid velocity along the -axis as time passes, . In this case, equation (21) transforms into equation (22). Introduce the transformation of given by

with representing the wave number and the velocity. Then transformation (24) reduces equation (22) to the following ODE for :

Integrating equation (25) and taking the integration constant to be zero, we obtain

The Tanh method rests on the fact that all derivatives of the tanh function can themselves be written in terms of the tanh function [2, 3]. The following identity is used:

This transforms equation (25) into a polynomial equation for successive powers of the Tanh function. Introducing the new variable

solution(s) can be sought in the form

Chain differentiation yields

The positive integer value of is determined after substituting expressions (29) and (30) into equation (26) and balancing the resulting highest-order terms.

Once is determined, substituting expression (29) in equation (26), one obtains an algebraic system for the coefficients , . Depending on the problem under consideration, is either determined or not, while is always a function of .

In the present case, is found to be equal to ; hence substituting expression (29) in equation (26) and setting the coefficients of the like powers of equal to zero leads to the algebraic system

the solution of which is

Combining (31) and (29) and using expression (28), one obtains

and finally

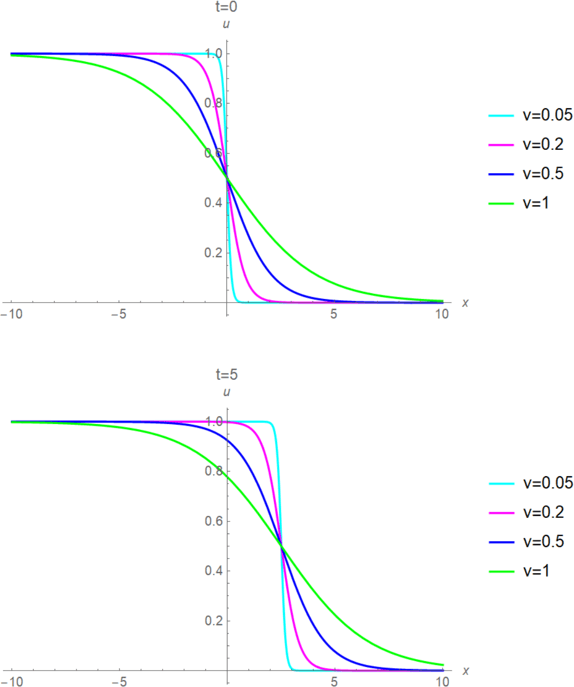

Figure 2 displays right-moving analytical solutions of the viscous Burgers equation for different values of the kinematic viscosity .
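A closed-form travelling wave of this kind can be checked numerically. The sketch below assumes the viscous Burgers equation in its standard form $u_t + u\,u_x = \nu\,u_{xx}$ and the right-moving profile $u(x,t) = V\,[1 - \tanh(V(x - Vt)/(2\nu))]$, which is what the tanh ansatz with a single tanh term and zero integration constant yields under that form. The function names, the default parameter values and the step size `h` are choices made for this illustration.

```python
import math


def burgers_tanh(x: float, t: float, V: float = 1.0, nu: float = 0.1) -> float:
    """Assumed travelling-wave profile u = V * (1 - tanh(V * (x - V*t) / (2*nu)))."""
    return V * (1.0 - math.tanh(V * (x - V * t) / (2.0 * nu)))


def burgers_residual(x: float, t: float, V: float = 1.0, nu: float = 0.1,
                     h: float = 1e-3) -> float:
    """Central-difference estimate of u_t + u*u_x - nu*u_xx at (x, t)."""
    def u(xx: float, tt: float) -> float:
        return burgers_tanh(xx, tt, V, nu)

    u_t = (u(x, t + h) - u(x, t - h)) / (2 * h)
    u_x = (u(x + h, t) - u(x - h, t)) / (2 * h)
    u_xx = (u(x + h, t) - 2 * u(x, t) + u(x - h, t)) / h ** 2
    return u_t + u(x, t) * u_x - nu * u_xx
```

A residual close to zero at several sample points, up to the finite-difference error, is consistent with the profile solving the equation; the profile tends to 2V far to the left of the front and to 0 far to the right.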

We look forward to receiving your solutions to the proposed problems and your ideas on the open problems. Please send your solutions to Michael Th. Rassias by email to mthrassias@yahoo.com.

We also solicit your new problems with their solutions for the next “Solved and unsolved problems” column, which will be devoted to Probability Theory.

References

1. J. Chinis, Siegel zeros and Sarnak’s conjecture, preprint, arXiv:2105.14653 (2021)

2. S. Chowla, The Riemann hypothesis and Hilbert’s tenth problem. Mathematics and its Applications 4, Gordon and Breach Science Publishers, New York (1965)

3. P. D. T. A. Elliott, On the correlation of multiplicative functions. Notas Soc. Mat. Chile 11, 1–11 (1992)

4. G. H. Hardy and J. E. Littlewood, Some problems of ‘Partitio numerorum’; III: On the expression of a number as a sum of primes. Acta Math. 44, 1–70 (1923)

5. D. R. Heath-Brown, Prime twins and Siegel zeros. Proc. London Math. Soc. (3) 47, 193–224 (1983)

6. K. Matomäki and J. Merikoski, Siegel zeros, twin primes, Goldbach’s conjecture, and primes in short intervals, preprint, arXiv:2112.11412 (2021)

7. K. Matomäki and M. Radziwiłł, Multiplicative functions in short intervals. Ann. of Math. (2) 183, 1015–1056 (2016)

8. K. Matomäki, M. Radziwiłł and T. Tao, An averaged form of Chowla’s conjecture. Algebra Number Theory 9, 2167–2196 (2015)

9. K. Matomäki, M. Radziwiłł and T. Tao, Fourier uniformity of bounded multiplicative functions in short intervals on average. Invent. Math. 220, 1–58 (2020)

10. P. Sarnak, Mobius randomness and dynamics. Not. S. Afr. Math. Soc. 43, 89–97 (2012)

11. W. Sawin and M. Shusterman, On the Chowla and twin primes conjectures over 𝔽q[T]. Ann. of Math. (2) 196, 457–506 (2022)

12. T. Tao, The logarithmically averaged Chowla and Elliott conjectures for two-point correlations. Forum Math. Pi 4, Paper No. e8 (2016)

13. T. Tao, Equivalence of the logarithmically averaged Chowla and Sarnak conjectures. In Number Theory – Diophantine Problems, Uniform Distribution and Applications, Springer, Cham, 391–421 (2017)

14. T. Tao and J. Teräväinen, Odd order cases of the logarithmically averaged Chowla conjecture. J. Théor. Nombres Bordeaux 30, 997–1015 (2018)

15. T. Tao and J. Teräväinen, The Hardy–Littlewood–Chowla conjecture in the presence of a Siegel zero, preprint, arXiv:2109.06291 (2021)

16. T. Tao and J. Teräväinen, The structure of logarithmically averaged correlations of multiplicative functions, with applications to the Chowla and Elliott conjectures. Duke Math. J. 168, 1977–2027 (2019)

Cite this article

Michael Th. Rassias, Solved and unsolved problems. Eur. Math. Soc. Mag. 127 (2023), pp. 53–61

DOI 10.4171/MAG/127