The evolution of a gas can be described by different mathematical models depending on the scale of observation. A natural question, raised by Hilbert in his sixth problem, is whether these models provide mutually consistent predictions. In particular, for rarefied gases, it is expected that the equations of the kinetic theory of gases can be obtained from molecular dynamics governed by the fundamental principles of mechanics. In the case of hard sphere gases, Lanford (1975) has shown that the Boltzmann equation does indeed appear as a law of large numbers in the low density limit, at least for very short times. The aim of this paper is to present recent advances in the understanding of this limiting process.

1 A statistical approach to dilute gas dynamics

1.1 The physical model: A dilute gas of hard spheres

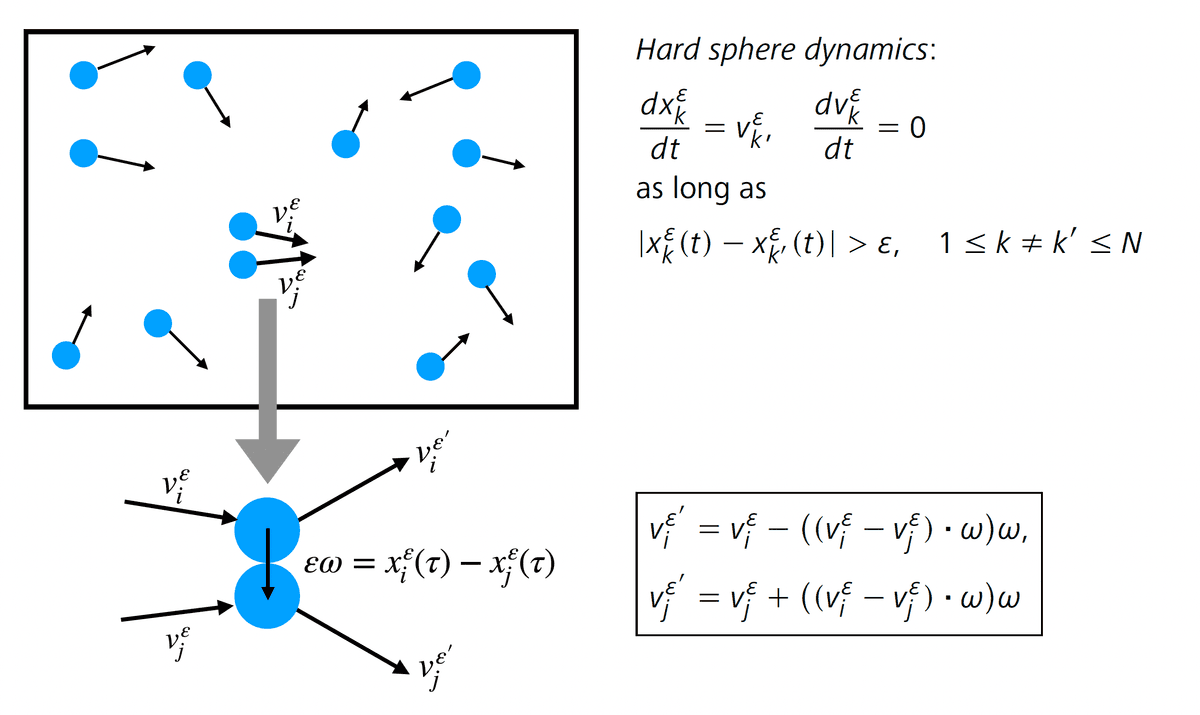

Although at the time Boltzmann published his famous paper [8 L. Boltzmann, Weitere Studien über das Wärmegleichgewicht unter Gasmolecülen. Wien. Ber. 66, 275–370 (1872) ] the atomic theory was still rejected by some scientists, it was already well established that matter is composed of atoms, which are the elementary constituents of all solids, liquids and gases. The particularity of gases is that the volume occupied by their atoms is negligible compared to the total volume occupied by the gas, and there are therefore very few constraints on the atoms’ geometric arrangement: they are thus very loosely bound and almost independent. Neglecting the internal structure of the atoms, their possible organization into molecules, and the effect of long-range interactions, a gas can be represented as a system formed by a large number of particles that move in a straight line and occasionally collide with each other, resulting in an almost instantaneous scattering. The simplest example of such a model consists in assuming that the particles are small identical spheres, of diameter $\varepsilon \ll 1$ and mass 1, interacting only by contact (Figure 1). We refer to this as a gas of hard spheres. This microscopic description of a gas is explicit, but very difficult to use in practice because the number of particles is extremely large, their size is tiny and their collisions are very sensitive to small shifts (Figure 2). This model is therefore not efficient for making theoretical predictions. A natural question is whether one can extract, from such a complex system, less precise but more stable models suitable for applications, such as kinetic or fluid models. This question was formalized by Hilbert at the International Congress of Mathematicians in 1900, in his sixth problem:

Boltzmann’s work on the principles of mechanics suggests the problem of developing mathematically the limiting processes, there merely indicated, which lead from the atomistic view to the laws of motion of continua.

The Boltzmann equation, mentioned by Hilbert and described in more detail below, expresses that the particle distribution evolves under the combined effect of free transport and collisions. For these two effects to be of the same order of magnitude, a simple calculation shows that, in dimension $d$, the number of particles $N$ and their diameter $\varepsilon$ must satisfy the scaling relation $N\varepsilon^{d-1} = O(1)$, called low density scaling [14 H. Grad, Principles of the kinetic theory of gases. Handbuch der Physik 12, Thermodynamik der Gase, Springer, Berlin, 205–294 (1958) ]. Indeed, the regime described by the Boltzmann equation is such that the mean free path, i.e., the average distance traveled by a particle moving in a straight line between two collisions, is of order 1. Thus, a typical particle should go through a tube of volume $O(\varepsilon^{d-1})$ between two collisions, and on average, this tube should cross one of the other particles. Note that, in this regime, the total volume occupied by the particles at a given time is proportional to $N\varepsilon^{d}$ and is therefore negligible compared to the total volume occupied by the gas. We then speak of a dilute gas.
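The orders of magnitude in this scaling argument can be checked with a few lines of arithmetic. The sketch below is only an illustration: it assumes the normalization $N\varepsilon^{d-1} = 1$ in a unit volume and uses the crude estimate "mean free path = 1 / (number density × cross-section)", ignoring the distribution of relative velocities. The function name `low_density_scaling` is hypothetical.

```python
import math


def low_density_scaling(eps: float, d: int = 3) -> dict:
    """Orders of magnitude for spheres of diameter eps in a unit box.

    Assumption: the particle number is fixed by N * eps**(d-1) = 1.
    """
    n_particles = eps ** (-(d - 1))
    # Volume of one ball of diameter eps in dimension d.
    ball_volume = math.pi ** (d / 2) / math.gamma(d / 2 + 1) * (eps / 2) ** d
    volume_fraction = n_particles * ball_volume  # proportional to eps
    # Collision cross-section: a (d-1)-dimensional ball of radius eps.
    cross_section = (
        math.pi ** ((d - 1) / 2) / math.gamma((d - 1) / 2 + 1) * eps ** (d - 1)
    )
    # Crude estimate: one expects one obstacle in a tube of this length.
    mean_free_path = 1.0 / (n_particles * cross_section)
    return {
        "n_particles": n_particles,
        "volume_fraction": volume_fraction,
        "mean_free_path": mean_free_path,
    }


if __name__ == "__main__":
    for eps in (1e-1, 1e-2, 1e-3):
        print(eps, low_density_scaling(eps, d=3))
```

In this sketch the mean free path does not depend on $\varepsilon$, while the volume fraction vanishes linearly with $\varepsilon$, which is the sense in which the gas is dilute.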

1.2 Three levels of averaging

Henceforth, it is assumed that the particle system evolves in the unit domain with periodic boundary conditions $\mathbb{T}^d = [0,1]^d$. We consider that the particles are identical: this is the exchangeability assumption. The state of the system can be represented by a measure in the phase space $\mathbb{T}^d \times \mathbb{R}^d$ called empirical measure,

$$\frac{1}{N}\sum_{i=1}^{N} \delta_{x - x_i}\,\delta_{v - v_i},$$

where $\delta_{x - x_i}$ is the Dirac mass at $x_i$. This measure is completely symmetric (i.e., invariant under permutation of the indices of the particles) because of the exchangeability assumption. This first averaging is however not sufficient to obtain a robust description of the dynamics when $N$ is large, because of the instabilities mentioned in the previous section (Figure 2), which lead to a strong dependence of the particle trajectories on $\varepsilon$. We will therefore introduce a second averaging, with respect to the initial configurations; from a physical point of view, this averaging is natural since only fragmentary information on the initial configuration is available. We therefore assume that the initial data $(x_i, v_i)_{1 \le i \le N}$ are independent random variables, identically distributed according to a distribution $f^0 = f^0(x,v)$. This assumption must be slightly corrected to account for particle exclusion: $|x_i - x_j| > \varepsilon$ for $i \neq j$. This statistical framework is called the canonical setting. It is a simple framework allowing us to establish rigorous foundations for the kinetic theory, i.e., to characterize, in the large $N$ asymptotics, the average dynamics and more precisely the evolution equation governing the distribution at time $t$ of a typical particle.

In this paper, our aim is to go beyond this averaged dynamics, and to describe in a precise way the correlations that appear dynamically inside the gas. Fixing a priori the number $N$ of particles induces additional correlations, so to circumvent them, we introduce a third level of averaging by assuming that $N$ is also a random variable, and that only its average $\mu_\varepsilon = \varepsilon^{-(d-1)}$ is determined (according to the low density scaling). To define a system of initially independent (modulo exclusion) identically distributed hard spheres according to $f^0$, we introduce the grand canonical measure as follows: the probability density of finding $N$ particles of coordinates $(x_i, v_i)_{1 \le i \le N}$ is given by

$$\frac{1}{\mathcal{Z}^\varepsilon}\,\frac{\mu_\varepsilon^N}{N!}\,\prod_{i=1}^{N} f^0(x_i, v_i)\,\prod_{i \neq j} \mathbf{1}_{|x_i - x_j| > \varepsilon}, \tag{1.1}$$

where the constant $\mathcal{Z}^\varepsilon$ is the normalization factor of the probability measure. We will assume in the following that the function $f^0$ is Lipschitz continuous, with a Gaussian decay in velocity. The corresponding probability and expectation will be denoted by $\mathbb{P}_\varepsilon$ and $\mathbb{E}_\varepsilon$.
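One way to get a feeling for the grand canonical measure is to sample from it. The sketch below is a minimal illustration under stated assumptions, not a method taken from the paper: it takes the initial distribution to be uniform in position on the unit torus and standard Gaussian in velocity, draws the number of particles from a Poisson law with mean $\varepsilon^{-(d-1)}$, and rejects the whole configuration whenever two spheres overlap. Rejecting entire configurations (rather than individual particles) is what conditions the Poisson system on exclusion. All function names are hypothetical, and the rejection step is only practical for moderate particle numbers.

```python
import math
import random


def sample_poisson(mean: float, rng: random.Random) -> int:
    """Knuth's multiplication method; adequate for moderate means."""
    threshold = math.exp(-mean)
    count, product = 0, rng.random()
    while product > threshold:
        count += 1
        product *= rng.random()
    return count


def torus_distance(x, y) -> float:
    """Euclidean distance on the unit torus [0, 1]^d."""
    return math.sqrt(
        sum(min(abs(a - b), 1.0 - abs(a - b)) ** 2 for a, b in zip(x, y))
    )


def sample_grand_canonical(eps, d=2, rng=None, max_tries=10_000):
    """Poisson number of spheres of diameter eps, conditioned on no overlap.

    Assumptions: uniform positions, standard Gaussian velocities,
    average particle number eps**(-(d-1)).
    """
    rng = rng or random.Random(0)
    mu = eps ** (-(d - 1))
    for _ in range(max_tries):
        n = sample_poisson(mu, rng)
        xs = [tuple(rng.random() for _ in range(d)) for _ in range(n)]
        no_overlap = all(
            torus_distance(xs[i], xs[j]) > eps
            for i in range(n)
            for j in range(i)
        )
        if no_overlap:
            # Velocities are not constrained by exclusion.
            vs = [tuple(rng.gauss(0.0, 1.0) for _ in range(d)) for _ in range(n)]
            return xs, vs
    raise RuntimeError("no admissible configuration found")


if __name__ == "__main__":
    positions, velocities = sample_grand_canonical(0.05, d=2)
    print(len(positions), "particles sampled")
```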

1.3 A statistical approach

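Once the initial random configuration is chosen, the hard sphere dynamics evolves deterministically (according to the hard sphere equations shown in Figure 1), and we seek to understand the statistical behavior of the empirical measure

$$\pi_t^\varepsilon := \frac{1}{\mu_\varepsilon}\sum_{i=1}^{N} \delta_{x - x_i^\varepsilon(t)}\,\delta_{v - v_i^\varepsilon(t)} \tag{1.2}$$

and its evolution in time.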

A law of large numbers

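The first step is to determine the law of large numbers, that is, the limiting distribution of a typical particle when the average number of particles $\mu_\varepsilon$ tends to infinity. In the case of $N$ identically distributed independent variables $(\eta_i)_{1 \le i \le N}$ of expectation $\mathbb{E}(\eta)$, the law of large numbers implies in particular that the mean converges in probability to the expectation:

$$\frac{1}{N}\sum_{i=1}^{N} \eta_i \xrightarrow[N \to \infty]{} \mathbb{E}(\eta).$$

Denoting by $\langle \pi, h \rangle$ the integral of a test function $h$ against a measure $\pi$, one can easily show the following convergence in probability:

$$\langle \pi_0^\varepsilon, h \rangle \xrightarrow[\mu_\varepsilon \to \infty]{} \int f^0(x,v)\,h(x,v)\,dx\,dv$$

under the grand canonical measure. The difficulty is to understand whether the initial quasi-independence propagates in time so that there exists a function $f = f(t,x,v)$ such that the following convergence in probability holds:

$$\langle \pi_t^\varepsilon, h \rangle \xrightarrow[\mu_\varepsilon \to \infty]{} \int f(t,x,v)\,h(x,v)\,dx\,dv \tag{1.3}$$

under the grand canonical measure (1.1) over the initial configurations. The most important result proving this convergence was obtained by Lanford [16 O. E. Lanford, III, Time evolution of large classical systems. In Dynamical systems, theory and applications (Rencontres, Battelle Res. Inst., Seattle, 1974), Lecture Notes in Phys. 38, Springer, Berlin, 1–111 (1975) ]: he showed that $f$ evolves according to a deterministic equation, namely the Boltzmann equation. This result will be explained in Section 2.2.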

A central limit theorem

The approximation (1.3) of the empirical measure neglects two types of errors. The first is the presence of correction terms that converge to 0 when $\mu_\varepsilon \to \infty$. The second is related to the probability, which must tend to zero, of configurations for which this convergence does not occur. A classical problem in statistical physics is to quantify these errors more precisely, by studying the fluctuations, i.e., the deviations between the empirical measure and its expectation. In the case of $N$ independent and identically distributed variables $(\eta_i)_{1 \le i \le N}$, the central limit theorem implies that the fluctuations are of order $O(1/\sqrt{N})$, and the following convergence in law holds true:

$$\sqrt{N}\,\Big(\frac{1}{N}\sum_{i=1}^{N} \eta_i - \mathbb{E}(\eta)\Big) \xrightarrow[N \to \infty]{} \mathcal{N}\big(0, \mathrm{Var}(\eta)\big),$$

where $\mathcal{N}(0, \mathrm{Var}(\eta))$ is the normal law of variance $\mathrm{Var}(\eta) = \mathbb{E}\big((\eta - \mathbb{E}(\eta))^2\big)$. In particular, at this scale, we find some randomness. Investigating the same fluctuation regime for the dynamics of hard sphere gases consists in considering the fluctuation field $\zeta_t^\varepsilon$ defined by duality, namely,

$$\langle \zeta_t^\varepsilon, h \rangle := \sqrt{\mu_\varepsilon}\,\Big(\langle \pi_t^\varepsilon, h \rangle - \mathbb{E}_\varepsilon\big(\langle \pi_t^\varepsilon, h \rangle\big)\Big), \tag{1.4}$$

where $h$ is a continuous function, and $\mathbb{E}_\varepsilon$ the expectation with respect to the grand canonical measure. At time $t = 0$, one can easily show that, under the grand canonical measure, the fluctuation field $\zeta_0^\varepsilon$ converges in the low density limit to a Gaussian field $\zeta_0$ with covariance

$$\mathbb{E}\big(\langle \zeta_0, h \rangle\,\langle \zeta_0, g \rangle\big) = \int f^0(x,v)\,h(x,v)\,g(x,v)\,dx\,dv.$$

A series of recent works [4 T. Bodineau, I. Gallagher, L. Saint-Raymond and S. Simonella, Fluctuation theory in the Boltzmann–Grad limit. J. Stat. Phys. 180, 873–895 (2020) , 6 T. Bodineau, I. Gallagher, L. Saint-Raymond and S. Simonella, Statistical dynamics of a hard sphere gas: Fluctuating Boltzmann equation and large deviations, preprint, arXiv:2008.10403; to appear in Ann. Math. (2023) , 7 T. Bodineau, I. Gallagher, L. Saint-Raymond and S. Simonella, Long-time correlations for a hard-sphere gas at equilibrium, preprint, arXiv:2012.03813; to appear in Comm. Pure and Appl. Math. (2023) , 5 T. Bodineau, I. Gallagher, L. Saint-Raymond and S. Simonella, Long-time derivation at equilibrium of the fluctuating Boltzmann equation, preprint, arXiv:2201.04514 (2022) ] has made it possible to characterize the fluctuation field (1.4) and to obtain a stochastic evolution equation governing the limit process. These results are presented in Section 3.3.
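The time-zero statement can be illustrated numerically in a simplified setting. The sketch below makes several assumptions that are not in the text: it works in dimension one, ignores the exclusion condition (so the particles form an ideal Poisson system), takes positions uniform on $[0,1]$, and uses the test function $h(x) = \cos(2\pi x)$, for which the mean is 0 and the predicted variance of the fluctuation field is $\int h^2 = 1/2$. The function names are hypothetical.

```python
import math
import random


def sample_poisson(mean: float, rng: random.Random) -> int:
    """Knuth's multiplication method; adequate for moderate means."""
    threshold = math.exp(-mean)
    count, product = 0, rng.random()
    while product > threshold:
        count += 1
        product *= rng.random()
    return count


def fluctuation_samples(mu: float, n_samples: int, rng: random.Random):
    """Samples of sqrt(mu) * (<pi, h> - E<pi, h>) for an ideal Poisson gas."""
    def h(x: float) -> float:
        return math.cos(2.0 * math.pi * x)

    expected_pairing = 0.0  # integral of h over [0, 1]
    samples = []
    for _ in range(n_samples):
        n = sample_poisson(mu, rng)
        # Empirical measure normalized by the average number mu, not by n.
        pairing = sum(h(rng.random()) for _ in range(n)) / mu
        samples.append(math.sqrt(mu) * (pairing - expected_pairing))
    return samples


def variance(values) -> float:
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values) / len(values)


if __name__ == "__main__":
    rng = random.Random(0)
    zeta = fluctuation_samples(mu=200.0, n_samples=2000, rng=rng)
    print("empirical variance:", variance(zeta), "predicted: 0.5")
```

For a Poisson number of particles the variance of the rescaled sum equals $\int f^0 h^2$ exactly for every value of the mean, so the only error in this experiment is the sampling error.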

On large deviations

The last question generally studied in a classical probabilistic approach is that of the quantification of rare events, i.e., the estimation of the probability of observing an atypical behavior (which deviates macroscopically from the mean). For independent and identically distributed random variables, this probability is exponentially small, and it is therefore natural to study the asymptotics

$$I(m) := \lim_{\delta \to 0}\,\lim_{N \to \infty} -\frac{1}{N}\log \mathbb{P}\Big(\Big|\frac{1}{N}\sum_{i=1}^{N} \eta_i - m\Big| < \delta\Big).$$

The limit $I(m)$ is called the large deviation functional and can be expressed as the Legendre transform of the log-Laplace transform $\lambda \mapsto \log \mathbb{E}\big(\exp(\lambda \eta)\big)$. To generalize this statement to correlated variables in a gas of hard spheres, it is necessary to compute the log-Laplace transform of the empirical measure on deterministic trajectories, which requires extremely precise control of the dynamical correlations. Note that, at time $t = 0$, under the grand canonical measure, one can show that, for any density $\varphi^0$,

$$\lim_{\delta \to 0}\,\lim_{\mu_\varepsilon \to \infty} -\frac{1}{\mu_\varepsilon}\log \mathbb{P}_\varepsilon\big(d(\pi_0^\varepsilon, \varphi^0) \le \delta\big) = \int \Big(\varphi^0 \log\frac{\varphi^0}{f^0} - \varphi^0 + f^0\Big)\,dx\,dv,$$

where $d$ is a distance on the space of measures. The dynamical cumulant method introduced in [4, 6] is a key tool for computing the exponential moments of the hard sphere distribution, thus obtaining the dynamical equivalent of this result for short times. We give an overview of these techniques in Section 3.
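For independent variables, the Legendre transform of the log-Laplace transform can be evaluated numerically and compared with the known closed forms. The sketch below is an illustration only: it maximizes over a fixed grid of values of the dual parameter (an assumption made for simplicity, adequate when the maximizer lies inside the grid), and checks two textbook cases, the standard Gaussian with rate function $m^2/2$ and the fair Bernoulli variable. The function names are hypothetical.

```python
import math


def log_laplace_gaussian(lam: float) -> float:
    """log E[exp(lam * eta)] for a standard Gaussian eta."""
    return 0.5 * lam * lam


def log_laplace_bernoulli(lam: float) -> float:
    """log E[exp(lam * eta)] for eta uniform on {0, 1}."""
    return math.log((1.0 + math.exp(lam)) / 2.0)


def legendre_transform(log_laplace, m, lam_min=-10.0, lam_max=10.0, steps=20001):
    """Grid approximation of sup over lam of (lam * m - log_laplace(lam))."""
    best = -math.inf
    for k in range(steps):
        lam = lam_min + (lam_max - lam_min) * k / (steps - 1)
        best = max(best, lam * m - log_laplace(lam))
    return best


if __name__ == "__main__":
    print(legendre_transform(log_laplace_gaussian, 1.0))    # close to 0.5
    print(legendre_transform(log_laplace_bernoulli, 0.75))  # relative entropy
```

In the Bernoulli case the result is the relative entropy $m\log(2m) + (1-m)\log(2(1-m))$, the scalar analogue of the entropy-type functional that appears for the empirical measure at time zero.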

2 Typical behavior: A law of large numbers

2.1 Boltzmann’s amazing intuition

The equation that rules the typical evolution of a gas of hard spheres was heuristically proposed by Boltzmann [8] about a century before its rigorous derivation by Lanford [16], as the “limit” of the particle system when $\mu_\varepsilon \to \infty$. Boltzmann’s revolutionary idea was to write an evolution equation for the probability density $f = f(t,x,v)$ giving the proportion of particles at position $x$ with velocity $v$ at time $t$. In the absence of collisions, and in an unbounded domain, this density $f$ would be transported along the physical trajectories $x(t) = x(0) + vt$, which means that $f(t,x,v) = f^0(x - vt, v)$. The challenge is to take into account the statistical effect of collisions. As long as the size of the particles is negligible, one can consider that these collisions are pointwise in both $t$ and $x$. Boltzmann proposed a quite intuitive counting:

- the number of particles of velocity $v$ increases when a particle of velocity $v'$ collides with a particle of velocity $v_1'$, and takes the velocity $v$ (Figure 1 and (2.2));

- the number of particles of velocity $v$ decreases when a particle of velocity $v$ collides with a particle of velocity $v_1$, and is deflected to another velocity.

The probability of these jumps in velocity is described by a transition rate, called the collision cross section. For interactions between hard spheres, it is given by $\big((v - v_1)\cdot\omega\big)_+$, where $v - v_1$ is the relative velocity of the colliding particles, and $\omega$ is the deflection vector, uniformly distributed in the unit sphere $\mathbb{S}^{d-1} \subset \mathbb{R}^d$. The fundamental assumption of Boltzmann’s theory is that, in a rarefied gas, the correlations between two colliding particles must be very small. Therefore, the joint probability of having two pre-colliding particles of velocities $v$ and $v_1$ at position $x$ at time $t$ should be well approximated by the product $f(t,x,v)\,f(t,x,v_1)$. This independence property is called the molecular chaos hypothesis. The Boltzmann equation then reads

$$\partial_t f + v\cdot\nabla_x f = C(f,f), \tag{2.1}$$

where

$$C(f,f)(t,x,v) := \int_{\mathbb{R}^d}\int_{\mathbb{S}^{d-1}} \Big(f(t,x,v')\,f(t,x,v_1') - f(t,x,v)\,f(t,x,v_1)\Big)\,\big((v - v_1)\cdot\omega\big)_+\,d\omega\,dv_1,$$

with the scattering rules

$$v' = v - \big((v - v_1)\cdot\omega\big)\,\omega, \qquad v_1' = v_1 + \big((v - v_1)\cdot\omega\big)\,\omega \tag{2.2}$$

being analogous to those introduced in Figure 1, with the important difference that $\omega$ is now a random vector chosen uniformly in the unit sphere $\mathbb{S}^{d-1}$: indeed, the relative position of the colliding particles disappeared in the limit $\varepsilon \to 0$. As a result, the Boltzmann equation is singular because it involves a product of densities at a single point $x$.

Boltzmann’s idea of reducing the Hamiltonian dynamics describing atomic behavior to a kinetic equation was revolutionary and paved the way to the description of non-equilibrium phenomena by mesoscopic equations. However, the Boltzmann equation (2.1) was at first strongly criticized because it seems to violate some fundamental physical principles. It actually predicts an irreversible evolution in time: it has a Lyapunov functional, called entropy, defined by $S(t) := -\iint f\log f(t,x,v)\,dx\,dv$, such that $\frac{d}{dt}S(t) \ge 0$, with equality if and only if the gas is in thermodynamic equilibrium. The Boltzmann equation thus provides a quantitative formulation of the second law of thermodynamics. But at first glance, this irreversibility seems incompatible with the fact that the dynamics of hard spheres is governed by a Hamiltonian system, i.e., a system of ordinary differential equations that is completely reversible in time. Soon after Boltzmann postulated his equation, these two different behaviors were considered, by Loschmidt, as a paradox and an obstruction to Boltzmann’s theory. A fully satisfactory mathematical explanation of this question remained elusive for almost a century, until the role of probabilities was precisely identified: the underlying dynamics is reversible, but the description that is given of this dynamics is only partial and is therefore not reversible.
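The scattering rules can be checked on random inputs. The sketch below assumes the standard hard-sphere rule for unit masses, $v' = v - ((v - v_1)\cdot\omega)\,\omega$ and $v_1' = v_1 + ((v - v_1)\cdot\omega)\,\omega$ with $\omega$ a unit vector; under this assumption momentum and kinetic energy are conserved, and applying the rule twice with the same $\omega$ returns the original velocities. The function names are hypothetical.

```python
import math
import random


def scatter(v, v1, omega):
    """Hard-sphere scattering for unit masses and unit deflection vector omega."""
    dot = sum((a - b) * w for a, b, w in zip(v, v1, omega))
    v_new = tuple(a - dot * w for a, w in zip(v, omega))
    v1_new = tuple(b + dot * w for b, w in zip(v1, omega))
    return v_new, v1_new


def random_unit_vector(d: int, rng: random.Random):
    """Uniform direction on the unit sphere, via a normalized Gaussian vector."""
    g = [rng.gauss(0.0, 1.0) for _ in range(d)]
    norm = math.sqrt(sum(x * x for x in g))
    return tuple(x / norm for x in g)


def kinetic_energy(*velocities) -> float:
    return 0.5 * sum(x * x for v in velocities for x in v)


if __name__ == "__main__":
    rng = random.Random(0)
    v, v1 = (1.0, 0.5, -0.2), (-0.3, 0.1, 0.7)
    omega = random_unit_vector(3, rng)
    w, w1 = scatter(v, v1, omega)
    print("momentum before:", [a + b for a, b in zip(v, v1)])
    print("momentum after: ", [a + b for a, b in zip(w, w1)])
    print("energy before/after:", kinetic_energy(v, v1), kinetic_energy(w, w1))
```

The involution property reflects the microscopic reversibility of a single collision, in contrast with the irreversibility of the kinetic equation discussed in the text.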

2.2 Typical behavior: Lanford’s theorem

Lanford’s result [16] shows in which sense the Boltzmann equation (2.1) is a good approximation of the hard sphere dynamics. It can be stated as follows (this is not exactly the original formulation; see in particular Section 2.4 below for comments).

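Theorem 2.1 (Lanford). In the low density limit ($\mu_\varepsilon \to \infty$ with $\mu_\varepsilon\varepsilon^{d-1} = 1$), the empirical measure $\pi_t^\varepsilon$ defined by (1.2) concentrates on the solution of the Boltzmann equation (2.1): for any bounded and continuous function $h$,

$$\forall \delta > 0, \qquad \lim_{\mu_\varepsilon \to \infty} \mathbb{P}_\varepsilon\Big(\Big|\langle \pi_t^\varepsilon, h \rangle - \int f(t,x,v)\,h(x,v)\,dx\,dv\Big| \ge \delta\Big) = 0,$$

on a time interval $[0, T_L]$ that depends only on the initial distribution $f^0$.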

The time of validity $T_L$ of the approximation is found to be a fraction of the average time between two successive collisions for a typical particle. This time is large enough for the microscopic system to undergo a large number of collisions (of the order of the number of particles $\mu_\varepsilon$), but (much) too small to see phenomena such as relaxation to (local) thermodynamic equilibrium, and in particular hydrodynamic regimes. Physically, we do not expect this time to be critical, in the sense that the dynamics would change in nature afterwards. In fact, in practice, Boltzmann’s equation is used in many applications (such as spacecraft reentry calculations) without time restrictions. However, it is important to note that a time restriction might not be only technical: from a mathematical point of view, one cannot exclude that the Boltzmann equation presents singularities (typically spatial concentrations that would prevent the collision term from making sense, and that would also locally contradict the low density assumption). At present, the problem of extending Lanford’s convergence result to longer times still faces serious obstacles.

2.3 Heuristics of Lanford’s proof

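Let us informally explain how the Boltzmann equation (2.1) can be predicted from the dynamics of the particles. The goal is to transport via the dynamics the initial grand canonical measure (1.1) and then to project this measure at time $t$ onto the 1-particle phase space. We thus define by duality the density $F_1^\varepsilon(t,x,v)$ of a typical particle with respect to a test function $h$ by

$$\int F_1^\varepsilon(t,x,v)\,h(x,v)\,dx\,dv := \mathbb{E}_\varepsilon\big(\langle \pi_t^\varepsilon, h \rangle\big), \tag{2.3}$$

where $\langle \pi_t^\varepsilon, h \rangle$ denotes the integral of $h$ against the empirical measure (1.2).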
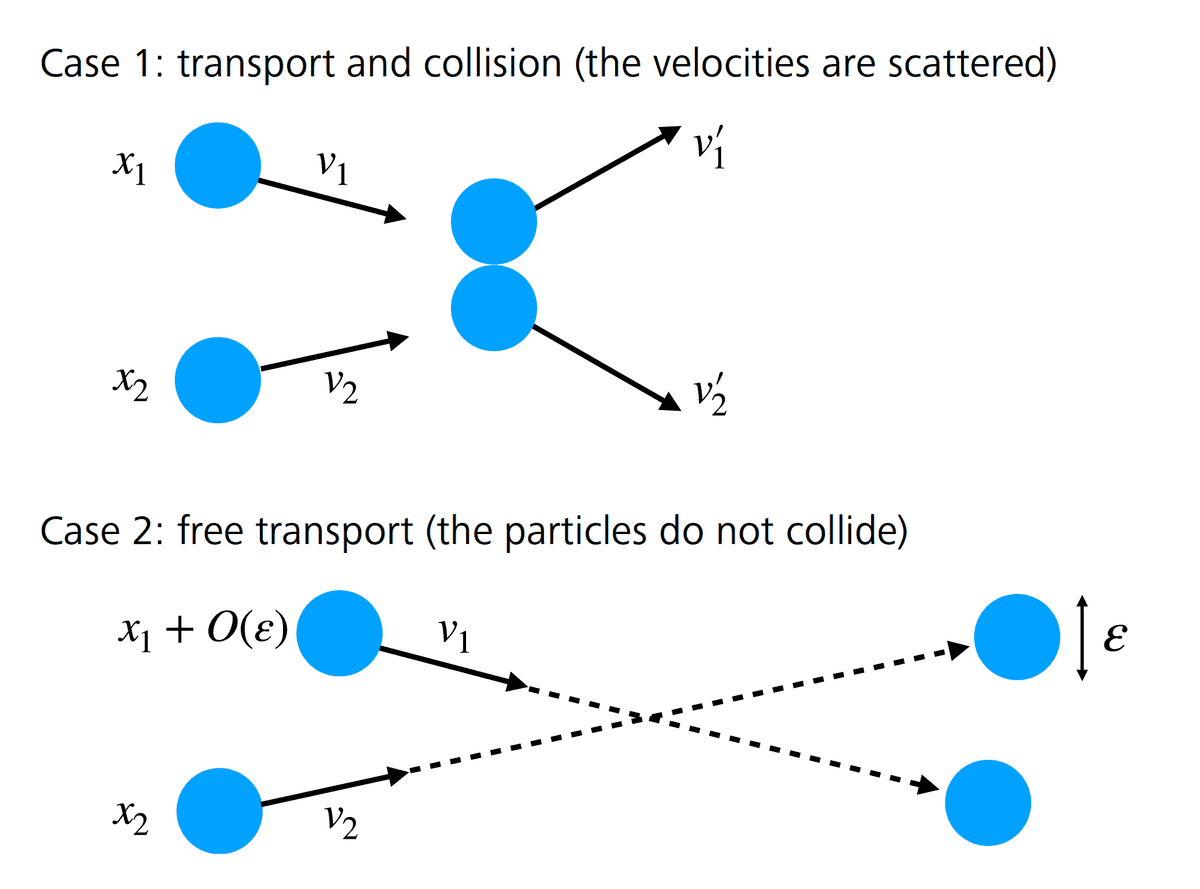

Theorem 2.1 states that converges to the solution to the Boltzmann equation in the low density limit. So let be a regular and bounded function on and consider the evolution of the empirical measure during a short time interval . Separating the different contributions according to the number of collisions, we can write

To simplify, denotes the coordinates of the -th particle at time . Since the left-hand side of (2.4) formally converges when to the time derivative of , we will analyze the limit of the first two terms in the right-hand side of (2.4), which should lead to a transport term and a collision term as in (2.1). We will also explain why the remainder terms, involving two or more collisions in the short time interval , tend to 0 with (showing that they are of order ). Since the particles move in a straight line and at constant speed in the absence of collisions, if the distribution is sufficiently regular, the definition (2.3) of formally implies that, when tends to 0, the first term in the right-hand side of (2.4) is asymptotically equal to

The transport term in (2.1) is thus well obtained in the limit. Let us now consider the second term in the right-hand side of (2.4). Two particles of configurations and at time collide at a later time if there exists such that

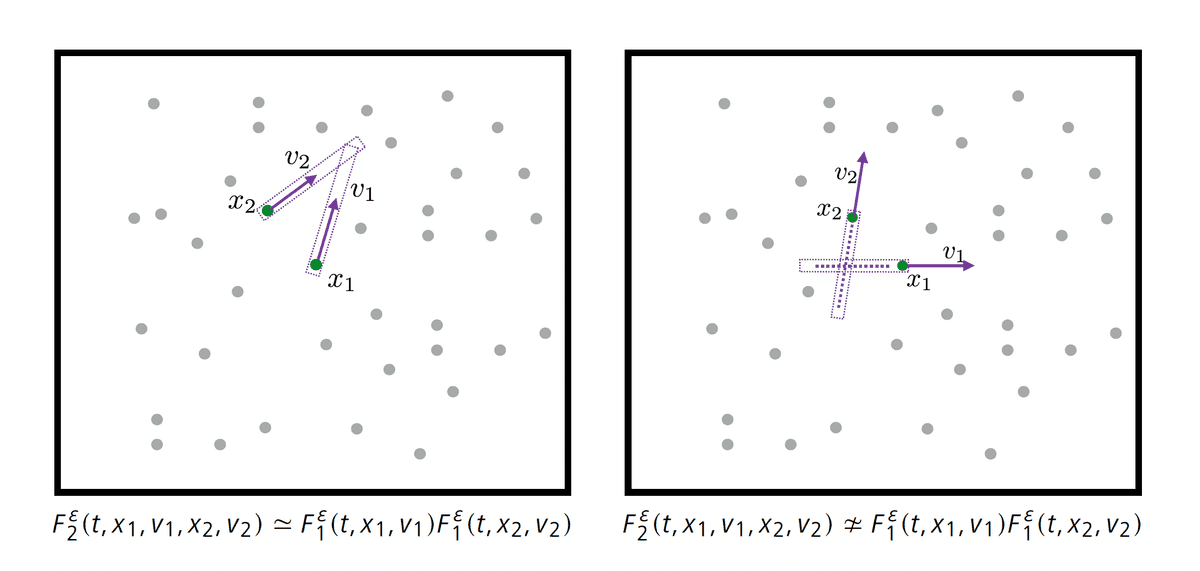

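This implies that their relative position must belong to a tube of width $\varepsilon$, oriented along their relative velocity, whose length is the duration $\delta$ of the time interval multiplied by their relative speed. The Lebesgue measure of this set is of the order $\delta\varepsilon^{d-1}$ (neglecting large velocities). More generally, a sequence of $k-1$ collisions between $k$ particles imposes $k-1$ constraints of the previous form, and this event can be shown to have probability less than $(\delta\varepsilon^{d-1})^{k-1}$ (again neglecting large velocities). Since there are, on average, $\mu_\varepsilon^{k}$ ways to choose these $k$ colliding particles, and since the empirical measure carries the normalization $1/\mu_\varepsilon$, we deduce that the occurrence of $k-1$ collisions in (2.4) has a probability of order $(\mu_\varepsilon\delta\varepsilon^{d-1})^{k-1} = \delta^{k-1}$ under the scaling $\mu_\varepsilon\varepsilon^{d-1} = 1$. This explains why the probability of having $k \ge 3$ colliding particles can be estimated by $O(\delta^2)$ and thus can be neglected in (2.4). It remains to examine more closely the collision term involving two particles in (2.4), in order to obtain the collision operator of the Boltzmann equation (2.1). This term involves the two-particle correlation function $F_2^\varepsilon$. For any $k \ge 1$, we define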

where are all distinct and . We can then show that, in the limit ,

where

The key step in closing the equation is the molecular chaos assumption postulated by Boltzmann, which states that the pre-collisional particles remain independently distributed at all times so that, with the convention (2.5) fixing the sign of , we have

When the diameter of the spheres tends to 0, the positions of the two colliding particles coincide and the scattering parameter $\omega$ becomes a random parameter. Assuming that the one-particle density converges, its limit must satisfy the Boltzmann equation (2.1). Establishing the factorization (2.8) rigorously uses a different strategy, elaborated by Lanford [16], then completed and improved over the years: see the monographs [25 H. Spohn, Large scale dynamics of interacting particles. Texts and Monographs in Physics, Springer, Berlin (2012) , 11 C. Cercignani, R. Illner and M. Pulvirenti, The mathematical theory of dilute gases. Applied Mathematical Sciences 106, Springer, New York (1994) , 10 C. Cercignani, V. I. Gerasimenko and D. Y. Petrina, Many-particle dynamics and kinetic equations. Mathematics and its Applications 420, Kluwer Academic Publishers Group, Dordrecht (1997) ]. In the last few years, several quantitative convergence results have been established, and the proofs have been extended to the case of somewhat more general domains, potentials with compact support, or with super-exponential decay at infinity: see [1 N. Ayi, From Newton’s law to the linear Boltzmann equation without cut-off. Comm. Math. Phys. 350, 1219–1274 (2017) , 12 T. Dolmaire, About Lanford’s theorem in the half-space with specular reflection. Kinet. Relat. Models 16, 207–268 (2023) , 13 I. Gallagher, L. Saint-Raymond and B. Texier, From Newton to Boltzmann: Hard spheres and short-range potentials. Zurich Lectures in Advanced Mathematics, EMS, Zürich (2013) , 17 C. Le Bihan, Boltzmann–Grad limit of a hard sphere system in a box with isotropic boundary conditions. Discrete Contin. Dyn. Syst. 42, 1903–1932 (2022) , 21 M. Pulvirenti, C. Saffirio and S. Simonella, On the validity of the Boltzmann equation for short range potentials. Rev. Math. Phys. 26, Article ID 1450001 (2014) , 22 M. Pulvirenti and S. Simonella, The Boltzmann–Grad limit of a hard sphere system: Analysis of the correlation error. Invent. Math. 207, 1135–1237 (2017) ].

2.4 On the irreversibility

In this section, we will show that the answer to the irreversibility paradox lies in the molecular chaos hypothesis (2.8), which is valid only for specific configurations.

In fact, the notion of convergence that appears in the statement of Theorem 2.1 differs from the one used in Lanford’s proof: Theorem 2.1 states the convergence of the observables, i.e., the convergence in the sense of measures, since the test function must be continuous. This convergence is rather weak and is not sufficient to ensure the stability of the collision term in the Boltzmann equation because this term involves traces. In the proof of Lanford’s theorem, we consider all $k$-particle correlation functions defined by (2.6) and show that, in the low density limit, each of these correlation functions converges uniformly outside a set of negligible measure. Thus, the proof uses a much stronger notion of convergence than that stated in Theorem 2.1.

Moreover, the set of bad microscopic configurations (on which the correlation functions do not converge) is somehow transverse to the set of pre-collisional configurations (as can be seen in Figure 3; two particles in the bad set tend to move away from each other so that they are unlikely to collide). The convergence defect is therefore not an obstacle to passing to the limit in the collision term (correlation functions are only evaluated there in pre-collisional configurations). However, these singular sets encode important information about the dynamical correlations: by neglecting them, it is no longer possible to go back in time and reconstruct the backward dynamics. Thus, by discarding the microscopic information encoded in these singular sets, one can only obtain an irreversible kinetic picture that is far from describing the full microscopic dynamics.

3 Fluctuations and large deviations

3.1 Corrections to the chaos assumption

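Returning to equation (2.7) on the one-particle density $F_1^\varepsilon$, we can see that, apart from the small spatial shifts of the collision term, the deviations from the Boltzmann dynamics are due to the factorization defect $F_2^\varepsilon - F_1^\varepsilon \otimes F_1^\varepsilon$, where $F_2^\varepsilon$ is the two-particle correlation function. A geometric interpretation of this defect is given below.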

Let us first describe the geometric representation of . We look at the history of particle located at position with velocity at time , in order to characterize all initial configurations that contribute to . The particle performs a uniform rectilinear motion until it collides with another particle, called particle , at a time . This collision can be of two types: either a physical collision (with deflection), or a mathematical artifact arising from the loss term in equation (2.7) (the particles touch but are not deflected). From then on, to understand the history of particle , we need to trace the history of both particles and before time . From time on, both particles perform uniform rectilinear motions until one of them collides with a new particle at time , and so on, until time 0. Note that, between the times of collision with new particles, the particles can collide with each other: this will be called recollision. The history of the particle can be encoded using a rooted tree whose vertices correspond to the different collisions that took place in the history of and are indexed by the parameters of these collisions. An example is shown in Figure 4. The root of the tree is indexed by . If is the total number of collisions, and are the times of the collisions, one can order the particles so that, at time , , the collision occurs between the -th particle and the -th particle, where (necessarily, at time ). Then the branching of the tree associated with the -th collision is indexed by the relation , where , together with the collision parameters , where is the deflection vector. The tensor product is then described by two independent collision trees, with roots and , and respectively and branches.

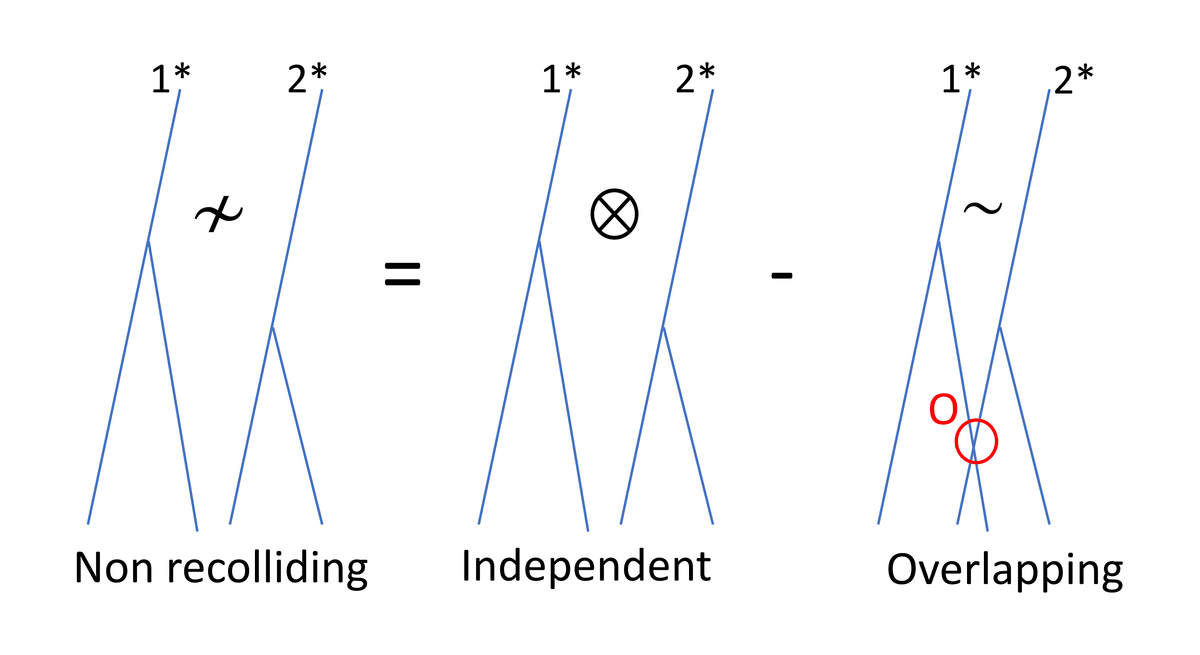

Now consider the second-order correlation function: can be described by a collision graph constructed from two collision trees with roots and , and branches. The main difference with is that the particles in the and trees may interact. We can thus decompose the trees constituting into two categories: those such that there is at least one collision involving a particle from each tree (such a recollision will be called external), and the others (Figure 5).

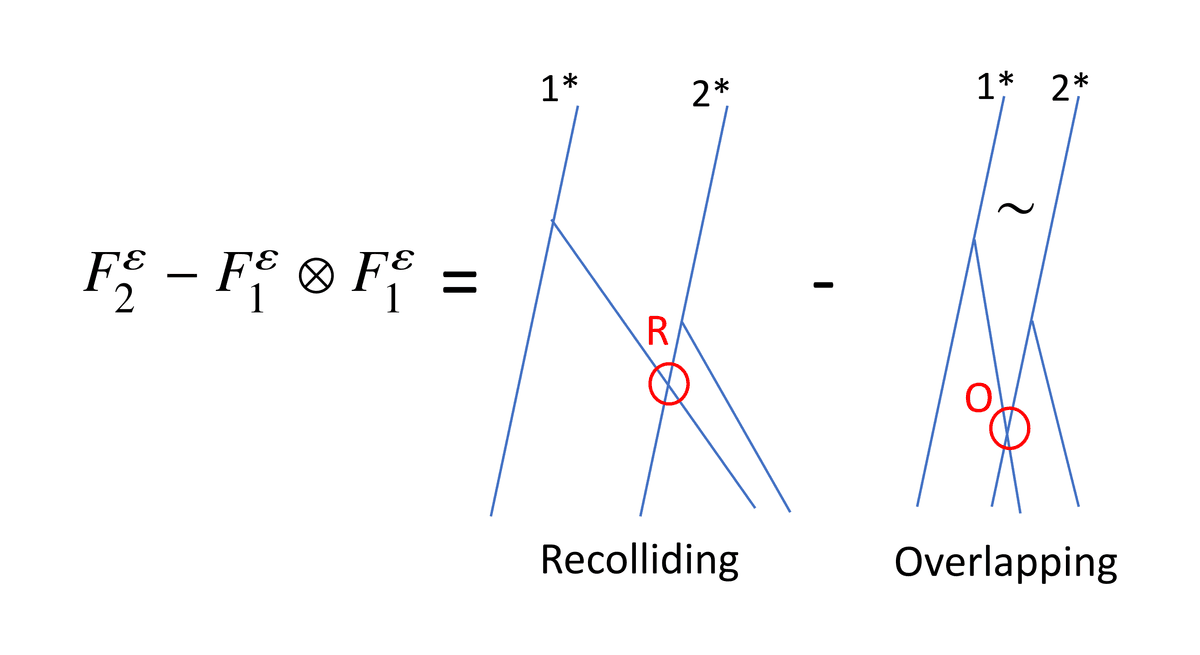

Note, however, that two collision-free trees do not correspond to independent trees, precisely because of the dynamical exclusion condition. This exclusion condition can itself be decomposed as (Figure 6), where means that there is an overlap at some point between a particle from the tree and a particle from the tree. This decomposition is a pure mathematical artifact, and the overlap condition does not affect the dynamics (the overlapping particles are not deflected).

Let us now define the second-order rescaled cumulant

$$f_2^\varepsilon := \mu_\varepsilon\,\big(F_2^\varepsilon - F_1^\varepsilon \otimes F_1^\varepsilon\big). \tag{3.1}$$

The previous discussion indicates that this cumulant is represented by trees that are coupled by external collisions or overlaps (Figure 7). In view of definition (3.1) and the discussion in Section 2.3 giving an estimate of the Lebesgue measure of configurations giving rise to a collision, one can expect $f_2^\varepsilon$ to be uniformly bounded in $L^1$ and therefore to have a limit $f_2$ in the sense of measures. One can prove in addition that $f_2$ corresponds to trees with exactly one external recollision or overlap on $[0,t]$: any other interaction between the trees gives rise to additional smallness and is therefore negligible.

Remark 3.1. The initial measure does not factorize exactly because of the static exclusion condition. Thus, the initial data also induce a small contribution to the second-order cumulant, but this contribution is significantly smaller than the dynamical correlations (by a factor $\varepsilon$).

3.2 The cumulant generating function

For a Gaussian process, the first two correlation functions and determine completely all other -particle correlation functions , but in general, part of the information is encoded in the cumulants of higher order ()

where is the set of partitions of into parts with , being the cardinality of the set and . Each cumulant encodes finer and finer correlations. Contrary to the correlation functions , the cumulants do not duplicate the information which is already encoded at lower orders. From a geometric point of view, we can extend the analysis of the previous section and show that the cumulant of order can be represented by trees that are completely connected either by external collisions, or by overlaps (Figure 8). These dynamical correlations can be classified by a signed graph with vertices representing the different trees, coding tree collisions (the corresponding edges take a + sign) and overlaps (the corresponding edges take a sign). We can then systematically extract a minimally connected graph by identifying “aggregations” of tree collisions or overlaps. We then expect to decompose into a sum of terms, where the factor is the number of trees with numbered vertices (from Cayley’s formula). For each given signed minimally connected graph, the collision/overlap conditions correspond to independent constraints on the configuration at time . Therefore, neglecting the issue of large velocities, this contribution to the cumulant has a Lebesgue measure of size , and we derive the estimate

A geometric argument similar to the one developed in Lanford’s proof and recalled in the analysis of the second-order cumulant above shows that converges to a limiting cumulant and that only graphs with exactly external collisions or overlaps (and no cycles) contribute in the limit. Note further that a classical and rather simple calculation (based on the series expansions of the exponential and logarithm) shows that the cumulants are nothing but the coefficients of the series expansion of the exponential moment:

The quantity is called the cumulant generating function. Estimate (3.2) provides the analyticity of in short time as a function of , and this uniformly with respect to (sufficiently small). The limit of can then be determined as a series in terms of the limiting cumulants ,

In a suitable functional setting [5], it can be shown that this functional satisfies a Hamilton–Jacobi equation

with initial condition and Hamiltonian given by

where . We use here notation (2.2) for the pre-collisional velocities and the definition

The successive derivatives of this functional being precisely the limit cumulants , the successive derivatives of the Hamilton–Jacobi equation provide the evolution equations of these cumulants: for example, differentiating this equation once produces the Boltzmann equation, differentiating it twice produces the equation of the covariance described in the next paragraph.
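The combinatorics linking correlation functions and cumulants has a scalar analogue that is easy to test. The sketch below is an illustration only and does not reproduce the rescaled cumulants of the text: it uses the classical moment-to-cumulant formula for a single random variable, a sum over set partitions in which a partition with $\ell$ blocks carries the weight $(-1)^{\ell-1}(\ell-1)!$. It is checked on a Gaussian variable, for which all cumulants of order three and higher vanish. The function names are hypothetical.

```python
import math


def set_partitions(items):
    """Yield all partitions of the list `items` as lists of blocks."""
    if not items:
        yield []
        return
    first, rest = items[0], items[1:]
    for partition in set_partitions(rest):
        # Insert `first` into each existing block in turn ...
        for i in range(len(partition)):
            yield partition[:i] + [[first] + partition[i]] + partition[i + 1:]
        # ... or give it a block of its own.
        yield [[first]] + partition


def cumulant(moments, n):
    """n-th cumulant from the raw moments, where moments[k] = E[X**k]."""
    total = 0
    for partition in set_partitions(list(range(n))):
        blocks = len(partition)
        term = (-1) ** (blocks - 1) * math.factorial(blocks - 1)
        for block in partition:
            term *= moments[len(block)]
        total += term
    return total


if __name__ == "__main__":
    # Raw moments of a Gaussian with mean 1 and variance 2.
    gaussian_moments = {1: 1, 2: 3, 3: 7, 4: 25}
    print([cumulant(gaussian_moments, n) for n in range(1, 5)])
```

The number of partitions grows like the Bell numbers, which is one way to see why direct expansions of high-order cumulants require the careful combinatorial bookkeeping described in this section.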

3.3 Fluctuations

Control of the cumulant generating function makes it possible, in particular, to obtain the convergence of the fluctuation field defined in (1.4) and thus to analyze the dynamical fluctuations over a time of the same order of magnitude as the convergence time of Theorem 2.1.

The fluctuation field converges, in the low density limit and on a time interval , towards a process , solution to the fluctuating Boltzmann equation

where is the solution at time to the Boltzmann equation (2.1) with initial data , and is a centered Gaussian noise delta-correlated in with covariance

where .

The limiting process (3.5) was conjectured by Spohn in [25], a reference that also gives a broad overview of the theory of fluctuations in physics. In the context of dynamics with random collisions, a similar result was shown by Rezakhanlou in [24 F. Rezakhanlou, Kinetic limits for interacting particle systems. In Entropy methods for the Boltzmann equation, Lecture Notes in Math. 1916, Springer, Berlin, 71–105 (2008) ]. In the deterministic setting, the noise obtained in the limit is a consequence of the asymptotically unstable structure of the microscopic dynamics (Figure 2) combined with the randomness of the initial data at small scales.

3.4 Large deviations

The strength of the cumulant generating function becomes really apparent at the level of large deviations, i.e., for very improbable trajectories that are at a “distance” from the averaged dynamics: roughly speaking, we can show that the probability of observing an empirical distribution close to the density during the time interval decays exponentially fast with a rate quantified by a functional which evaluates the cost of this deviation in the low density asymptotics

The proximity between and is measured in the weak topology on the Skorokhod space of measure-valued functions. A precise formulation of (3.6) and a proof can be found in [6 T. Bodineau, I. Gallagher, L. Saint-Raymond and S. Simonella, Statistical dynamics of a hard sphere gas: Fluctuating Boltzmann equation and large deviations, preprint, arXiv:2008.10403; to appear in Ann. Math. (2023) ]. The result of [6 T. Bodineau, I. Gallagher, L. Saint-Raymond and S. Simonella, Statistical dynamics of a hard sphere gas: Fluctuating Boltzmann equation and large deviations, preprint, arXiv:2008.10403; to appear in Ann. Math. (2023) ] can be summarized as follows: for a class of functions in a neighborhood of the solution to the Boltzmann equation, there exists a time interval where the asymptotic (3.6) is characterized by a functional obtained by a certain Legendre transform of the Hamiltonian defined by (3.4). This functional is identical to the one conjectured in [24 F. Rezakhanlou, Kinetic limits for interacting particle systems. In Entropy methods for the Boltzmann equation, Lecture Notes in Math. 1916, Springer, Berlin, 71–105 (2008) , 9 F. Bouchet, Is the Boltzmann equation reversible? A large deviation perspective on the irreversibility paradox. J. Stat. Phys. 181, 515–550 (2020) ], by analogy with stochastic collision models of Kac type [23 F. Rezakhanlou, Large deviations from a kinetic limit. Ann. Probab. 26, 1259–1340 (1998) , 18 C. Léonard, On large deviations for particle systems associated with spatially homogeneous Boltzmann type equations. Probab. Theory Related Fields 101, 1–44 (1995) , 15 D. Heydecker, Large deviations of Kac’s conservative particle system and energy non-conserving solutions to the Boltzmann equation: A counterexample to the predicted rate function, preprint, arXiv:2103.14550 (2021) , 2 G. Basile, D. Benedetto, L. Bertini and C. Orrieri, Large deviations for Kac-like walks. J. Stat. Phys. 184, Paper No. 10 (2021) ]. 
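To illustrate how a rate functional can arise as a Legendre transform, here is a sketch on a much simpler problem than the one treated in [6]: sums of independent fair coin flips (Cramér's theorem). This toy model is an assumption made purely for illustration. The code computes the Legendre transform of the cumulant generating function numerically, compares it with the closed-form relative entropy, and checks the exponential decay rate against an exact binomial tail. All names are hypothetical.

```python
import math


def log_mgf(lam):
    """Cumulant generating function of a fair coin flip with values in {0, 1}."""
    return math.log((1.0 + math.exp(lam)) / 2.0)


def legendre_transform(x, lo=-50.0, hi=50.0, iters=200):
    """Compute sup over lam of (lam * x - log_mgf(lam)) by ternary search.

    The objective is concave in lam, so ternary search converges to the supremum
    as long as the maximizer lies in [lo, hi].
    """
    def objective(lam):
        return lam * x - log_mgf(lam)

    for _ in range(iters):
        m1 = lo + (hi - lo) / 3.0
        m2 = hi - (hi - lo) / 3.0
        if objective(m1) < objective(m2):
            lo = m1
        else:
            hi = m2
    return objective((lo + hi) / 2.0)


def relative_entropy(x):
    """Closed-form rate function: relative entropy of Bernoulli(x) w.r.t. Bernoulli(1/2)."""
    return x * math.log(2.0 * x) + (1.0 - x) * math.log(2.0 * (1.0 - x))


def exact_tail_rate(n, k_min):
    """Return -(1/n) log P(S_n >= k_min) for n fair coin flips, using exact integers."""
    tail = sum(math.comb(n, k) for k in range(k_min, n + 1))
    return -(math.log(tail) - n * math.log(2.0)) / n


# Compare the numerical Legendre transform with the closed form.
for x in (0.1, 0.3, 0.5, 0.7, 0.9):
    print(f"x = {x}: Legendre = {legendre_transform(x):.6f}, "
          f"entropy = {relative_entropy(x):.6f}")

# Exponential decay rate of an atypical empirical mean (here 0.7) for n = 2000.
empirical_rate = exact_tail_rate(2000, 1400)
print(f"exact rate at n = 2000: {empirical_rate:.5f}, "
      f"limit: {relative_entropy(0.7):.5f}")
```

The small gap between the finite-size rate and its limit reflects sub-exponential corrections, which disappear on the exponential scale considered in large deviation statements.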
Let us also note that the limiting SPDE (3.5) could be predicted by the same analogy with Kac’s model for which collisions are modeled by a Markov process [19 J. Logan and M. Kac, Fluctuations and the Boltzmann equation. I. Phys. Rev. A 13, 458–470 (1976) , 20 S. Meleard, Convergence of the fluctuations for interacting diffusions with jumps associated with Boltzmann equations. Stochastics Stochastics Rep. 63, 195–225 (1998) ]. Thus, the statistical analysis of the fluctuations and large deviations of the empirical measure confirms the robustness of Boltzmann’s intuition (cf. Section 2.1): even on exponentially small scales, the behavior of the empirical measure of a hard sphere gas is identical to that of a model of particles with random collisions depending only on the local density. This does not contradict the Hamiltonian structure of the microscopic dynamics. Memory effects persist, but they are encoded in ways that are “transverse” to the empirical measure (or at different spatial scales).
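For readers unfamiliar with stochastic collision models, the following sketch simulates a minimal version of Kac's model. It relies on assumptions that are not taken from the text: velocities are one-dimensional, the model is spatially homogeneous, only the discrete jump chain is simulated (the exponential waiting times between collisions are ignored), and the collision angle is uniform. Function names and parameter values are illustrative.

```python
import math
import random


def kac_collision_step(velocities, rng):
    """Pick a random pair and rotate its velocities by a uniform random angle."""
    i, j = rng.sample(range(len(velocities)), 2)
    theta = rng.uniform(0.0, 2.0 * math.pi)
    c, s = math.cos(theta), math.sin(theta)
    vi, vj = velocities[i], velocities[j]
    # A rotation in the (v_i, v_j) plane preserves v_i^2 + v_j^2.
    velocities[i] = c * vi + s * vj
    velocities[j] = -s * vi + c * vj


def kinetic_energy(velocities):
    """Total kinetic energy up to the factor 1/2 (unit masses)."""
    return sum(v * v for v in velocities)


def flatness(velocities):
    """Ratio of the fourth moment to the squared second moment (3 for a centered Gaussian)."""
    n = len(velocities)
    m2 = sum(v**2 for v in velocities) / n
    m4 = sum(v**4 for v in velocities) / n
    return m4 / m2**2


rng = random.Random(0)  # fixed seed for reproducibility
n_particles = 2000

# Far-from-equilibrium initial data: every velocity is +1 or -1.
velocities = [rng.choice((-1.0, 1.0)) for _ in range(n_particles)]
energy_before = kinetic_energy(velocities)
flatness_before = flatness(velocities)

# Each particle undergoes about 40 collisions on average.
for _ in range(20 * n_particles):
    kac_collision_step(velocities, rng)

energy_after = kinetic_energy(velocities)
flatness_after = flatness(velocities)

print(f"energy:   {energy_before:.6f} -> {energy_after:.6f}")
print(f"flatness: {flatness_before:.3f} -> {flatness_after:.3f}")
```

Energy is conserved by each collision, while the empirical velocity distribution relaxes towards a Gaussian, as its flatness indicates. In such a model the randomness is built into the collisions, whereas for hard spheres it comes only from the initial data.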

4 Conclusion

Lanford’s theorem states that, over a short time, the empirical measure of a hard sphere gas converges to the solution to the Boltzmann equation (Theorem 2.1). This result is complemented by the analysis of fluctuations (Theorem 3.2) and large deviations (Section 3.4) of the empirical measure. These stochastic corrections are proved on times of the same order of magnitude as in Lanford’s theorem. The strategy of the proof consists in tracking how the randomness of the initial measure is transported by the hard sphere dynamics, and how the instability of this dynamics converts, in the low density asymptotics, the initial randomness into a dynamical space-time white noise.

The convergence time is limited because the current proof gives only rough estimates of the dynamical correlations, obtained by considering that collisions only destroy the initial chaos by forming larger and larger aggregates of correlated particles. An important step forward in the mathematical understanding of these models would be to show that the disorder is not simply inherited from the initial data, but can be regenerated by the mixing properties of the dynamics.

A more favorable framework for controlling the long time evolution is to consider an initial measure obtained as a perturbation of an equilibrium measure. The stationarity of the equilibrium measure then becomes a key tool to control dynamical correlations. The simplest case consists in perturbing only one particle, called the tagged particle, and studying its evolution over time. In [3 T. Bodineau, I. Gallagher and L. Saint-Raymond, The Brownian motion as the limit of a deterministic system of hard-spheres. Invent. Math. 203, 493–553 (2016) ], it is established that this particle follows a Brownian motion for large times. Another case where we know how to use the invariant measure is the study of the fluctuation field at equilibrium. In a series of recent works [7 T. Bodineau, I. Gallagher, L. Saint-Raymond and S. Simonella, Long-time correlations for a hard-sphere gas at equilibrium, preprint, arXiv:2012.03813; to appear in Comm. Pure and Appl. Math. (2023) , 5 T. Bodineau, I. Gallagher, L. Saint-Raymond and S. Simonella, Long-time derivation at equilibrium of the fluctuating Boltzmann equation, preprint, arXiv:2201.04514 (2022) ], Theorem 3.2 has been generalized to arbitrarily large, and even slightly divergent, kinetic times. This makes it possible, in particular, to derive the fluctuating hydrodynamic Stokes–Fourier equations.

Acknowledgements. The EMS Magazine thanks La Gazette des Mathématiciens for authorization to republish this text, which is an English translation of the paper entitled “Sur la dynamique des gaz dilués” and published in [La Gazette des Mathématiciens, Number G174, October 2022]. The main part of the text is extracted from an article published in the ICM 2022 proceedings. The authors warmly thank Stéphane Baseilhac for his attentive proofreading and his numerous suggestions. They are also grateful to J.-B. Bru and M. Gellrich Pedra for the English translation of the original paper.

References

- N. Ayi, From Newton’s law to the linear Boltzmann equation without cut-off. Comm. Math. Phys. 350, 1219–1274 (2017)

- G. Basile, D. Benedetto, L. Bertini and C. Orrieri, Large deviations for Kac-like walks. J. Stat. Phys. 184, Paper No. 10 (2021)

- T. Bodineau, I. Gallagher and L. Saint-Raymond, The Brownian motion as the limit of a deterministic system of hard-spheres. Invent. Math. 203, 493–553 (2016)

- T. Bodineau, I. Gallagher, L. Saint-Raymond and S. Simonella, Fluctuation theory in the Boltzmann–Grad limit. J. Stat. Phys. 180, 873–895 (2020)

- T. Bodineau, I. Gallagher, L. Saint-Raymond and S. Simonella, Long-time derivation at equilibrium of the fluctuating Boltzmann equation, preprint, arXiv:2201.04514 (2022)

- T. Bodineau, I. Gallagher, L. Saint-Raymond and S. Simonella, Statistical dynamics of a hard sphere gas: Fluctuating Boltzmann equation and large deviations, preprint, arXiv:2008.10403; to appear in Ann. Math. (2023)

- T. Bodineau, I. Gallagher, L. Saint-Raymond and S. Simonella, Long-time correlations for a hard-sphere gas at equilibrium, preprint, arXiv:2012.03813; to appear in Comm. Pure and Appl. Math. (2023)

- L. Boltzmann, Weitere Studien über das Wärmegleichgewicht unter Gasmolecülen. Wien. Ber. 66, 275–370 (1872)

- F. Bouchet, Is the Boltzmann equation reversible? A large deviation perspective on the irreversibility paradox. J. Stat. Phys. 181, 515–550 (2020)

- C. Cercignani, V. I. Gerasimenko and D. Y. Petrina, Many-particle dynamics and kinetic equations. Mathematics and its Applications 420, Kluwer Academic Publishers Group, Dordrecht (1997)

- C. Cercignani, R. Illner and M. Pulvirenti, The mathematical theory of dilute gases. Applied Mathematical Sciences 106, Springer, New York (1994)

- T. Dolmaire, About Lanford’s theorem in the half-space with specular reflection. Kinet. Relat. Models 16, 207–268 (2023)

- I. Gallagher, L. Saint-Raymond and B. Texier, From Newton to Boltzmann: Hard spheres and short-range potentials. Zurich Lectures in Advanced Mathematics, EMS, Zürich (2013)

- H. Grad, Principles of the kinetic theory of gases. Handbuch der Physik 12, Thermodynamik der Gase, Springer, Berlin, 205–294 (1958)

- D. Heydecker, Large deviations of Kac’s conservative particle system and energy non-conserving solutions to the Boltzmann equation: A counterexample to the predicted rate function, preprint, arXiv:2103.14550 (2021)

- O. E. Lanford, III, Time evolution of large classical systems. In Dynamical systems, theory and applications (Rencontres, Battelle Res. Inst., Seattle, 1974), Lecture Notes in Phys. 38, Springer, Berlin, 1–111 (1975)

- C. Le Bihan, Boltzmann–Grad limit of a hard sphere system in a box with isotropic boundary conditions. Discrete Contin. Dyn. Syst. 42, 1903–1932 (2022)

- C. Léonard, On large deviations for particle systems associated with spatially homogeneous Boltzmann type equations. Probab. Theory Related Fields 101, 1–44 (1995)

- J. Logan and M. Kac, Fluctuations and the Boltzmann equation. I. Phys. Rev. A 13, 458–470 (1976)

- S. Meleard, Convergence of the fluctuations for interacting diffusions with jumps associated with Boltzmann equations. Stochastics Stochastics Rep. 63, 195–225 (1998)

- M. Pulvirenti, C. Saffirio and S. Simonella, On the validity of the Boltzmann equation for short range potentials. Rev. Math. Phys. 26, Article ID 1450001 (2014)

- M. Pulvirenti and S. Simonella, The Boltzmann–Grad limit of a hard sphere system: Analysis of the correlation error. Invent. Math. 207, 1135–1237 (2017)

- F. Rezakhanlou, Large deviations from a kinetic limit. Ann. Probab. 26, 1259–1340 (1998)

- F. Rezakhanlou, Kinetic limits for interacting particle systems. In Entropy methods for the Boltzmann equation, Lecture Notes in Math. 1916, Springer, Berlin, 71–105 (2008)

- H. Spohn, Large scale dynamics of interacting particles. Texts and Monographs in Physics, Springer, Berlin (2012)

Cite this article

Thierry Bodineau, Isabelle Gallagher, Laure Saint-Raymond, Sergio Simonella, On the dynamics of dilute gases. Eur. Math. Soc. Mag. 128 (2023), pp. 13–22

DOI 10.4171/MAG/124