Professor Sullivan, first we want to congratulate you on being awarded the Abel Prize for 2022 for your groundbreaking contributions to topology in its broadest sense and, in particular, its algebraic, geometric and dynamical aspects. You will receive the Abel Prize from His Majesty the King of Norway tomorrow.

Thank you!

You have worked in very many different fields, and, actually, your supervisor, William Browder, described you as sort of an intellectual vacuum cleaner. But it seems that you always had a guiding principle for what you are doing. If mathematics rests upon two pillars: space and number, you have been partial to space to the extent that you want to replace number by space.

A part of this quest of yours is the question: “What is a manifold?” And that is perhaps a good place to start before we continue on your journey, as you say, from the outside to the inside. Intuitively, what is a manifold?

It is space, expressed logically in terms of a set of points.

It’s space, but it’s sort of a special space, isn’t it?

No. The idea of space is that you can move things around. There isn’t an invisible wall that makes you stop here, but you can move around. Any object which is locally like that is called a manifold. Space itself is an intuitive word that we all know. But there is an actual concept called manifold, which is the logical version of that intuitive concept. It’s an attractive notion when you first learn about it as a math student. And the first math theory about these manifolds that I learned about was sort of strange.

Tell us!

You attached to such an object, which you didn’t really describe in terms of its logical definition, some other objects which were very abstract and part of algebraic topology. And when you had enough of those with the right conditions, you could build the manifold.

So you could actually reconstruct the manifold from these abstract objects?

You could build it up to equivalence. But you didn’t really construct the points of the manifold in a canonical way. So, it has no points. It was like a black box. The information is stored there. And that is where numbers come in; all these concepts are based on numbers, the algebra, whereas the actual texture of space is not there.

Abel Prize laureate Dennis Sullivan receives the Abel Prize from His Majesty, King Harald of Norway. © Naina Helén Jåma / The Abel Prize

©All rights reserved.

Is it like the recipe for the cake versus the cake?

Yeah, I’d say it’s exactly like that; it’s a good idea. You must have prepared that?

No, we did not!

It’s a very good interpretation. It’s like a cake with no edges or layers. It’s just this delicious cake going on forever, right?

And you really want to get at the cake?

Well, that is what you are attracted to, the idea of space and its texture. And then it turned out that every time I would ask a professor a question, he gave me an answer that was in terms of number, which is algebraic topology and homotopy theory. So I had to learn that, as it were. I adjusted the geometrical problem so that it fitted with the numbers, so to speak. You know, some goals are not achievable and some are within reach, so I adjusted to get the ones within reach during that period.

Is what you describe here more or less what is called surgery, where you actually build the space according to the prescription?

Right, you have a prescription of the information: how many holes it has, how many handles etc., and you build an actual manifold with that description. And surgery allows you to build it. That was a powerful technique. Actually, it was a secondary technique following Thom’s cobordism theory, which was very influential.

But the important distinction here is between what can be deformed and pushed, well, in homotopy theory, in the homotopy type – to use technical jargon – as opposed to the actual manifold?

Right. First, it’s interesting that the classification of closed manifolds is an interesting subject. It’s not, a priori, clear that it will be so, but it’s extremely interesting classifying manifolds that are closed. You know, no boundary, not going off to infinity.

Classically, one knows the classification for surfaces. That goes back to Abel and Riemann. They figured that out. The sphere, the genus number, abelian functions, abelian differentials, and so on. But already Poincaré discovered that in dimension three it’s much more complex. And then it gets more and more complex as the dimension goes up.

It was kind of interesting that there is enough “number machinery”, so to speak, to understand spaces of dimension five and higher. That was an amazing development, basically due to Thom, I would say, who started this, and surgery was completing this story. And I got in on the last big boat heading to … wherever.

With the surgery exact sequence?

Kind of. Browder – you mentioned Browder – he was presenting this theory. And it was in a complicated form. You could sort of change it around a little bit and get it simpler. And then you could see from the changed picture which areas could be developed completely. The smooth structure is still open, in some sense, up to finiteness. I mean, we know all the infinite part for the smooth structures.

And that is an area where the previous Abel Prize winner Milnor had a huge impact.

Yeah, on that one, certainly. His 1963 paper with Kervaire was my math bible.

This actually leads us to your thesis in Princeton. Princeton must have been a fascinating place to be at that time?

Absolutely! All these famous people around with their expertise.

So you could just ask them?

Yeah, you could just ask them every day at tea, you didn’t have to make an appointment, because they all came to tea. You could ask them anything you wanted to.

There is a cute story about when you are closing in on your thesis, and you had a discussion with Milnor. Could you tell it?

Well, I had this sequence of steps, and if I could do them all, I could solve what I wanted. But each step had a clear surgery part and then it had a Milnor exotic sphere part. I didn’t know how they were linked together, so I went into his office, because I had a serious question. At tea you could ask any question, but this was serious. He looked at it and said: “Why don’t you just forget the Milnor part”. He didn’t say it that way, but something like: “Why don’t you just forget the exotic sphere part, and just do the first part.” And this worked for piecewise differentiable manifolds.

So this is the combinatorial manifold case?

Yes, I call them combinatorial manifolds, or PL manifolds, or piecewise differentiable manifolds. You allow the differential structure to break, but you keep the combinatorial structure. And I said: “Thank you Professor Milnor”, but I thought: “Oh, these piecewise linear manifolds, I like smooth manifolds, but these are just piecewise linear.” And I thought about it: “Wait a minute, if I do that, I know the structure completely! That is, I know the local structure completely.” And I just had to figure out the global structure, which took another year, but then it solved the whole problem.

And you asked your thesis advisor Browder: “Can this go into my thesis?”

Well, yeah, that’s right. I asked him: “I have this sequence of steps, which have these coefficients, and if you can do all the steps you get this result. Can that be a part of my thesis?” And he said: “Well, I guess that is your thesis.”

And that answered a long-standing question that people had been wondering about for quite a while? We are thinking about the so-called Hauptvermutung.

That was actually the driving engine. There was this more famous question about whether the combinatorial structure was uniquely determined by the topological structure. And that was called the Hauptvermutung. And it turned out that whenever I could understand the theory of what I was discussing completely, I could use the technique of Novikov to prove my list of numbers were zero.

The next eight months were like a race, it was really a race against reality. Every time I could understand this global theory better, I could prove the Hauptvermutung. It turned out that I could prove everything was zero except one little thing in dimension four that wasn’t zero, but had order two, and that was it. A few years later they actually found counterexamples in that little place there. So I proved as much as one could.

What you call “that little place” is an obstruction group in dimension four, right?

Yes, that was my obstruction group in dimension four. In a sense, that isn’t the way I work. Well, I would love it if I could solve a well-known question, but I really like understanding things better. So, I actually like the theory that says that these are all the piecewise linear manifolds in a given homotopy type, and you can compute these numbers and then you know which one you have, and that is a complete discussion. It turns out that 99 out of 100 of those numbers are also topological invariants. So you get this corollary. People today only know the corollary. And now they even have a simpler proof, so everything I have done is forgotten! So I’m glad I get this Prize so I can talk about it again.

Immediately from there you move on and do other amazing stuff. You discover that the Galois group has important consequences for the study of manifolds. Indeed, you solve a famous conjecture that way. Could you elaborate on that, focusing on the manifold aspect of it? Specifically, how come you have a Galois action on manifolds? It doesn’t seem reasonable at all.

I would say that it’s still not understood. In other words, there was this list of invariants – I’m simplifying it a little bit – but a big part of that list could be collected into one element in K-theory. And K-theory has this symmetry, the Adams operations. One knows that when you look at the roots of unity in the complex numbers, that is if you adjoin the roots of unity to the rational numbers and form that field, that gives you the abelian part of the Galois group. And the symmetry of those fields, more precisely, you have to complete the manifold theory – it’s technically a little strange to topologists and geometers – you complete the number aspect of manifolds so to speak, and that has symmetry exactly the abelian part of the big Galois group. So we have Abel and Galois together.

And that symmetry exists in K-theory, so it acts on the invariants of manifolds. So, the manifolds were just given the information, the homotopy type and these other numerical invariants, and the Galois group acted on these invariants, and therefore it acted on the manifolds. That is how it came about. It doesn’t come about in a natural explicit geometric way, and that gave rise to this Jugendtraum, or dream of youth, a term coined by Kronecker in a different context. This Jugendtraum, explaining this in elementary terms, is still open.

How can we view manifolds? As we would view algebraic varieties?

It’s a little strange, you see. If you think of usual algebraic varieties with real numbers and complex numbers, they are normal topological spaces. And this topology comes from the topology of complex numbers or the real numbers, right? The Galois group doesn’t preserve that topology. A lesson from algebraic geometry is that things that are defined in terms of integers are best understood by looking at each prime and at the real completion, and viewing the information that way. The “intersection” of all this information gives the integral information. It’s kind of sophisticated. This was actually too much for my topological colleagues. They didn’t want to hear about it. The geometric topologists, not the homotopy theorists. The homotopy theorists – they loved it!
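A toy arithmetic analogue of this local-to-global principle may help; it is only an illustration, not the localization-and-completion machinery Sullivan applied to spaces. The sketch assumes that residues modulo a few prime powers stand in for the information "at each prime" and that a rough real estimate stands in for the real completion. The helper names `crt` and `reconstruct` are hypothetical.

```python
from math import prod

def crt(residues, moduli):
    """Chinese remainder theorem: combine n mod m_i (pairwise coprime m_i) into n mod M."""
    M = prod(moduli)
    x = 0
    for r, m in zip(residues, moduli):
        Mi = M // m
        # pow(Mi, -1, m) is the inverse of Mi modulo m (Python 3.8+).
        x += r * Mi * pow(Mi, -1, m)
    return x % M, M

def reconstruct(residues, moduli, approx):
    """Pick the unique integer with these residues lying within M/2 of the real estimate."""
    x, M = crt(residues, moduli)
    return x + round((approx - x) / M) * M

moduli = [8, 9, 5, 7]  # hypothetical "local" precision at the primes 2, 3, 5, 7
n = -1000
print(reconstruct([n % m for m in moduli], moduli, approx=-990.0))  # -1000
```

Neither kind of information suffices alone: the residues only fix $n$ modulo $2520$, and the real estimate only fixes $n$ to within an interval; together they determine the integer.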

So, you are assembling all this information, one prime at a time, plus the rational information?

Yeah, for a manifold the finite prime part splits into the prime two and all the odd primes. Individual odd primes behave the same way. Because of the Poincaré duality, it’s like a quadratic form. It’s well known that quadratic forms behave differently at the prime two than at the odd primes.

Could we for a moment segue into a different topic, though one still associated with the name Poincaré? We are thinking of the term Poincaré moment, which refers to the experience Poincaré himself described where he in a flash saw the solution to a problem he had worked on for months. Have you had such Poincaré moments?

I search for them all the time, but they come very seldom.

Could you tell us about the fascinating experience you had when you were about to take the oral exam as part of your PhD?

Oh, yeah, yes, right. There is a little book by Milnor called Topology from the Differentiable Viewpoint. About how you could do all of the usual things, you know, the Königsberg bridge problem, continuing to Betti numbers, etc., etc. You could do all that more geometrically using smooth functions and regular values, preimages of the nice points, submanifolds and stuff like that. That was Milnor’s beautiful description of the Thom theory from 1953, okay? So, we were studying that for the orals, and I knew it forwards and backwards, I could answer any question.

I was walking in to take the exam, and thought: “Let me look at it one more time before the exam”. I went to the library, opened the book, looked at it. It’s a small book, it’s got ten theorems in it. But still, there are a lot of steps, and I was looking at it one more time, and then this basic picture appeared to me: You have a map to something like a sphere, and you take the preimage of a point – which is what is called a regular value – you get a nice submanifold by the Implicit Function Theorem. You get local coordinates, and then the neighborhood sort of funnels down, like you would push a slinky down and flatten it out completely. But this was saying something about the global map: There is the preimage of one point, and then I noticed: “Oh, wait a minute, the preimage of one point has all the information.” The complement may be very complicated in the domain, but the complement of a point or a disk in the image sphere is contractible. It’s like taking a point out of a balloon, it contracts, it’s contractible! So you can extend the mapping to the contractible part uniquely. Any choice you make will be related by deformation to any other choice.
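The simplest case of this picture is a map from the circle to itself: the preimage of a regular value, with each point counted $+1$ or $-1$ according to orientation, recovers the degree, which classifies the map up to deformation. The sketch below is a minimal illustration under stated assumptions, not Milnor's construction: the map is given by a lift on $[0, 2\pi]$ with `lift(2π) - lift(0) = 2π·degree`, preimages are located by sampling, and the hypothetical test map $2t + 1.5\sin 3t$ was chosen because it folds back on itself.

```python
import math

def preimage_counts(lift, y, samples=100_000):
    """Count preimages of the angle y under a circle map given by its lift.

    A preimage of y is a t with lift(t) = y + 2*pi*k for some integer k. Each one
    is found by sampling and weighted +1 or -1 according to orientation.
    Returns (signed count, unsigned count).
    """
    two_pi = 2 * math.pi
    signed = unsigned = 0
    # Index k of the highest level y + 2*pi*k lying at or below the lift.
    prev = math.floor((lift(0.0) - y) / two_pi)
    for i in range(1, samples + 1):
        cur = math.floor((lift(two_pi * i / samples) - y) / two_pi)
        step = cur - prev  # +1 per upward crossing, -1 per downward crossing
        signed += step
        unsigned += abs(step)
        prev = cur
    return signed, unsigned

def folded_map(t):
    """Hypothetical example: a degree-2 circle map that folds back on itself."""
    return 2 * t + 1.5 * math.sin(3 * t)

for y in (0.5, 2.0, 4.0):
    print(y, preimage_counts(folded_map, y))  # the signed count is 2 every time
```

The raw number of preimages depends on the chosen point, but the signed count does not; in this one-dimensional setting, that signed count is all the information the preimage of one point carries.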

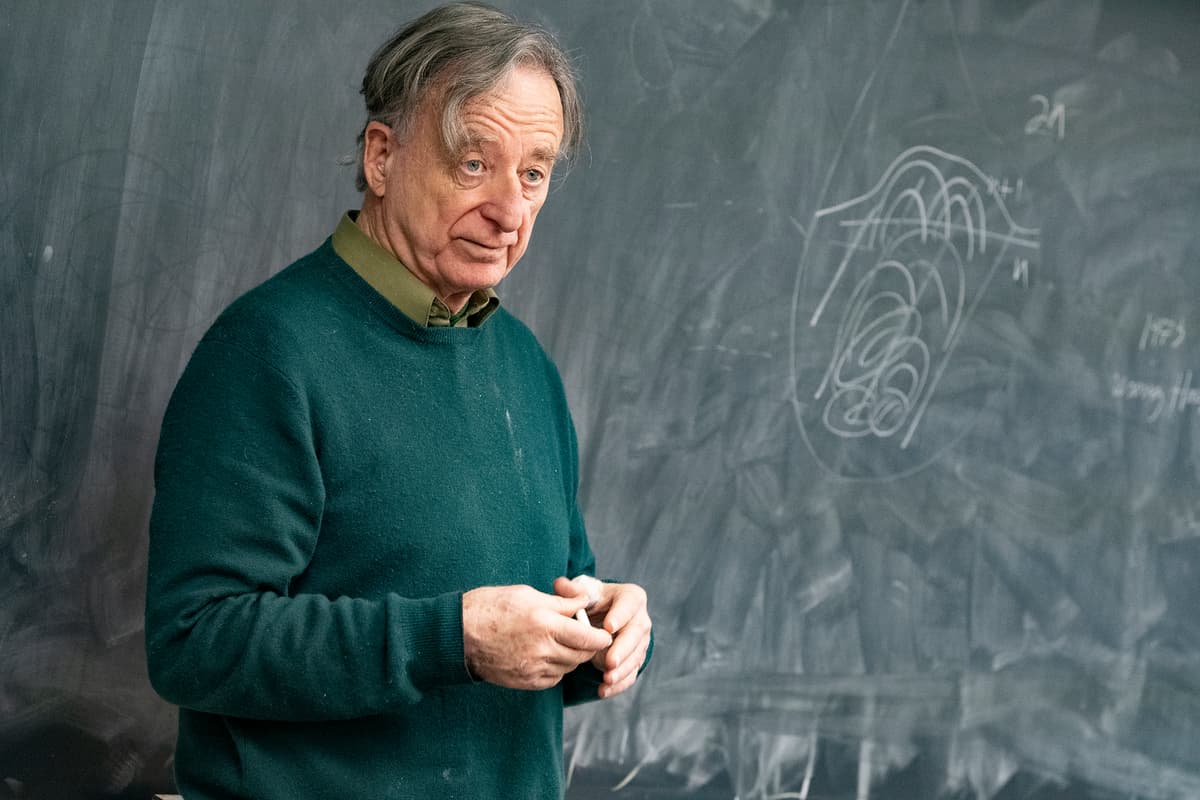

Dennis Parnell Sullivan – 2022 Abel Prize laureate. © John Griffin / Stony Brook University / Abel Prize


Suddenly the whole book, or the whole theory, became clear. It just follows from this picture, from this slinky picture, with the logical remark that the complement here is contractible, so there is no more information. That is just pure logic, plus this simple picture. The whole book fell away, the entire theory fell away. If I got amnesia but was left with that picture in my mind, I could reproduce the whole book and the whole theory. And then I thought: “This is what it means to understand mathematics”. I was a graduate student! So, I want to feel this again!

And have you?

Yes! However, it takes longer and longer.

Of your other main results in this area, is there any one that has such a picture in your mind, where you actually see the entire theory?

Well, I mean, basically this sequence of steps I was talking about, where you take preimages and use this picture: I kept using it. For example you know how a screwdriver works, it goes into the slot and you turn it. You can take apart this house, you know. I mean, you can do anything. You have to have a simple tool, you have to understand it, and then use it. Well, that wasn’t exactly a Poincaré moment.

The Poincaré moment I was thinking of, when you said that, was when he put his foot on the bus and he realized that the holomorphic bijections of the unit disk were the same as the symmetries or the congruences of non-Euclidean geometry. And that was a fantastic connection. He knew both things. But, in a sense, the connection is the moment. This largely dictated the next century, and all the work of Thurston and so on.

But you must have had a similar experience, when you proved the Adams conjecture. You’ve commented that it wasn’t really important that the Galois action corresponded to the Adams operations. Still, it must have been very important to you at the time when you were trying to solve the Adams conjecture, that they were the same. That must have been a revelation, that that actually could be true?

Well, it’s not my creation, it was Quillen’s observation that somehow these Adams operations, whatever they are, let’s just say they are some symmetries of something that relates to manifolds and space.

The symmetry is related to the fact that, when you are working in the field of algebra, you may assume that $p$ times anything is $0$, where $p$ is a prime, like $3$ times anything is zero, $3$ being the prime. There is an amazing fact that if you work, for example, with $3$ times something is $0$, and you take a number and you cube it, and you take another number and cube it, then if you add the two and then cube the sum of the two numbers, you get the same thing: $(a+b)^3 = a^3 + b^3$. This is because of what the binomial coefficient theorem says, that you get these $3a^2b$ and $3ab^2$ terms, but $3$ is zero, so you get $a^3$ and $b^3$. That shows that you have this symmetry in each of these prime worlds. So, you have this additional symmetry given by what is called the Frobenius automorphism. That is fantastic!
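The identity described here holds for every prime $p$: $(a+b)^p \equiv a^p + b^p \pmod p$, because each binomial coefficient $\binom{p}{k}$ with $0 < k < p$ is divisible by $p$. A brute-force check, with the hypothetical helper names `frobenius_is_additive` and `middle_binomials`:

```python
from math import comb

def frobenius_is_additive(n):
    """Check (a + b)**n == a**n + b**n for all residues a, b modulo n."""
    return all(pow(a + b, n, n) == (pow(a, n, n) + pow(b, n, n)) % n
               for a in range(n) for b in range(n))

def middle_binomials(n):
    """The binomial coefficients C(n, k) with 0 < k < n, i.e. the cross terms."""
    return [comb(n, k) for k in range(1, n)]

print(middle_binomials(3))                                   # [3, 3]
print([n for n in range(2, 12) if frobenius_is_additive(n)])  # [2, 3, 5, 7, 11]
```

Up to 11 only the primes pass. The check is not a primality test, though: Carmichael numbers such as 561 satisfy $a^n \equiv a \pmod n$ for every $a$, so they pass it as well.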

Quillen had already suggested that there is a relation between the Adams conjecture and Frobenius, but then that was a little too exotic for me. I wanted to use the answer to the Adams conjecture, I didn’t want to prove it. And then I heard – I hadn’t met him yet – that he wasn’t going to work on it, because he first had to learn 200 pages of Grothendieck and transfer it into his setting. Okay, he only wrote perfect papers, it had to be perfect, or else he didn’t write it.

It’s Quillen you are talking about, right?

Yes, it’s Quillen. Now I’m adding what I found out later, as I read more of his work: every paper is perfect. Perfect isn’t the right word, it’s optimal. You can’t do better. So, I heard about this, and I said: “Okay, I’m going to pretend that this is true, because Quillen made this connection, and he could have written the proof out.” And then I said: “But wait a minute, I can’t just pretend that this is true, I’ve got to prove it myself.” But if it’s true, it’s easier to prove. Because you know it’s true. It’s a topological theorem, so I just kept working on it.

I worked on it for six months, which in those times was a really long time because things were happening faster. I reduced it to something – it was equivalent to something – and then I tried for a long time to prove this something, but I couldn’t do it. And then: I remember sitting on the lawn, I remember exactly that moment, August 19, 1967. I had just driven up from Mexico with my family to Berkeley. I was going to spend two years there. I was sitting on the lawn of the house where we were staying for a few days until we got our own place, and I thought: “What has Quillen said about this?” He said: “Frobenius! algebraic symmetry! at the primes!” It turned out that it gave my condition immediately, and so I had a proof of the Adams conjecture.

© Scott Sanden, Gyro AS


In some sense that was a Poincaré moment. It took me a year to write out the details. There were different details, less forbidding than what Quillen had envisaged, so I was able to do it.

And that spawned the so-called MIT notes, which became widely circulated and famous?

That spawned the MIT notes, yeah. You have to first localize, then complete and then do all the related homotopy theory.

And then you moved on to the quasiconformal manifolds and Lipschitz conditions. How did that transition happen?

You sort of skipped about ten years … but we don’t have so many hours!

Yeah, we agreed to skip the rational homotopy theory, which really hurts, but …

Okay, but let me make one point about that. Algebraic geometry and stuff like that just does the finite primes. It turns out that all the information in this algebraic topology which is determined at the primes, has this extra symmetry in it, which is related to algebraic geometry. But then I thought: “Wait a minute, what about the infinite prime, the archimedean place?” I didn’t know any analysis, or anything like that. “But, maybe it has to do with differential forms?” And it turned out that it did. It’s sort of like algebra does this part, analysis and geometry do this part.

Which opens all of the rational theory up to analysis.

Right!

And you then prove that in many situations the cohomology determines the entire rational homotopy type, for example for Kähler manifolds.

Yeah, it had nice corollaries. The idea was to express the information in terms that are natural. It’s natural to express the information of the infinite part, rational numbers, real numbers, in terms of differential forms, which is natural for analysis and geometry.

© John Griffin / Stony Brook University / Abel Prize


So you have this information for the primes with the Galois action and you have analysis on the differential forms for the infinite piece?

All of which is related to topology, right. But then, to go on, all of this was frustrating, because it was outside the manifold. They were sort of invariants. I liked facts about things inside the manifold. Foliations or dynamical systems and fractal sets, these things are inside the manifold and they are constructed by infinite processes inside the manifold. So I started to learn about these infinite processes.

That began the dynamics part. It was sort of like just following this interest inside, there was no logical reason. I was starting over as a graduate student again, I’d say. It turns out that the best way to understand the holomorphic part of manifold theory in dimension two is not through the smooth structure, but in terms of the quasiconformal structure. That is the best way to understand dimension two. And it’s amenable to certain infinite fractal processes. Anyway, it was natural to leave this highly sophisticated algebraic viewpoint and go back to the original interest in manifolds, like dynamics – and processes like dynamics – inside the manifold. I mean, physical processes take place in space, so this is all about everything else in science. You know, even medicine; your body has tubes with fluids and so on.

Let’s talk a little more about these dynamical systems and their importance in studying manifolds. Perhaps we could start with something very concrete, namely Denjoy’s answer in the 1930s to a question posed by Poincaré about circle diffeomorphisms without periodic points. This was taken up and extended enormously in the ’70s by Michel Herman and his student Yoccoz, answering, among other things, a question posed by Arnold. With this as background, could we ask you how this theory impacted your desire, so to speak, to understand things inside the manifold? This is in contrast to the picture you give of manifolds locally being like a puddle of milk looked at from the outside – there isn’t much personality.

Let me answer this by first posing the question: “Why is it interesting to know about manifolds?” It’s all about space. Okay, we have done the number aspect, but why is it really interesting? Well, all the processes that we see go on in space. All that stuff that is described by various other fields, ODEs, partial differential equations, functional analysis, that’s all part of describing the processes. It’s also combinatorics, computer algorithms. All that is about processes in time, but all these processes in time go on in space.

I didn’t know all that then, but I wanted to know more about things going on inside manifolds. A little dynamical system could create an interesting fractal set inside the manifold. And if you perturb that dynamical system, that fractal set was still there. It was structurally stable. So I had to learn about things such as Cantor sets, fractals and stuff. So I started and I’d say it was almost a ten year period of time before I got to quasiconformal mappings.

This was at the end of the ’70s. I was thinking about dynamics and foliations, like this idea of an onion that is foliated. That is a very attractive picture, and these were interesting objects. Thurston had arrived on the scene, and he blew everybody’s mind away, including mine. Immodestly, I have to say that I was smart enough to appreciate that I was watching Mozart playing the piano. I mean, not everyone did, because Thurston wasn’t so communicative.

But he was one of your heroes along with Thom, wasn’t he?

Yes, but he was younger than I was, he was my younger brother hero. All this fitted with this desire of mine to go inside the manifolds, and understand more geometric things. So I started studying dynamics, and I learned about the Smale school. And then, in France, I started going to Michel Herman’s lectures, and I met Yoccoz, his student. Michel Herman was working on the problem you alluded to in your question. It happened like this: Denjoy died in 1974, and Michel Herman was working on his papers for the French Mathematical Society. Herman started to talk about the Denjoy argument. So, I learned that argument. And then Herman started answering these questions, refining what Denjoy had done. You have to remember that Poincaré was doing celestial mechanics, in particular, the three body problem. He came up with this question that was answered by Denjoy, who did this a couple of decades after Poincaré died, actually.

This is all about one-dimensional manifolds. It turns out that they are actually among the hardest from this interior point of view. They are very difficult. Herman analyzed the very fine structure of diffeomorphisms of the circle, and we were learning as he was producing results. I was just intrigued by it. For example, there is a beautiful example involving the golden ratio number and Fibonacci numbers, and that intrigued me.

And this is while you are at IHÉS?

At IHÉS, yes. He was at Orsay, which was just a walk across the valley of the Yvette. The interesting thing about the real line is that there are three kinds of distortions that behave algebraically very nicely. There’s the metric distance distortion, the ratio distance distortion and the cross ratio distortion; corresponding respectively to metric geometry, affine geometry and projective geometry. And there is the usual chain rule. You take the logarithm of that, it’s a nice formula under composition, and now you can do two other compositions with these higher distortions. Those were the key things that I used to explain Herman’s work to myself.
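The three distortions can be made concrete through the classical invariants behind them: isometries of the line preserve lengths, affine maps preserve the ratio of the lengths of two intervals, and Möbius (projective) maps preserve the cross ratio of four points. In the minimal sketch below, a "distortion" is assumed to be the logarithm of the factor by which a map changes the relevant invariant. It vanishes for maps of the matching geometry and, like $\log f'$ in the chain rule, adds up under composition. The helper names and sample maps are hypothetical choices, and this is not Herman's or Sullivan's actual distortion estimates for iterates.

```python
import math

def length(a, b):
    """Length of the interval [a, b]: preserved by isometries (metric geometry)."""
    return b - a

def ratio(a, b, c):
    """(b - a) / (c - a): preserved by affine maps (affine geometry)."""
    return (b - a) / (c - a)

def cross_ratio(a, b, c, d):
    """Cross ratio of four points: preserved by Mobius maps (projective geometry)."""
    return ((a - c) * (b - d)) / ((a - d) * (b - c))

def distortion(invariant, f, pts):
    """log of the factor by which the map f changes `invariant` evaluated at pts."""
    return math.log(invariant(*map(f, pts)) / invariant(*pts))

def affine(x):
    return 3.0 * x - 2.0

def mobius(x):
    # Increasing for x > -3, since its derivative is 5 / (x + 3)**2.
    return (2.0 * x + 1.0) / (x + 3.0)

def cube(x):
    return x ** 3

pts = (0.1, 0.5, 1.2, 2.0)
print(distortion(length, affine, pts[:2]))   # log 3: affine maps change lengths
print(distortion(ratio, affine, pts[:3]))    # ~0: ...but not ratios
print(distortion(ratio, mobius, pts[:3]))    # nonzero: Mobius maps change ratios
print(distortion(cross_ratio, mobius, pts))  # ~0: ...but not cross ratios

# Additivity under composition, the analogue of log (f o g)' = log f'(g) + log g':
lhs = distortion(cross_ratio, lambda x: cube(mobius(x)), pts)
rhs = (distortion(cross_ratio, cube, tuple(map(mobius, pts)))
       + distortion(cross_ratio, mobius, pts))
print(math.isclose(lhs, rhs))                # True
```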

Michel Herman’s theorem took a whole volume of Publications Mathématiques de l’IHÉS, and I wanted to get it down to something like just a few key moments of understanding. And you could – after a couple of years thinking about it – get it down to something you could tell on the phone to somebody. That was my challenge: Find a proof that I could tell to somebody on the phone. You have to understand it, you can’t write down a lot of formulas and calculate and stuff like that, you have to understand it. It was just like that, the desire to understand, and it was just like fun, you know.

But then, in ’82, I heard that physicists had discovered something startling related to phase change. You know, the water gets colder and colder, and suddenly it forms this crystal, right? It’s when all this rigidity happens. That is called phase change. There are a lot of situations where that happens in physics. It turned out that physicists had calculated one such in a dynamics example, where you adjust a certain parameter to the freezing point, I’d say, and then you get this incredible thing: It could have depended on infinitely many parameters, and it doesn’t depend on anything at all, it’s universal!

That was what Feigenbaum first discovered, right?

Feigenbaum discovered that there was this rate (from the other direction). Then other physicists discovered – and Feigenbaum too, actually; he hadn’t communicated it as well as the other ones – that it was this intrinsic geometry, like a crystal, I’d say.
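The rate in question is usually stated as follows: along a period-doubling cascade, the parameter intervals between successive doublings shrink by a factor tending to a universal constant $\delta \approx 4.669$. A minimal numerical sketch, assuming the quadratic family $x \mapsto a - x^2$. It locates the "superstable" parameters, where the critical point $0$ is periodic with period $2^i$, by Newton's method, starting from an extrapolated guess. This is a standard numerical recipe, not Feigenbaum's original computation and certainly not a proof. The function name and default arguments are hypothetical.

```python
def feigenbaum_delta(levels=11, newton_steps=10):
    """Estimate Feigenbaum's delta from superstable parameters of x -> a - x**2."""
    a2, a1 = 0.0, 1.0  # superstable parameters for periods 1 and 2
    d = 3.2            # initial guess for the ratio, used only to extrapolate
    for i in range(2, levels + 1):
        a = a1 + (a1 - a2) / d  # extrapolated guess for the period-2**i parameter
        for _ in range(newton_steps):
            x, dx = 0.0, 0.0   # orbit of the critical point 0 and its derivative in a
            for _ in range(1 << i):
                dx = 1.0 - 2.0 * x * dx  # d/da of a - x**2, using the old x
                x = a - x * x
            a -= x / dx  # Newton step toward x_{2**i}(a) = 0
        d = (a1 - a2) / (a - a1)
        a2, a1 = a1, a
    return d

print(feigenbaum_delta())  # close to 4.669
```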

What was interesting about this for me was that there were not enough techniques available to prove this at the time. It was numerically calculated. You can take this formula and that formula, and do this infinite process, calculate and – bingo! The Hausdorff dimension is , or something like that. So, here is a theorem that is true and it is precisely formulated. True with quotes, because it was numerically true. The available techniques weren’t enough to prove it. It turned out that you just had to add three more things to the Michel Herman and Yoccoz stuff, and then you could prove it. But it took eight years.

The idea was, I could stop whatever I was doing, and just work on this, there wouldn’t be any counterexamples, you know. And a proof would need new math.

And you were the one that came up with a proof?

Yeah, I found it and it took eight years.

And that was in ’82?

It was in ’90. It was ’82 when I heard about it.

And in the meantime …

… in the meantime? I was just working on this. There might be other things that appeared in print, but I wasn’t working on anything else.

For instance the non-wandering-domain theorem?

No, that is ’81.

It was published in ’85?

No no no, that was already over. I was in quasiconformal mappings; Ahlfors and Bers’ theory goes into dynamics. That was already fait accompli by 1980.

That must have been very inspirational, getting this result about non-wandering domains.

It was sort of obvious. It was obvious from the understanding.

But it wasn’t obvious to Fatou.

No, but he didn’t have this theory of quasiconformal mappings, this deformation theory.

It must have been very satisfactory for you to prove that?

Well, no it’s not, no no, you have misread me. These prizes and stuff are nice, but that’s not the point. It’s not the point to solve a problem, the point is to understand. And by this point, by the time you understood what Ahlfors and Bers were doing, it was like a Poincaré moment, where you say: “This theory here could be very useful in this other theory”. These are disjoint universes: you take this Fatou–Julia thing and just transfer the technique over.

Are you now talking about your dictionary?

That is the first entry of my dictionary, right.

In the paper where you prove the non-wandering domain you state the dictionary in the introduction. But do you use your dictionary in order to prove, say, the non-wandering result?

I do. There is something called the Ahlfors finiteness theorem, and you take what makes that work, and you restructure it over in this other domain. It was really using the comparison, the correspondence.

The non-wandering result, the Fatou theorem, corresponds to a known theorem in this Kleinian group category. It’s about the idea of understanding, not the names, not what field it is, but what is the math idea. The math idea is the same here and here.

Is this like you were telling us a moment ago, that once you know something is true, it’s way easier to prove it? Was the dictionary some sort of guidance in that respect – you knew what would be true?

No, it’s like when you arrange a party: you have to have enough drinks, enough food. I mean, you have to have enough stuff. You have to accommodate the correspondence. In retrospect you can say that the Fatou problem corresponds to something known over here, in Ahlfors and Bers, okay?

The underlying math is the same, and that is satisfying. But it was so obvious, it wasn’t exciting. The idea is, if you think in terms of structures, the structure here and the structure there were the same, two examples, the same structure.

So we were talking about your dictionary between the Kleinian groups and quadratic or complex dynamics, if you like, right?

That is one item in the dictionary. The dictionary says: “For every item here, there should be a corresponding item here,” because the basic elements of the two universes are the same. In fact, I once introduced Bers to Mostow at a conference. Bers asked: “Why are you introducing us? We’ve known each other for years, we’re close friends, but we never talk math.” He said it proudly. I said: “Well, I have this one theorem. If you do this, it is Mostow’s theorem; if you do this, it is your theorem.”

How did they react to that?

You know, people are in their comfortable world, it’s already rich and beautiful, they are happy there. I’m not like that, when I start to understand something, I start wanting to move sideways, somehow.

So, you have the dictionary and what you’re telling us is that the underlying mathematics of the two things are the same. But not for any particular reason; it’s just the same? It occasionally happens that you have two different mathematical problems, and the way you handle them, or the way their combinatorics work is just the same, for no apparent reason.

No. The question is: “what are the basic elements that are involved in the mathematics, in each situation?” In this case there is dynamics which has a certain form actually, a technical form called hyperfiniteness, related to von Neumann algebras, and also it has to do with Riemann’s ideas of deforming the complex structure. Okay, so those are the two ideas.

There is an underlying complex structure that is preserved by the dynamics. These are called holomorphic dynamical systems. This technique can be used in the entire field. But before this happened there was a field called Fatou–Julia theory and one separate field involving Poincaré limit sets and domains of discontinuity and so on. These were two different fields. This was occupied by complex analysts, and this was occupied, in modern times, by dynamical systems people. The basic elements of the underlying discussion were the same. Every advance here should correspond to something over there.

It’s just to look at things in simple terms, without the words. I don’t let my graduate students use names, they can’t use any proper name. They have to say, in an English sentence, in terms of basic concepts, like linear algebra or integers what the hell they are talking about. And I slap them around if they don’t, verbally.

You are known to be very broad in your interests in mathematics, and you see connections that other people do not see. But could we ask you a provocative question: is there some type of mathematics that you don’t like?

No, because there is this one tapestry, it’s all connected. It’s like the tapestry behind you, it goes all around. Everything is interesting to me.

And now the fluid dynamics enters. Can you tell us about that and why? Okay, you have a punchline in the end here, we won’t spoil it for you.

I forgot …

Oh, you promised to replace Newton’s calculus by Poincaré’s combinatorial topology.

Oh, right, of course yes, but that isn’t a punchline, that’s the theme. The idea is, yeah, so, quick history of math, right: We had the Greeks, they had their problems, more than two thousand years ago. Newton came along and he invented the calculus along with Leibniz. Suddenly, a bunch of problems the Greeks had could be solved. You can compute volumes of new things. Because with calculus you sort of ignore higher order error terms. Error of 0.1 decimal place, and errors of 0.001, you ignore all those, and you just try to get the first part. And then the formula is simple, and you get this beautiful theory.
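As a small worked illustration of what ignoring the higher-order error terms means (the function $x^3$ is chosen here only as an example), the exact difference quotient splits into a leading term plus terms that carry extra factors of $\varepsilon$:

```latex
\frac{(x+\varepsilon)^3 - x^3}{\varepsilon}
  = \frac{3x^2\varepsilon + 3x\varepsilon^2 + \varepsilon^3}{\varepsilon}
  = 3x^2 + \underbrace{3x\varepsilon + \varepsilon^2}_{\text{higher-order error}}
  \;\xrightarrow{\;\varepsilon \to 0\;}\; 3x^2 .
```

Calculus keeps only the leading term, which is why the resulting formula, $\frac{d}{dx}x^3 = 3x^2$, is so simple.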

But, you know, if you look a physicist in the eyes and ask, they’ll say: “The continuum doesn’t exist.” The continuum doesn’t exist, because, what do we know about it? The atomic models, elementary particles, there is no physics below 33 decimal places. There is no physical theory, you can’t even talk about distance below that.

On the other hand, the calculus ideal works beautifully, we have gravity, Einstein’s theory. By the way, Einstein’s theory hasn’t been connected to the standard model, which is the way the elementary particles interact, with these small distances getting down to Planck scale. In fact, Planck scale is sort of the scale in which gravity and the strong forces of nature are comparable.

Even the physicists use the continuum … like in a religious way! As if it exists! And they know it’s not true, because Newton’s calculus leads to classical physics, which is negated by quantum theory. But it’s so beautiful! Representation theory, Lie groups, it’s so beautiful, and they can make models, and the models work! But there is no basis somehow, there is something missing, right? In the physics theory.

So, fluid mechanics has been in between the classical and the quantum discussion, you might say, the statistical discussion. It has been in between, and in three dimensions … Well, in two dimensions it has theoretically been worked out, not computationally, but theoretically worked out. For the same mathematical reasons, this Ahlfors–Bers theory and this deformation theory works, analysis, it’s related to that, and I understood that. That was one reason I got in, I understand that, and half of that theory works in dimension three, but not the other half.

I was astonished to hear, in ’91 or ’92, that these basic hydrodynamics equations in three dimensions weren’t theoretically understood – whether they have solutions or not – because in dimension two it was all clearcut, and I understood why. They’re used all over the world by engineers to produce oil and by doctors to fix aneurysms. The latter use a little turbulence inside the aneurysms and do a little support thing here, doctors can do several a day, and they can fix people up that might die at any point.

How could it be true that 3D hydrodynamics was so mysterious? Also, at about that same time one was able to put things on a computer quite well, but there is still a limitation of a thousand grid points or so in each direction. A thousand by a thousand by a thousand, that is a billion. You calculate, but then there is a matrix problem, billion by billion, so that is beyond reach. So there is this definite limitation to what one can compute. This mathematical problem, which later became one of the Millennium Problems and which I had already been working on for about a decade before that, is beautiful, precise and so on. But it’s not practical. What is really important is: what can you understand at the scale where you can compute? And then maybe prove theorems too.
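The grid arithmetic in this answer can be checked with a few lines of Python. This is only a back-of-envelope sketch: like the interview, it counts one unknown per grid point, and it adds the assumption of a dense matrix stored as 8-byte floating-point entries. Practical solvers exploit sparsity, so the dense figure is an upper bound, not what such codes actually store.

```python
# Back-of-envelope sizes for the grid described above.
# Added assumptions: one unknown per grid point (as in the interview)
# and a dense matrix stored as 8-byte (float64) entries.

POINTS_PER_AXIS = 1_000
BYTES_PER_ENTRY = 8


def grid_points(n_per_axis: int, dims: int = 3) -> int:
    """Points in a uniform grid with n_per_axis points along each of dims axes."""
    return n_per_axis ** dims


def dense_matrix_entries(n_unknowns: int) -> int:
    """Entries in a dense n_unknowns-by-n_unknowns matrix."""
    return n_unknowns * n_unknowns


n = grid_points(POINTS_PER_AXIS)
entries = dense_matrix_entries(n)
bytes_needed = entries * BYTES_PER_ENTRY

print(f"grid points:    {n:.0e}")                   # 1e+09
print(f"matrix entries: {entries:.0e}")             # 1e+18
print(f"dense storage:  {bytes_needed:.0e} bytes")  # 8e+18 bytes
```

Eight times $10^{18}$ bytes is about eight exabytes, which illustrates why a "billion by billion" problem is out of reach if treated naively.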

The idea I had was, this is all about space – processes happening in space. And you’ve got the Newton continuum, which gives you a beautiful algebra picture of space: you have differential forms, calculus, the Leibniz rule for a product, you know. Great! It turns out that if you discretize the problem and put it on a computer, you’ve got to use difference quotients instead of derivatives, and they don’t satisfy the product rule: there is an $\varepsilon^2$-error, which divided by $\varepsilon$ is still an $\varepsilon$-error. But then $\varepsilon$ goes to $0$. On a computer it can’t; that error term is in every computation. The numerical analysts know this, of course, they know this much better than I do, but they don’t seem to have a theoretical way to approach it. So the idea is, as Poincaré told us, that all this topology, all the number games we were talking about before, which is quite deep, has to be done by breaking space into little chunks and doing some combinatorics with them. That is combinatorial topology, and it allows you to understand the non-linear aspect, which has a product structure. That has been my theme of understanding, and I have now been working on it for three decades, and I think I have made some progress recently.
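The failure of the product rule for difference quotients can be made exact. Writing $\Delta_\varepsilon f(x) = (f(x+\varepsilon) - f(x))/\varepsilon$ for the forward difference quotient, a direct expansion gives $\Delta_\varepsilon(fg) = (\Delta_\varepsilon f)\,g + f\,(\Delta_\varepsilon g) + \varepsilon\,(\Delta_\varepsilon f)(\Delta_\varepsilon g)$. The last term is the $\varepsilon$-error: it vanishes as $\varepsilon \to 0$ but not at a fixed grid spacing. The minimal Python sketch below (function names are illustrative, not from any library) measures the defect numerically and compares it with that predicted term.

```python
import math
from typing import Callable

Func = Callable[[float], float]


def forward_diff(f: Func, x: float, eps: float) -> float:
    """Forward difference quotient (f(x + eps) - f(x)) / eps."""
    return (f(x + eps) - f(x)) / eps


def leibniz_defect(f: Func, g: Func, x: float, eps: float) -> float:
    """Difference between Δ(fg) and the naive product rule (Δf)g + f(Δg)."""
    def fg(t: float) -> float:
        return f(t) * g(t)

    lhs = forward_diff(fg, x, eps)
    naive = forward_diff(f, x, eps) * g(x) + f(x) * forward_diff(g, x, eps)
    return lhs - naive


def predicted_defect(f: Func, g: Func, x: float, eps: float) -> float:
    """The extra term eps * (Δf)(Δg) from the exact discrete identity."""
    return eps * forward_diff(f, x, eps) * forward_diff(g, x, eps)


# The defect shrinks roughly in proportion to eps, but is never zero on a grid.
for eps in (1e-1, 1e-2, 1e-3):
    d = leibniz_defect(math.sin, math.exp, 0.5, eps)
    p = predicted_defect(math.sin, math.exp, 0.5, eps)
    print(f"eps={eps:.0e}  defect={d:.3e}  eps*Δf*Δg={p:.3e}")
```

For $f(x) = g(x) = x$ the defect is exactly $\varepsilon$, since $\Delta_\varepsilon(x^2) = 2x + \varepsilon$.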

Take the discretization that we have to do in order to calculate anything in fluid mechanics and the like: are you saying that we should make that a main object of study in itself?

Yes! We should study the full algebraic topology – this is going back to the beginning now: Poincaré duality, intersections, how things intersect, that’s the ring structure. You know, these objects in a manifold can be intersected, and that gives a ring structure.
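To make the ring structure concrete on a discretized space, here is a minimal Python sketch of one standard construction: the Alexander–Whitney cup product on simplicial cochains of a subdivided interval (the cup product is Poincaré dual to the intersection product). It is an illustration, not necessarily the construction Sullivan has in mind, and the list encoding is hypothetical: vertices $0,\dots,n$, edges $[i, i+1]$, a 0-cochain is a list of vertex values and a 1-cochain a list of edge values. With these conventions the Leibniz rule $\delta(fg) = \delta f \cup g + f \cup \delta g$ holds exactly at any mesh size, while commutativity fails at the cochain level: $\delta f \cup g - g \cup \delta f$ equals $\delta f \cdot \delta g$ edge by edge, an error of the same kind as in the product rule for difference quotients.

```python
from typing import List

# Hypothetical encoding: values on vertices (0-cochains) or on edges [i, i+1] (1-cochains).
Cochain = List[int]


def coboundary(f: Cochain) -> Cochain:
    """δf on edge [i, i+1] is f(i+1) - f(i)."""
    return [f[i + 1] - f[i] for i in range(len(f) - 1)]


def cup_00(f: Cochain, g: Cochain) -> Cochain:
    """Cup product of two 0-cochains: pointwise product on vertices."""
    return [a * b for a, b in zip(f, g)]


def cup_10(alpha: Cochain, g: Cochain) -> Cochain:
    """Alexander–Whitney: (alpha ∪ g)[i, i+1] = alpha[i, i+1] * g(i+1) (back vertex)."""
    return [alpha[i] * g[i + 1] for i in range(len(alpha))]


def cup_01(g: Cochain, alpha: Cochain) -> Cochain:
    """Alexander–Whitney: (g ∪ alpha)[i, i+1] = g(i) * alpha[i, i+1] (front vertex)."""
    return [g[i] * alpha[i] for i in range(len(alpha))]


def add(a: Cochain, b: Cochain) -> Cochain:
    return [x + y for x, y in zip(a, b)]


def sub(a: Cochain, b: Cochain) -> Cochain:
    return [x - y for x, y in zip(a, b)]


# Sample vertex values on a path with 5 vertices (4 edges).
f = [0, 1, 4, 9, 16]
g = [2, 3, 5, 7, 11]
df, dg = coboundary(f), coboundary(g)

# Exact Leibniz rule at the cochain level.
leibniz_lhs = coboundary(cup_00(f, g))
leibniz_rhs = add(cup_10(df, g), cup_01(f, dg))
print(leibniz_lhs, leibniz_rhs)  # [3, 17, 43, 113] [3, 17, 43, 113]

# Failure of commutativity equals the edgewise product δf · δg.
commutator = sub(cup_10(df, g), cup_01(g, df))
print(commutator, [a * b for a, b in zip(df, dg)])  # [1, 6, 10, 28] [1, 6, 10, 28]
```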

Do you think the Navier–Stokes problem, which we’ve been talking about, is one of the hardest Millennium Prize Problems?

No idea. I’m not even concerned with it as a Millennium Problem. I’d love to prove it, but I’d rather understand some variant of it. I mean, what made this dictionary stuff so interesting in a way, there were several Fields Medals there and stuff like that, was that they had these pictures of the Mandelbrot set. Once a waiter came along while we were working on it, and he said: “Oh, that’s the Mandelbrot set.” Everybody knows the Mandelbrot set, right? There are good computations of the Mandelbrot set; you can zoom in to any scale, it gets more and more complicated, it’s beautiful, like a fern or something. And you go deeper, and then there is a new thing, you know, it’s precise. And that has led to many statements and conjectures, half of which have become theorems, and half of which are still open. So it has been a very active field. We don’t have such good computations for fluids in general. We don’t have enough understanding. We can just try: if it works, good; if it doesn’t work, you know, bad. So the idea is to put more conceptual work into the problem.
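A common way to compute pictures of the Mandelbrot set is the escape-time test: a parameter $c$ belongs to the set when the orbit of $0$ under $z \mapsto z^2 + c$ stays bounded, and once $|z| > 2$ the orbit is known to escape to infinity. The minimal Python sketch below uses that test; the iteration cap, the viewing window and the ASCII rendering are arbitrary choices for illustration. Zooming in amounts to shrinking `x_range` and `y_range` around a point, although with ordinary 64-bit floats and a fixed iteration cap, deep zooms eventually become unreliable.

```python
from typing import Tuple


def in_mandelbrot(c: complex, max_iter: int = 200) -> bool:
    """Escape-time test: iterate z -> z*z + c starting from z = 0.

    If |z| ever exceeds 2, the orbit escapes, so c is outside the set.
    Surviving max_iter steps is only evidence that c is inside; points
    near the boundary may need many more iterations.
    """
    z = 0j
    for _ in range(max_iter):
        z = z * z + c
        if abs(z) > 2:
            return False
    return True


def ascii_mandelbrot(
    width: int = 60,
    height: int = 24,
    x_range: Tuple[float, float] = (-2.2, 0.8),
    y_range: Tuple[float, float] = (-1.2, 1.2),
) -> str:
    """Render the set as text: '#' for (probably) inside, '.' for outside."""
    rows = []
    for j in range(height):
        y = y_range[1] - j * (y_range[1] - y_range[0]) / (height - 1)
        row = []
        for i in range(width):
            x = x_range[0] + i * (x_range[1] - x_range[0]) / (width - 1)
            row.append("#" if in_mandelbrot(complex(x, y)) else ".")
        rows.append("".join(row))
    return "\n".join(rows)


print(ascii_mandelbrot())
```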

To use Poincaré’s ideas: to break space up into combinatorial pieces, see how they interact, put other pieces to cover the breaks, which reveals the Poincaré duality, and put all that into the computer programs that are treating the Navier–Stokes equation.

You’ve said several times, that simplicity is the thing. When Atle Selberg was interviewed two years before he died, one of the things he stressed very much, was, and we quote him with a direct translation from Norwegian: “I believe that it is the simple things that will survive in mathematics.” Would you agree with that?

Oh yeah, of course. C’est évident! You know, like a screwdriver. It’s going to last forever, if it’s simple, and it’s useful. I’ll go even further, the goal of mathematics is to simplify everything. I think that the complicated things can be simplified.

Actually, Selberg mentioned Hermann Weyl as a prime example of a person that could attack a problem, simplify it and solve it.

I think that is a good method, because there are these fundamental points, like the moments I was describing with the graduate students, that organize everything. They aren’t easy to find, you know. What are the central points? You don’t know a priori. And you start by getting a sense of it; it has to do with the structure: what is the structure of the situation. A little “Grothendieck-like”.

The time is …

I’m not tired! I know this phenomenon; if hours are late and the mathematician one is talking to is tired, then one just asks him a question about what he is doing, right? And he starts talking, and suddenly he’s full of energy again!

This is going to be the last question, we promise! During our preparatory Zoom meeting we mentioned an 1828 quote of Abel’s we’d like you to comment on.

One should give a problem such a form that it is possible to solve it, something one can always do with any problem. In presenting a problem in this manner, the actual wording of it contains the germ to its solution, and shows the route one should take. I have treated several topics in analysis and algebra in this manner, and although I have often posed myself problems that surpass my powers, I have nevertheless attained a great number of general results that have shed a broad light on the nature of these quantities, the knowledge of which is the object of mathematics.

Do you have any comment on this?

The formulation of the problem is very important. Even more, a given problem may not be the correct formulation of the problem. Every problem stands, if it’s well-defined, but it could be that there is a slightly different version of the problem which is more natural and will be successfully solved, you know.

I’m willing to change the problem, while it sounds like Abel is trying to take the problem as given and put it in its best perspective. I’m also willing to change a problem slightly, to one that can be solved, right? But I certainly agree with that.

Another thing that I’ve noticed, as I’ve been around doing this for a long time, is that when a subject is sort of complete, you can look back, you know, it’s very easy to close the barn door after the horse has escaped. You know that you should have done it before. When you look at the final story, you would say, “Jeez, if we had started over here, then it would be natural to do this, and then you would have gotten there very quickly.” Using just a simple picture of what has happened.

So, if you are in a situation where you don’t have that, look for it. That is kind of what Abel said.

On behalf of the Norwegian Mathematical Society and the European Mathematical Society and the two of us we would like to thank you very much for this most interesting interview.

It was my pleasure, I assure you!

Thank you!

Cite this article

Bjørn Ian Dundas, Christian F. Skau, Interview with Abel laureate 2022 Dennis Sullivan. Eur. Math. Soc. Mag. 125 (2022), pp. 20–30

DOI 10.4171/MAG/108